The Government Suing Anthropic Just Told Banks to Use Its AI

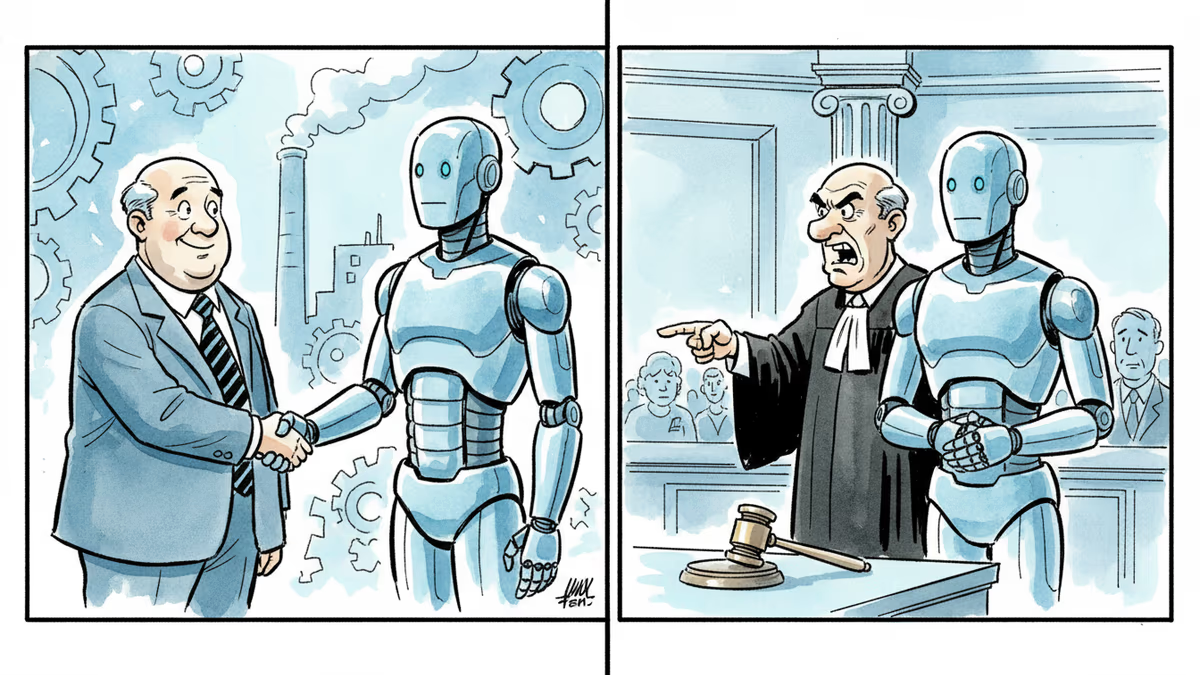

The Trump administration is battling Anthropic in court while simultaneously urging Wall Street banks to test its Mythos AI model. What does this contradiction reveal about US AI policy?

The Administration Suing Anthropic Just Recommended Its AI to Wall Street

This week, two things happened at once inside the US government. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell called a meeting with bank executives and encouraged them to test Anthropic's new Mythos model for detecting security vulnerabilities. Meanwhile, the Trump administration was actively litigating against Anthropic in federal court.

The same government. The same week. Opposite postures toward the same company.

What Actually Happened

According to Bloomberg, the Bessent-Powell meeting wasn't a casual suggestion. Senior officials from the Treasury and the Fed convening with bank executives to recommend a specific AI product is about as close to a government endorsement as you can get without a formal mandate.

JPMorgan Chase was already named as an initial partner with access to Mythos. But Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley are reportedly testing it too. That's effectively the entire top tier of US banking exploring a single AI model in parallel — a level of coordinated adoption that rarely happens without a nudge from above.

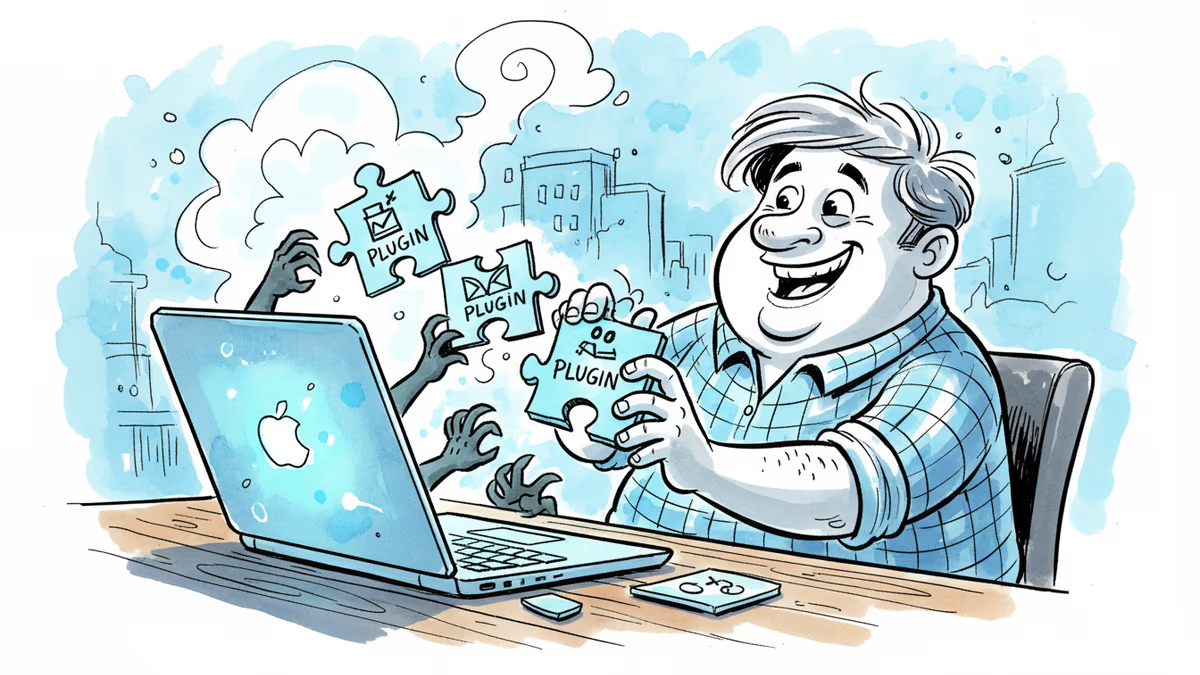

Anthropic announced Mythos this week but deliberately restricted access. The stated reason is striking: the model wasn't specifically trained for cybersecurity, yet it's exceptionally good at finding security vulnerabilities. Too good, apparently, to release freely. Some observers take this at face value as a genuine safety precaution. Others read it as a calculated enterprise sales move — scarcity as marketing.

Across the Atlantic, the Financial Times reports that UK financial regulators are also assessing the risks Mythos poses to financial systems. This isn't a purely American conversation.

The Lawsuit in the Room

The legal conflict is what makes this more than a routine AI adoption story.

Anthropic has been fighting the Trump administration over the Department of Defense's decision to designate the company as a supply-chain risk — a classification that carries significant consequences for government contracting and national security partnerships. The designation came after negotiations broke down over Anthropic's attempts to limit how its AI models can be deployed by the government. Anthropic didn't want its technology used for certain military applications. The administration wouldn't accept those conditions.

So the DoD labeled them a risk. And now the Treasury and the Fed are telling banks to use their product.

This isn't just bureaucratic miscommunication. It exposes something more structural: the US government has no unified AI policy framework. Different agencies are pulling in different directions, and the gap between national security posture and financial regulatory posture has rarely been this visible.

Three Ways to Read This

For the banks, the politics are largely beside the point. Financial institutions face thousands of cyberattack attempts daily. If Mythos genuinely outperforms existing tools at finding vulnerabilities, that's a competitive and regulatory imperative — especially when senior officials from Treasury and the Fed are in the room encouraging adoption. It also provides institutional cover: if something goes wrong, the banks can point to the government recommendation.

For cybersecurity professionals, the more uncomfortable question is about dual use. A model that's exceptionally good at finding vulnerabilities is, by definition, also useful for exploiting them. Restricting Mythos access doesn't prevent adversarial actors from developing comparable capabilities — it just slows the timeline. The financial sector may be acquiring a powerful defensive tool while simultaneously raising the ceiling on what attackers will eventually build toward.

For Anthropic itself, the situation is paradoxical but not necessarily bad. Being recommended by the Treasury and the Fed while fighting the DoD in court is an unusual position, but it demonstrates the company's technology is considered credible enough to deploy in systemically important institutions. Whether this creates leverage in the legal dispute, or complicates it, remains to be seen.

What Comes Next

Two plausible trajectories are worth watching.

In the first, pragmatism wins. The legal dispute quietly moves toward settlement, the financial sector's adoption of Mythos creates a de facto partnership between Anthropic and the US government, and the supply-chain risk designation gets revisited. Commercial reality softens the political conflict.

In the second, the principle holds. Anthropic refuses to cede ground on how its models can be used by government actors. The administration escalates. The case becomes a landmark test of whether AI companies can impose ethical constraints on government customers — and what happens when they try.

The UK regulatory response adds a third variable. If British financial regulators move to restrict or condition Mythos use, it could mark another divergence point between US and European approaches to AI governance in critical infrastructure — a divide that's been widening since the EU AI Act.
