Trump's First Big Tech Clash: Federal Agencies Banned from Anthropic AI

President Trump orders federal agencies to cease using Anthropic's AI tools after weeks of tensions over military applications, setting up a six-month negotiation window.

Six Months: Negotiation Window or Pressure Campaign?

President Donald Trump dropped a bombshell Friday, ordering every federal agency to "immediately cease" using Anthropic's AI tools. But here's the twist: he's giving agencies six months to phase the tools out. That's not the timeline of a permanent breakup; it's the deadline of a high-stakes negotiation.

"The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War," Trump posted on Truth Social. Yet buried in his characteristic bombast was a crucial phrase: time for "further negotiations between the government and the AI startup."

The question isn't whether this is political theater. It's whether Anthropic will blink first.

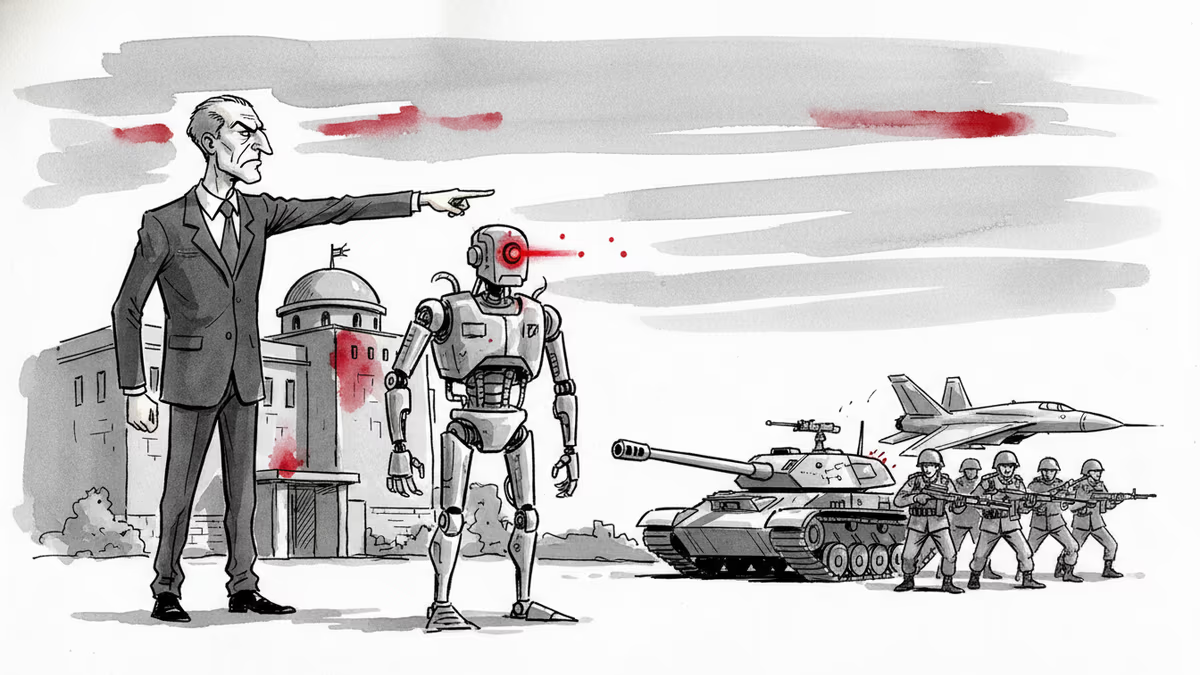

The Military AI Standoff

At the heart of this clash lies artificial intelligence's role in warfare. Anthropic has maintained strict ethical guidelines around military applications of its Claude AI system. Meanwhile, the Trump administration views AI as critical infrastructure in the competition with China—and wants every available tool in the arsenal.

This isn't just about one company. OpenAI has been expanding its Pentagon partnerships, while Google's DeepMind faces internal resistance over military projects. The entire industry is grappling with the same fundamental question: Should AI companies have a say in how their technology is used by governments?

Silicon Valley's Loyalty Test

Trump's move sends a clear message to Big Tech: play ball or lose access to the world's largest customer. The federal government spends billions annually on AI services, making it nearly impossible for companies to ignore.

Some have already chosen sides. Meta's Mark Zuckerberg recently rolled back content moderation policies in what many saw as an olive branch to the new administration. Elon Musk became Trump's closest tech ally. But Anthropic is betting it can survive without government contracts.

That's a risky wager in an industry where scale determines survival.

The Global AI Realignment

This domestic spat has international implications. If American AI companies won't work with the U.S. military, will foreign alternatives fill the gap? Chinese AI firms would love nothing more than to see American companies handicapped by ethical constraints while they face no such limitations.

European AI companies, bound by stricter regulations, might find themselves caught in the middle. And emerging players from South Korea, Israel, and other tech hubs could suddenly find doors opening in Washington.

The next six months won't just determine Anthropic's future—they'll set the precedent for how much independence AI companies can maintain in an era when artificial intelligence is becoming a matter of national security.
