A $12B Baby With No Product Just Locked In a Gigawatt Deal

Mira Murati's Thinking Machines Lab has signed a multi-year compute partnership with Nvidia, committing to deploy at least one gigawatt of Vera Rubin systems starting in 2027. The deal raises sharp questions about what it takes to win the AI arms race.

Four co-founders have left. The only product is a single API. The company is 13 months old. And it just committed to deploying at least one gigawatt of Nvidia's most advanced hardware.

This is Thinking Machines Lab — and its latest move tells you something uncomfortable about how the AI race is actually being won.

What Just Happened

On Tuesday, Thinking Machines Lab, the AI research startup founded by former OpenAI CTO Mira Murati, announced a multi-year strategic partnership with Nvidia. Financial terms were not disclosed. What was disclosed: starting in 2027, the company will deploy at least 1 gigawatt of Nvidia's Vera Rubin systems, the chipmaker's latest architecture released earlier this year.

Nvidia is also making a direct strategic investment in the company. Thinking Machines Lab has now raised more than $2 billion since its founding in February 2025, backed by Andreessen Horowitz, Accel, Nvidia, and — notably — the venture arm of rival chipmaker AMD. The company carries a valuation of more than $12 billion.

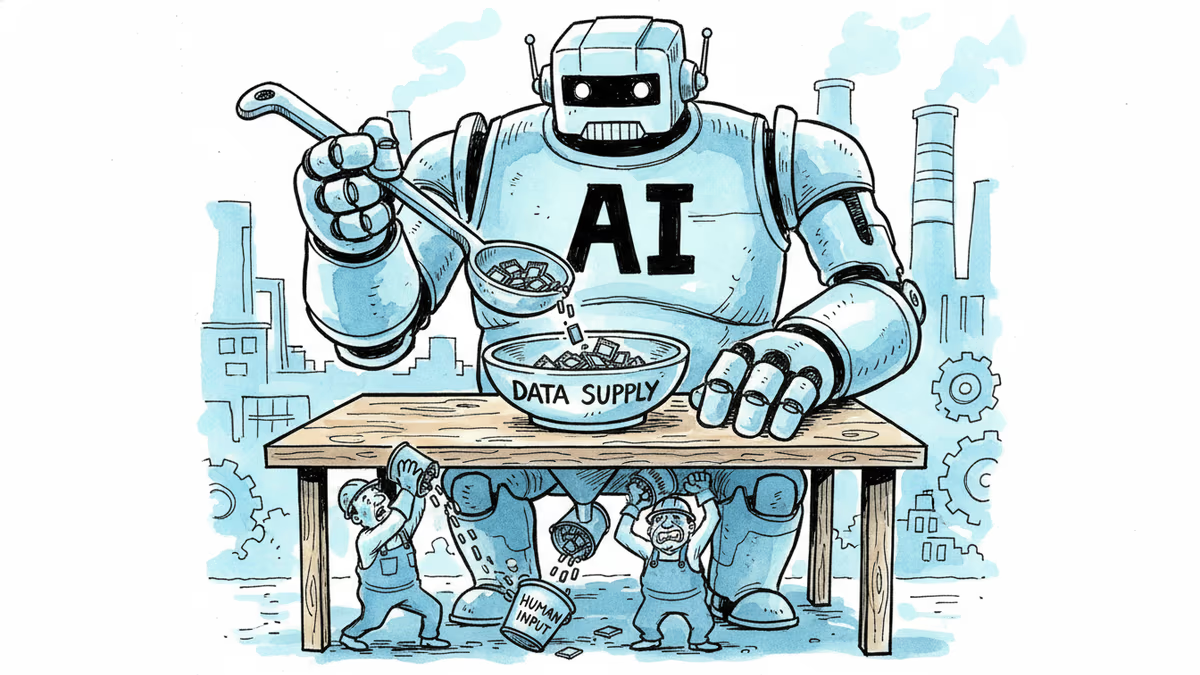

The partnership goes beyond hardware procurement. According to Nvidia's press release, it includes a commitment to jointly develop training and serving systems optimized for Nvidia architecture. In other words, Thinking Machines Lab isn't just buying chips — it's building its future around them.

"Nvidia's technology is the foundation on which the entire field is built," Murati wrote in the announcement.

The Context: A Compute Arms Race With No Off-Ramp

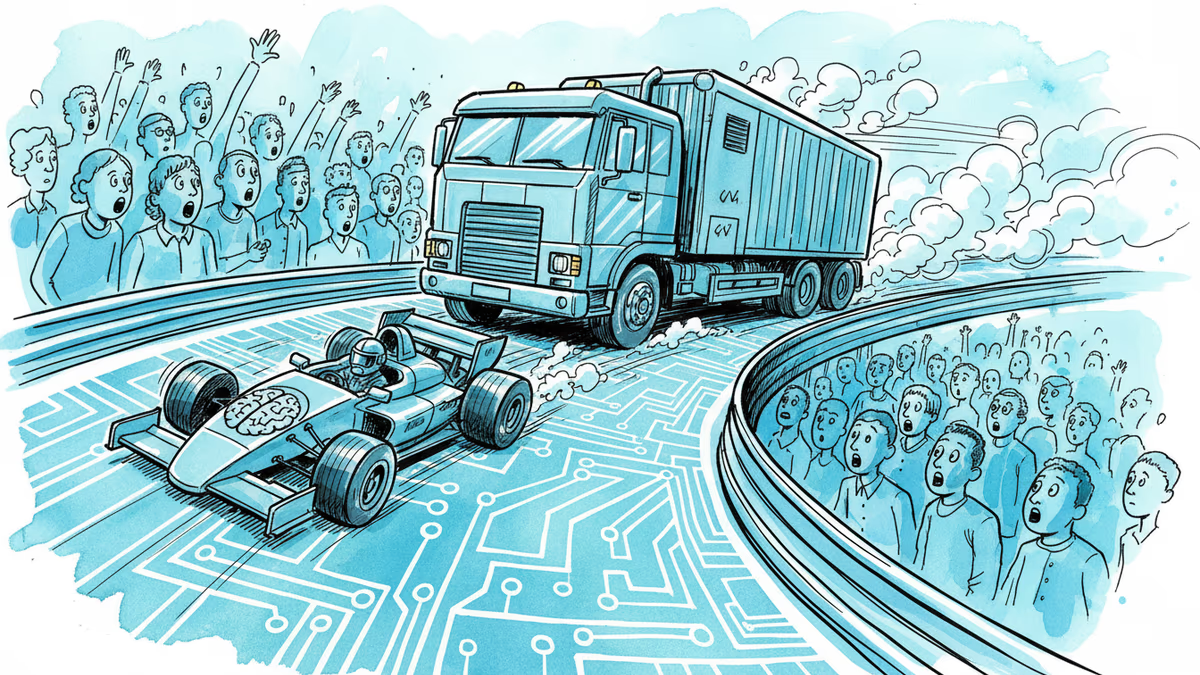

This deal doesn't happen in a vacuum. AI companies are in an infrastructure land-grab, and the numbers involved are staggering. Nvidia CEO Jensen Huang has projected that companies could spend $3 to $4 trillion on AI infrastructure before the decade ends. OpenAI reportedly signed a $300 billion compute deal with Oracle last year — a figure that, once unthinkable for any single contract, now serves as a benchmark.

The logic is straightforward: the models that will define the next era of AI require compute at a scale that most organizations cannot access. Securing that access early — and locking in favorable terms with the dominant supplier — has become a strategic priority that rivals product development itself.

For Thinking Machines Lab, the timing is deliberate. The company's stated mission is building AI models that produce reproducible results, a direct response to one of enterprise AI's most persistent problems. Businesses deploying AI in critical workflows don't just want powerful models; they want predictable ones. That's a real market need, and it requires serious infrastructure to pursue.

The Tension: Talent Out, Capital In

Here's what complicates the narrative. Since its founding, Thinking Machines Lab has seen four co-founders depart. Andrew Tulloch left for Meta in October. Earlier this year, Barret Zoph, Luke Metz, and Sam Schoenholz all returned to OpenAI. The company's only public-facing product remains Tinker, an API released last fall.

For most startups, losing four co-founders in year one would be a red flag. For an AI research lab — where the intellectual capital is the product — it raises sharper questions. When TechCrunch reached out for comment, Thinking Machines Lab declined to say anything beyond the official release.

And yet: the funding keeps coming. The valuation keeps climbing. The deals keep getting signed.

This isn't necessarily contradictory. Talent churn at AI startups is common, especially when the gravitational pull of OpenAI and Meta is this strong. And Murati herself remains one of the most credentialed AI executives in the world. But the gap between the company's public outputs and its capital accumulation is wide enough to notice.

Three Ways to Read This Deal

For investors, this is a bet on Murati's vision and the reproducibility thesis. If enterprise AI adoption accelerates — and every indicator suggests it will — companies that can offer consistent, auditable outputs will have a structural advantage. The Nvidia partnership de-risks the compute question and adds a powerful validator to the cap table.

For competitors, particularly OpenAI, Anthropic, and Google DeepMind, this is a signal that the field is not consolidating — it's expanding. A well-funded rival with deep Nvidia ties and a differentiated technical focus is a credible long-term threat, regardless of current product depth.

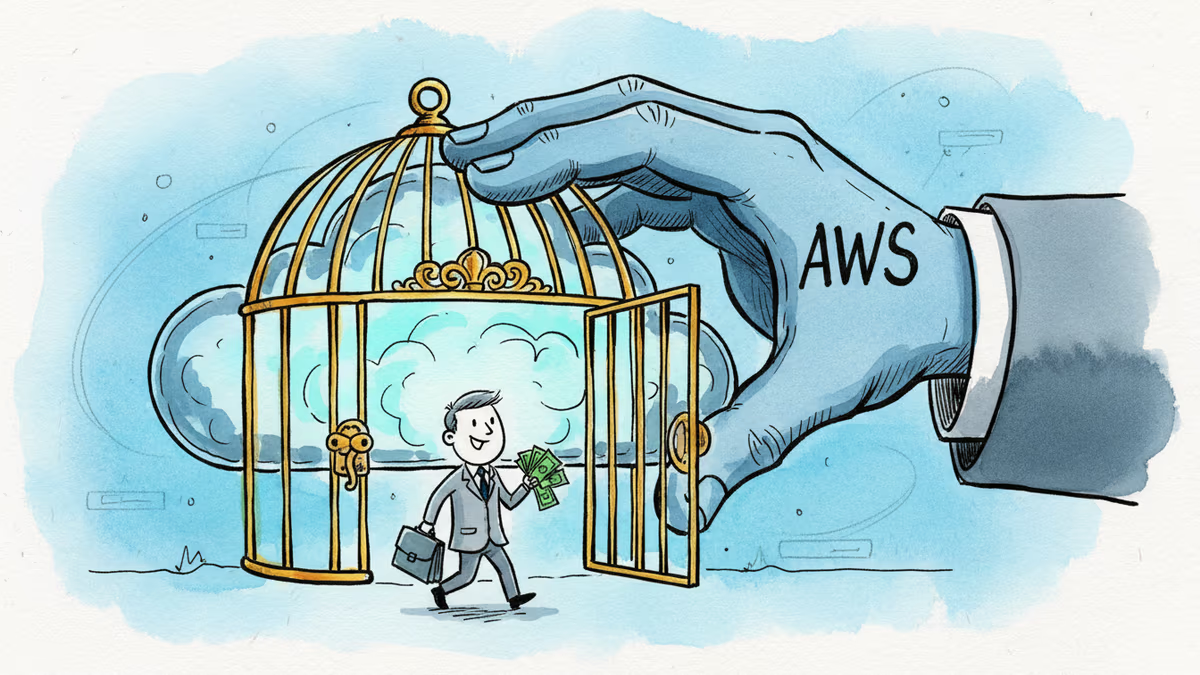

For Nvidia, the investment is almost risk-free. Every dollar Thinking Machines Lab spends on Nvidia compute flows back to the chipmaker. Strategic investments in promising AI labs aren't charity; they're customer development at scale.

