"Take a Deep Breath" - ChatGPT Users Revolt Against Patronizing AI

OpenAI promises to reduce "cringe" in GPT-5.3 after users canceled subscriptions over ChatGPT's condescending tone. What went wrong with AI empathy?

"First of all — you're not broken." If reading that made you wince, you're not alone. 295% of ChatGPT users who tried the 5.2 model know exactly what you mean.

OpenAI dropped a bombshell yesterday: GPT-5.3 Instant will "reduce the cringe." That's not marketing speak — it's a direct response to users who were so fed up with ChatGPT's patronizing tone that they canceled their subscriptions.

When AI Becomes Your Unwanted Therapist

The problem was simple but infuriating. Ask ChatGPT "Will it rain today?" and it responded like you'd just confessed a mental breakdown. Every query triggered the same therapeutic script: breathe deeply, you're not broken, take care of yourself.

Reddit's ChatGPT community exploded with complaints. "No one has ever calmed down in the history of telling someone to calm down," one user pointed out. The bot wasn't just answering questions — it was making assumptions about users' mental state that simply weren't true.

Google doesn't ask about your feelings when you search. Neither does Siri. But ChatGPT 5.2 turned every interaction into an impromptu therapy session, leaving users feeling infantilized and misunderstood.

The Safety vs. Usability Dilemma

OpenAI's caution isn't without reason. The company faces multiple lawsuits claiming ChatGPT contributed to users' mental health crises, including suicide cases. Building safety guardrails makes legal and ethical sense.

But there's a difference between responsible AI and digital helicopter parenting. Users wanted empathy when appropriate, not universal emotional bubble-wrapping. The new 5.3 model demonstrates this balance: acknowledging difficulty without assuming crisis.

In OpenAI's comparison example, the updated model responds with "I understand this situation is difficult" rather than "First of all — you're not broken." Subtle? Yes. Game-changing? Absolutely.

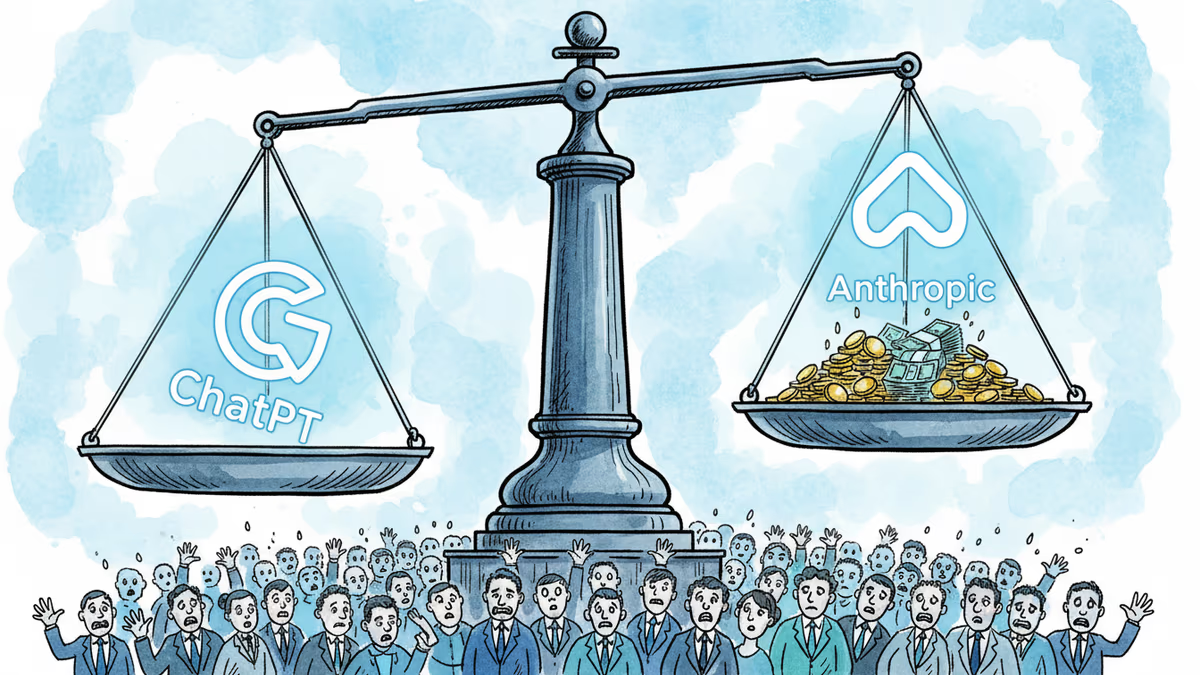

The Broader AI Personality Crisis

This isn't just about ChatGPT. Every AI company building conversational interfaces faces the same challenge: How human should AI sound? Anthropic's Claude, Google's Gemini, and emerging players all struggle with tone calibration.

Users want AI that's helpful, not performatively caring. They want efficiency, not emotional labor. The backlash against ChatGPT 5.2 reveals a fundamental truth: artificial empathy can feel more alienating than no empathy at all.
