Supreme Court Says No to AI Art Copyright—But the Real Battle Just Began

US Supreme Court declines AI copyright case, leaving AI-generated art unprotected. What this means for creators, tech giants, and the future of creativity.

The $2 Billion Question Just Got Answered

The US Supreme Court dropped a bombshell Monday by declining to hear Stephen Thaler's case about AI-generated art copyright. The computer scientist from Missouri had been fighting since 2019 to get copyright protection for an image called "A Recent Entrance to Paradise," which was created entirely by his algorithm. The Copyright Office said no. Lower courts said no. Now the Supreme Court has let those rulings stand without weighing in itself, which in practice means the answer is still no.

But here's what makes this more than just another legal defeat: Thaler's case could've rewritten the rules for a rapidly exploding industry where AI tools generate everything from marketing copy to movie scripts.

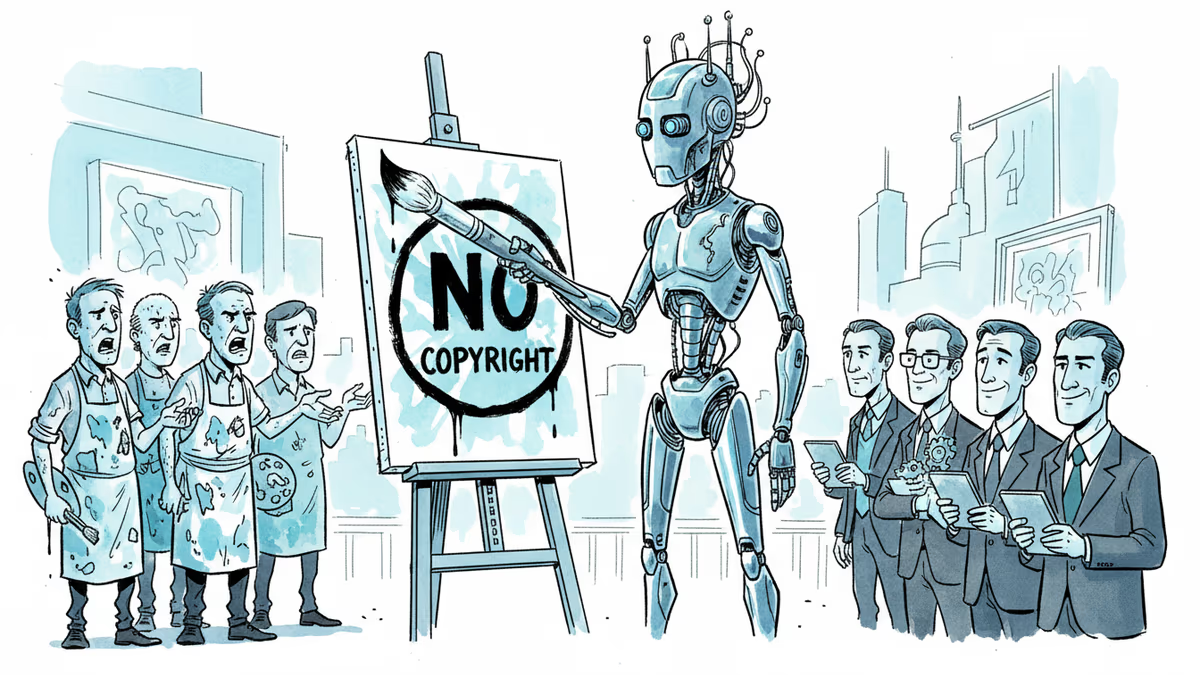

Artists Breathe Easy, Tech Bros Sweat

Traditional artists are celebrating—and for good reason. "If AI could claim copyright, we'd be competing against machines that never sleep, never demand payment, and pump out thousands of works daily," says Maria Rodriguez, a freelance illustrator from Brooklyn.

Meanwhile, the AI art community is split. Some see this as discrimination against new creative tools. Others worry about their business models. Take Midjourney users who sell AI-generated prints on Etsy: their "original" works now sit in a legal gray zone, and anything produced entirely by the machine has no copyright protection at all.

The irony? Anyone can freely copy, modify, and commercialize purely AI-generated content without permission. That's a double-edged sword for the very companies pushing AI creativity tools.

Big Tech's Billion-Dollar Headache

OpenAI, Google, and Adobe built entire business models around AI-generated content. Without a human author, purely AI-generated outputs get no copyright protection and are effectively in the public domain from the moment they are created. That's potentially catastrophic for subscription services promising "unique, copyrightable content."

But here's the twist: it might actually help them. Without copyright restrictions, these companies can freely train their models on any AI-generated content—including competitors' outputs. It's a legal free-for-all that could accelerate AI development while making individual AI creations worthless.

Adobe already hedged its bets by focusing on AI as a creative assistant rather than a replacement. Their stock barely moved after the news.

The Global Chess Game

While America draws a hard line, other countries are taking different approaches. The EU leans toward strict human authorship requirements. China explores limited AI copyright protection. The UK considers case-by-case evaluation.

This fragmentation creates a bizarre scenario: an AI artwork might have copyright protection in Beijing but not in New York. For global platforms and creators, that's a compliance nightmare waiting to happen.
