2026 US State Tech Laws: A New Era for AI and Digital Rights

Explore the new US state tech laws taking effect in 2026, covering AI transparency, the right to repair, and crypto protections in CA, CO, and WA.

While Congress remains locked in partisan dysfunction, state legislatures aren't waiting around. As of January 1, 2026, a wave of new state regulations is taking effect across the US, changing how tech companies operate. According to The Verge, these laws cover everything from AI transparency to the right to repair, marking a significant shift in consumer power.

2026 US State Tech Laws: Expanding Consumer Protection

Starting today, Colorado residents have the right to crypto ATM refunds, a move designed to protect users from fraudulent transactions. Meanwhile, both Colorado and Washington have enacted wide-ranging electronics repair laws. It's no longer just about iPhones; these rules cover a vast array of digital devices and require manufacturers to make parts and manuals available to the public.

AI Systems and the Battle for the App Store

In California, new rules on AI system transparency are officially in effect. Companies must now be more open about how their algorithms make decisions. However, it's not all smooth sailing for regulators. A last-minute court ruling in Texas has temporarily blocked a controversial law that would have required age verification for app stores, highlighting the ongoing tension between state mandates and judicial oversight.

Authors

Related Articles

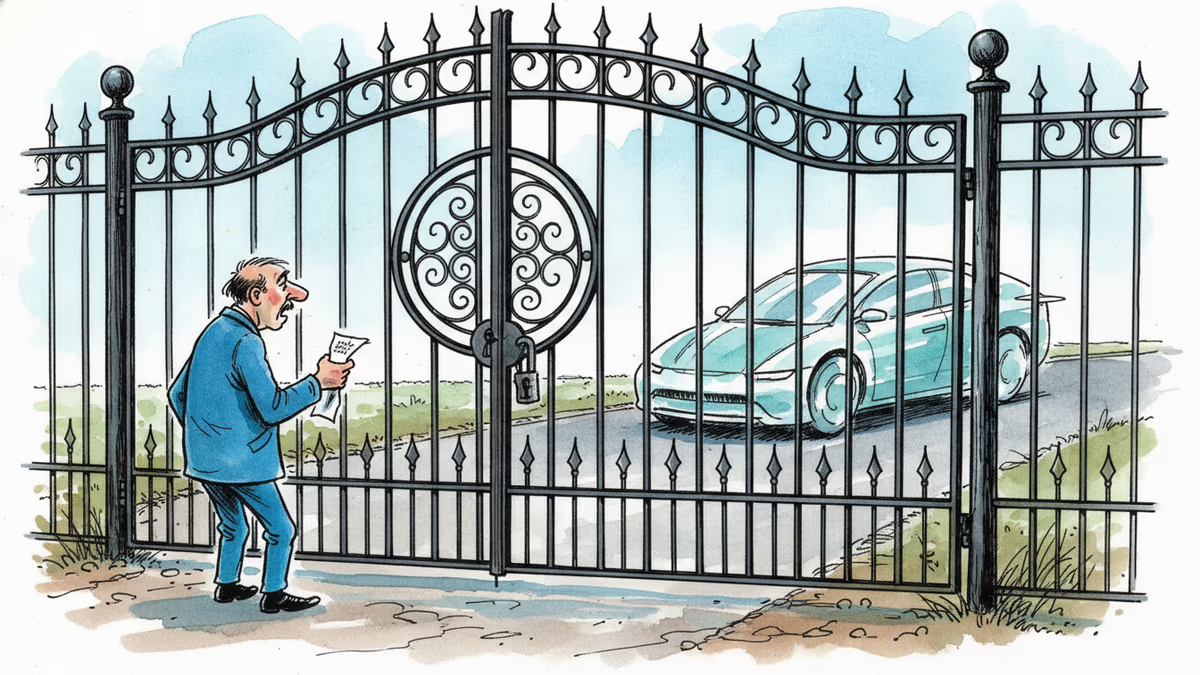

Elon Musk confirmed on Tesla's Q1 2026 earnings call that Hardware 3 vehicles will never receive unsupervised Full Self-Driving — locking out millions who paid for the feature.

Florida is investigating OpenAI over alleged links to a mass shooting. As AI firms quietly restrict their most powerful tools, a harder question is taking shape: who's legally responsible when AI helps someone plan violence?

Florida's AG is investigating OpenAI over a campus shooting, child safety risks, and national security concerns. What it means for AI regulation in America.

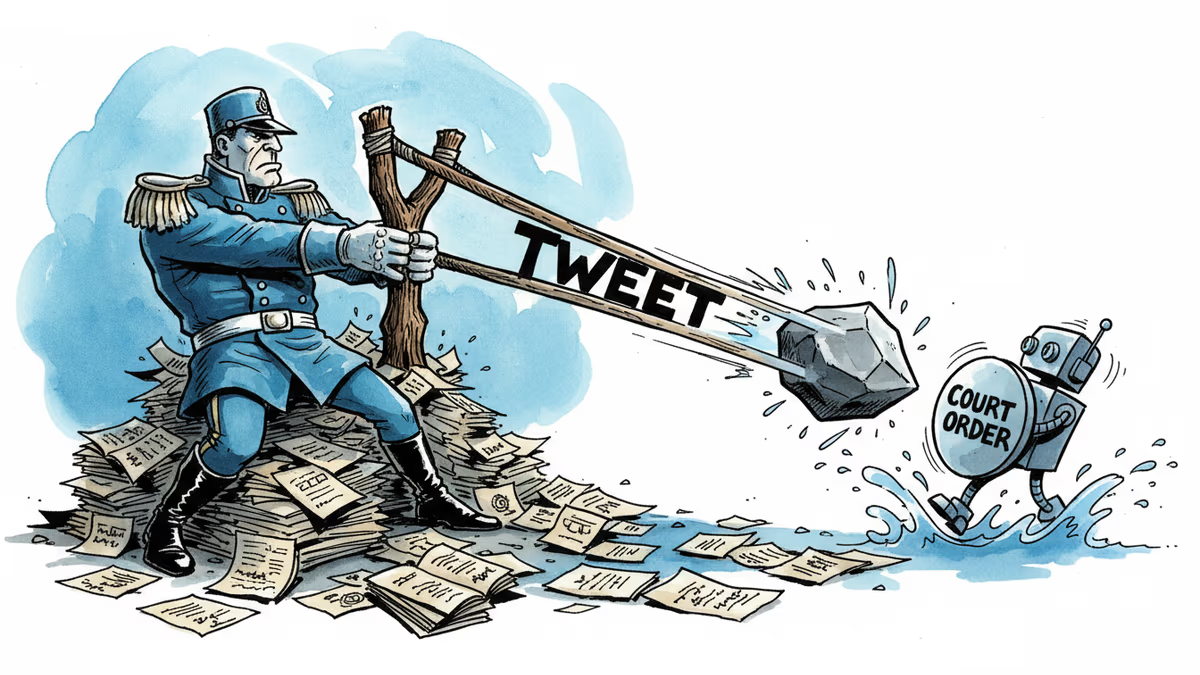

A California judge blocked the Pentagon from labeling Anthropic a supply chain risk. The 43-page ruling exposes a pattern: tweet first, lawyer later. What it means for AI governance and the limits of government leverage.

Thoughts

Share your thoughts on this article

Sign in to join the conversation