When the Pentagon Picked a Culture War Over a Contract Dispute

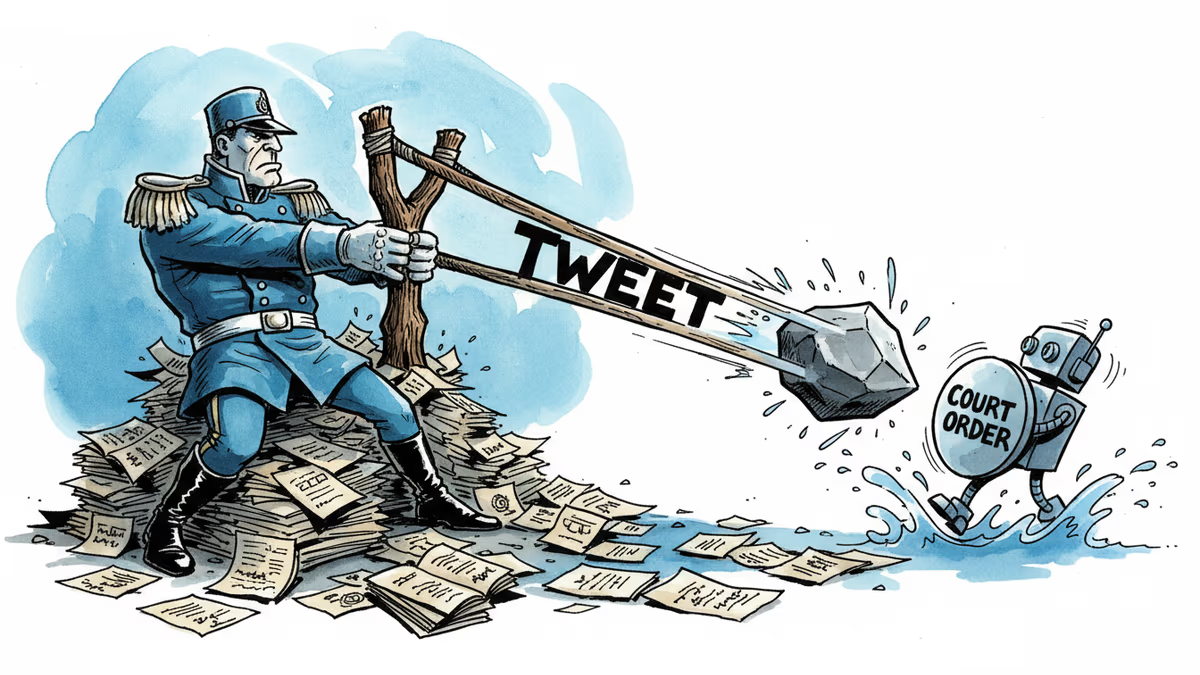

A California judge blocked the Pentagon from labeling Anthropic a supply chain risk. The 43-page ruling exposes a pattern: tweet first, lawyer later. What it means for AI governance and the limits of government leverage.

The Pentagon Needed Six Months to Stop Using the AI It Was Trying to Ban

That's not a paradox. That's the whole story.

On February 27, President Trump called Anthropic employees "Leftwing nutjobs" on Truth Social and ordered every federal agency to stop using the company's AI. Four days later, the Pentagon formally designated Anthropic a supply chain risk. One month after that, a federal judge in California issued a 43-page opinion halting that designation—and in the process, documented what she found to be a pattern of the government saying things in public it couldn't defend in court.

The case isn't over. But what's already on the record is striking.

What Actually Happened

For most of 2025, the U.S. government used Anthropic's Claude without complaint. Defense employees accessed it through Palantir under a government-specific usage policy that Anthropic co-founder Jared Kaplan said "prohibited mass surveillance of Americans and lethal autonomous warfare." No formal objections. No flags. Business as usual.

The friction started only when the government moved to contract with Anthropic directly. Negotiations broke down. And instead of invoking the existing legal process for contract disputes, the administration went to social media.

Trump's Truth Social post came first. Defense Secretary Pete Hegseth followed, announcing he'd label Anthropic a supply chain risk and declaring that "no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic." Strong words. The government's own lawyers later admitted in court that Hegseth doesn't actually have the power to do that, and agreed with the judge that the statement had "absolutely no legal effect at all."

The supply chain risk designation itself requires a specific set of procedural steps. Judge Rita Lin found that Hegseth hadn't completed them. Letters to congressional committees claimed less drastic options had been considered and ruled out—but offered no details. The government argued Anthropic could deploy a "kill switch" against government systems. Its lawyers later admitted there was no evidence of that.

Dean Ball, who worked on AI policy for the Trump administration before filing a brief supporting Anthropic, called the ruling "a devastating ruling for the government, finding Anthropic likely to prevail on essentially all of its theories."

Why the Timing Matters

The government wasn't just distracted when it pursued this case—it was fighting a war. Court documents indicate that senior Pentagon leadership spent the first five days of the Iran conflict simultaneously ordering airstrikes and compiling evidence that Anthropic was a saboteur of the U.S. government.

That's a resource allocation choice. And it tells you something about how seriously the administration takes what it sees as an AI culture war.

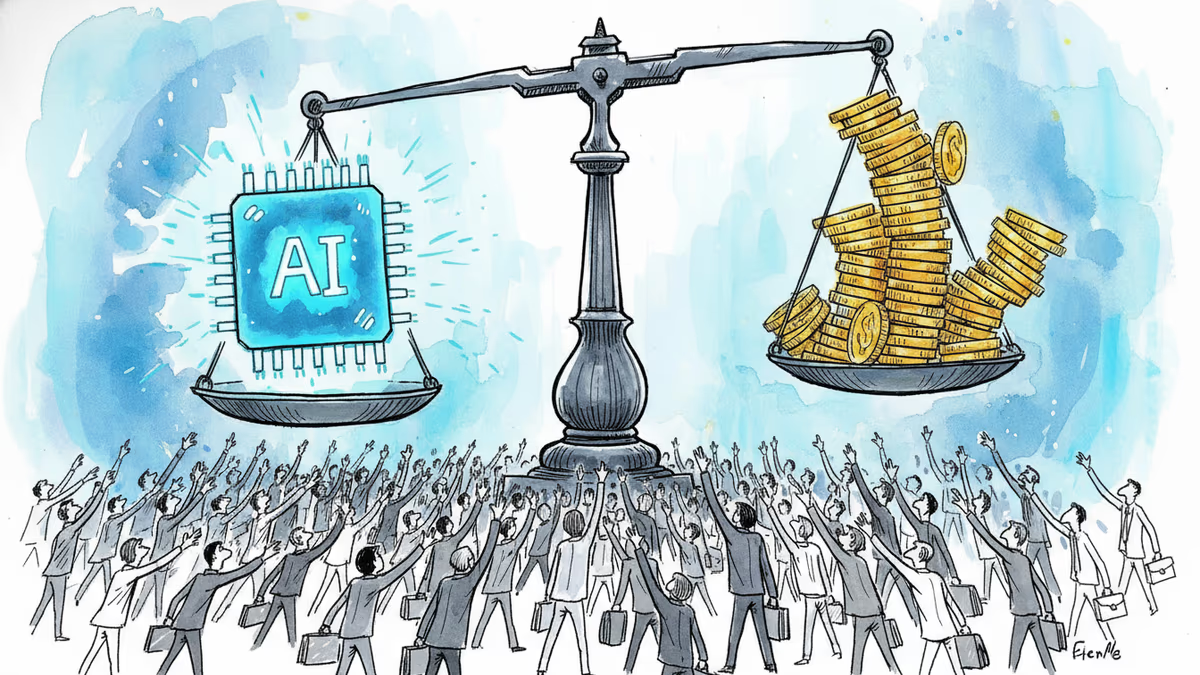

At the same time, Trump himself acknowledged the Pentagon would need six months to stop using Claude. The AI they were trying to punish was too embedded in operations to remove quickly. That's not a minor detail—it's a structural admission that the government's dependence on Anthropic's technology had already outpaced its political leverage over the company.

Three Ways to Read This

**For Anthropic:** The injunction is a win, but not a resolution. The company still has a second case pending in D.C., and the government is expected to appeal. More importantly, the informal pressure doesn't go away with a court order. Defense contractors who want to stay on good terms with the Pentagon now have little incentive to work with Anthropic, regardless of whether it carries an official designation. Charlie Bullock, a senior research fellow at the Institute for Law and AI, put it plainly: "The government can use mechanisms to apply pressure without breaking the law. It kind of depends how invested the government is in punishing Anthropic."

**For other AI companies:** This case is a data point, not a precedent that protects anyone else. OpenAI, Google DeepMind, and others are watching. The lesson could cut two ways: maintain ideological alignment with the administration to keep contracts, or hold the line on principles and trust the courts. The first path is easier in the short term. The second just got a little more credible.

**For investors and the broader market:** The case exposes a tension that isn't going away. AI companies increasingly depend on government contracts for scale and legitimacy. Governments increasingly depend on AI companies for operational capability. When those relationships fracture, whether over ethics policies, usage restrictions, or political allegiance, the fallout is messy, public, and expensive for both sides. The Anthropic case is unlikely to be the last one.

The Limits of Leverage

What the court documents ultimately reveal isn't just legal overreach. It's a miscalculation. The administration assumed it could use the supply chain risk designation as a blunt instrument to punish a company that wouldn't align politically. It turned out the instrument had rules attached to it, and the rules hadn't been followed.

The government still has tools. Informal pressure on contractors. Procurement prioritization. The slow erosion of Anthropic's position in the federal market without a single court filing. None of that is illegal. None of it requires winning in court.

But the public record now shows an administration that wanted a culture war with an AI company during the opening days of a military conflict—and couldn't make the legal case to justify it.
