The OpenAI Trial Is Exposing What Big Tech Doesn't Say Out Loud

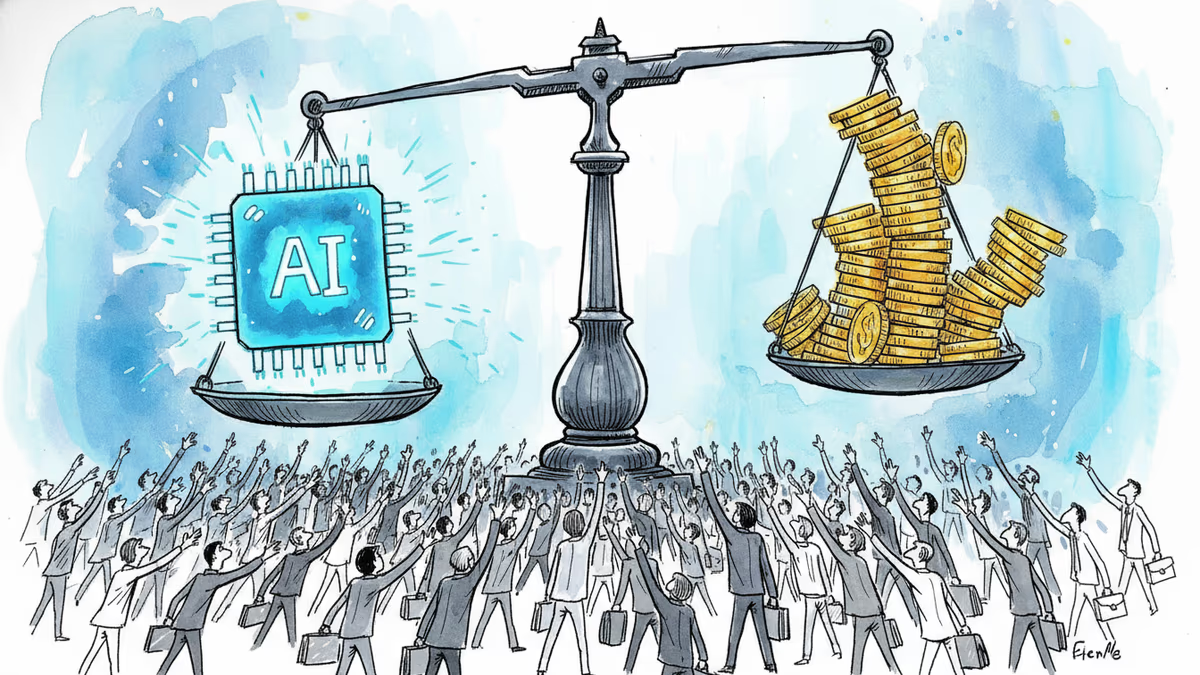

The Musk v. Altman trial in Oakland isn't just a contract dispute. It's become an unscripted window into how AI's most powerful figures actually operate—and who they think should control the technology's future.

How do you expose the inner workings of a trillion-dollar industry? Apparently, you put two of its founders under oath.

The Musk v. Altman trial opened last week in Oakland, California, and within days it had already produced admissions, text message leaks, and a judge visibly losing patience with arguments about the end of humanity. This is nominally a contract dispute. It's becoming something else entirely.

What's Actually Being Decided

Elon Musk is suing OpenAI, Sam Altman, and president Greg Brockman, alleging they breached a charitable trust. His argument: the millions he contributed around a decade ago were given on the understanding that OpenAI would remain a nonprofit, dedicated to safe AI for humanity's benefit—not a vehicle for commercial profit.

OpenAI's counter is blunt: Musk always knew a for-profit arm would be necessary. Building frontier AI costs billions. He wasn't deceived; he just changed his mind about who should be in charge.

The legal crux is timing. Charitable trust claims carry a statute of limitations: roughly three to four years from when the plaintiff discovered the alleged misconduct. Musk argues he only realized OpenAI had abandoned its mission in 2022. OpenAI's lawyers are working hard to show he knew much earlier. After the first week of testimony, it's unclear whether Musk has made that case convincingly to the judge and jury.

The stakes are real. OpenAI is reportedly planning an IPO this year. Even a partial ruling in Musk's favor—particularly one that unwinds the company's restructuring, which already involved negotiated compromises with the attorneys general of California and Delaware—could significantly complicate that timeline.

The Moments That Weren't in the Script

The trial's most striking exchange had nothing to do with contract law. When Musk's attorney declared, "We could all die as a result of AI," the courtroom went quiet. The judge's response was pointed: Musk runs a company in the exact same space. Why would anyone trust the future of humanity to him, either? Both sides then spent considerable time arguing over which billionaire was better positioned to steward AI safety—until the judge cut them off. This trial, she said sternly, is not about whether artificial intelligence has damaged humanity.

But that moment revealed something the formal legal arguments couldn't. Underneath the contract claims, this trial is a proxy war over who gets to define responsible AI development. The judge didn't want to go there. The lawyers couldn't stop going there.

Day four brought a different kind of revelation. Under cross-examination, Musk admitted that xAI distills OpenAI's models to train its own. He quickly added that this is standard practice across the industry. Reporters in the room started typing immediately. The man suing OpenAI for betraying its mission has been using OpenAI's work to build his competing AI company. Whether that's hypocrisy or just how the industry functions is itself an open question, but it complicates the narrative Musk is trying to sell.

Then came the text messages. Evidence introduced at trial showed Musk and Mark Zuckerberg coordinating to block OpenAI's restructuring—and even exploring a joint bid to acquire the assets of OpenAI's nonprofit. Two executives who publicly spar were, behind the scenes, aligning against a common rival.

What Comes Next—and Why It Matters Beyond the Verdict

The trial is expected to last roughly three weeks. A panel of nine jurors will deliver an advisory verdict, but the judge isn't bound by it—she makes the final call on both liability and remedy. Upcoming witnesses include Brockman, former chief scientist Ilya Sutskever, former CTO Mira Murati, and Microsoft CEO Satya Nadella. UC Berkeley computer scientist Stuart Russell will testify on AI safety, which is likely to reignite exactly the kind of debate the judge tried to shut down.

Outside the courthouse, protesters have been holding signs suggesting that regardless of who wins, the public loses. It's easy to dismiss that as theater. It's harder to dismiss once you've watched two sides spend a morning arguing about existential risk while each defends its own AI empire.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

Two days before trial, Elon Musk texted OpenAI's Greg Brockman warning he and Sam Altman would become "the most hated men in America." The judge ruled it inadmissible — but the damage to Musk's narrative may already be done.

Anthropic is closing what may be its final private fundraise at a $900B valuation, surpassing OpenAI. Investors have 48 hours to commit to the ~$50B round.

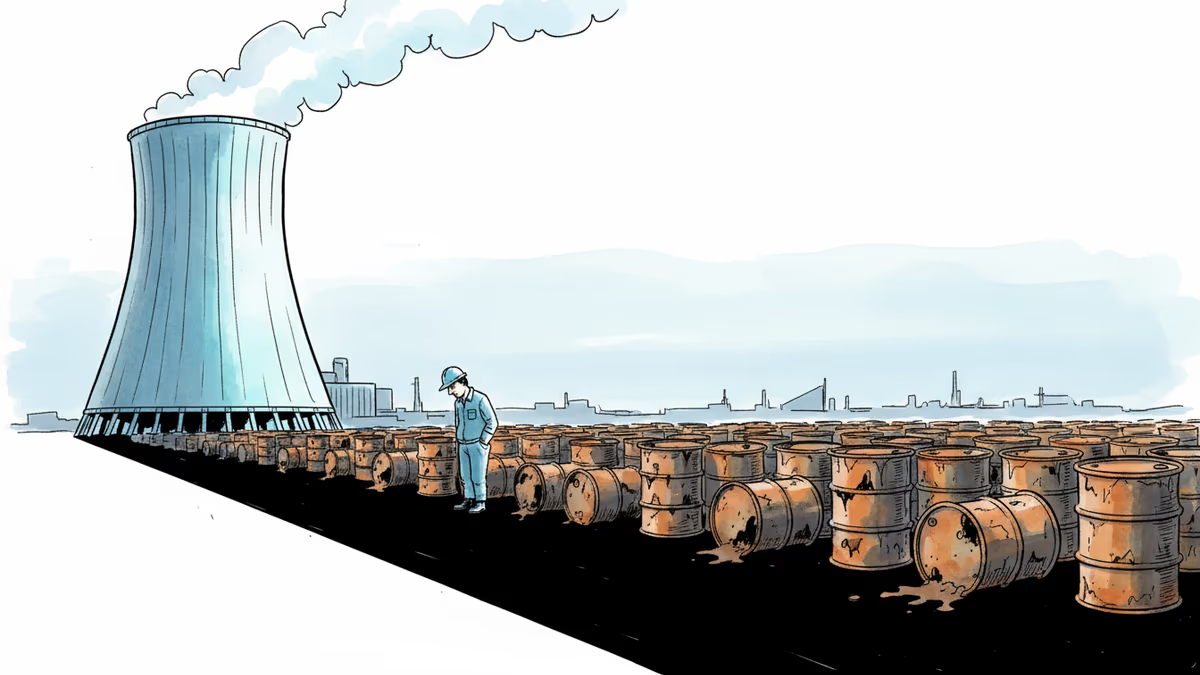

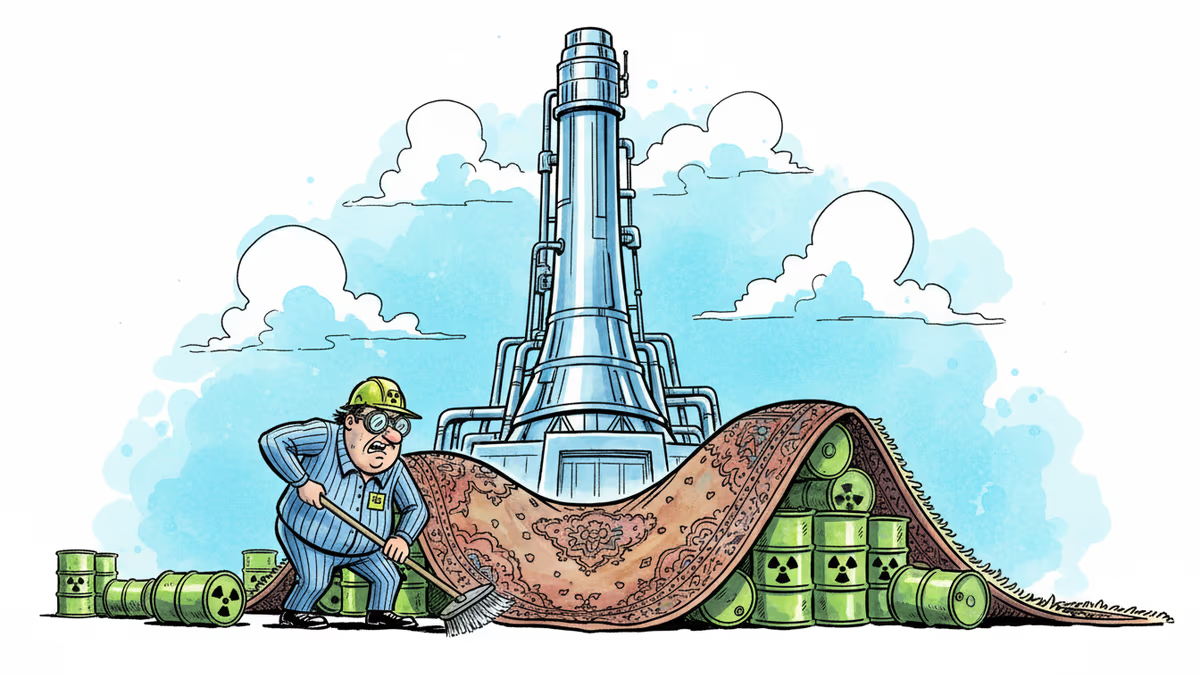

Nuclear power is winning fans across the political spectrum—and Big Tech is pouring billions in. But America still has no permanent home for the 2,000 metric tons of high-level waste its reactors produce every single year.

Nuclear energy is booming again, fueled by Big Tech's data center appetite. But 70 years of spent fuel still has nowhere permanent to go. Finland solved it. The US hasn't tried hard enough.
