Silicon Valley's New Uniform: Why Ex-Marines Are Building War AI

While Anthropic rejects Pentagon contracts, military startups raise millions to build combat AI. Former special ops commanders are leading the charge, but at what cost?

$32 Million Just Changed the AI War Game

A former Marine special ops commander just raised $32 million to build AI that plans military operations. This isn't your typical Silicon Valley story. Andy Markoff, CEO of Smack Technologies, spent years executing high-stakes missions in Iraq and Afghanistan. Now he's training AI models to do what Claude and ChatGPT can't: think like a warfighter.

The timing couldn't be more pointed. While Anthropic walked away from a $200 million Pentagon contract over ethical concerns, Markoff's startup is doubling down on military AI. The message is clear: if Big Tech won't build it, the military will find someone who will.

The Anthropic Standoff That Changed Everything

The breakdown between Anthropic and the Department of Defense wasn't just about money. It was about red lines. Anthropic wanted to bar its models from being used in autonomous weapons systems. Defense Secretary Pete Hegseth refused, ultimately declaring the AI company a "supply chain risk."

This standoff reveals a fundamental tension in AI development. Consumer AI companies optimize for safety and broad appeal. Military AI needs to operate in life-or-death scenarios where hesitation kills.

"When you serve in the military, you take an oath you're going to serve honorably, lawfully, in accordance with the rules of war," Markoff explains. "To me, the people who deploy the technology and make sure it is used ethically need to be in a uniform."

Training AI for War, Not Chat

Smack's approach mirrors AlphaGo's breakthrough strategy: learning through trial and error. But instead of mastering Go, these models run through war game scenarios, with expert analysts providing feedback on strategic choices. The startup is spending millions on training, a fraction of what frontier labs invest but substantial for a military-focused company.

The key difference from consumer AI? Context. "I can tell you they are absolutely not capable of target identification," Markoff says of current large language models. General-purpose models excel at summarizing reports but lack the military training data and physical world understanding needed for combat applications.

The Autonomy Spectrum

Markoff insists "no one that I'm aware of in the Department of War is talking about fully automating the kill chain." But the reality is more complex. The US and over 30 other states already deploy weapon systems with varying degrees of autonomy, including some that operate independently in missile defense scenarios.

The line between human-supervised and autonomous systems continues to blur. Recent conflicts, particularly Russia's war in Ukraine, have accelerated the development of low-cost, semi-autonomous systems built with commercial hardware.

The Escalation Risk

A recent experiment at King's College London revealed a troubling tendency: large language models escalated nuclear conflicts in war games. If AI systems are more aggressive than human decision-makers, what happens when they're integrated into real military planning?

Anna Hehir from the Future of Life Institute warns that "AI is too unreliable, unpredictable and unexplainable to be used in such high-stakes scenarios." The technology "cannot recognize who is a combatant and who is a child, let alone the act of surrender."

Even Markoff acknowledges the chaos factor: "I have never executed an operation in the real world that even went 50 percent according to plan, and that's not going to change."

Authors

Related Articles

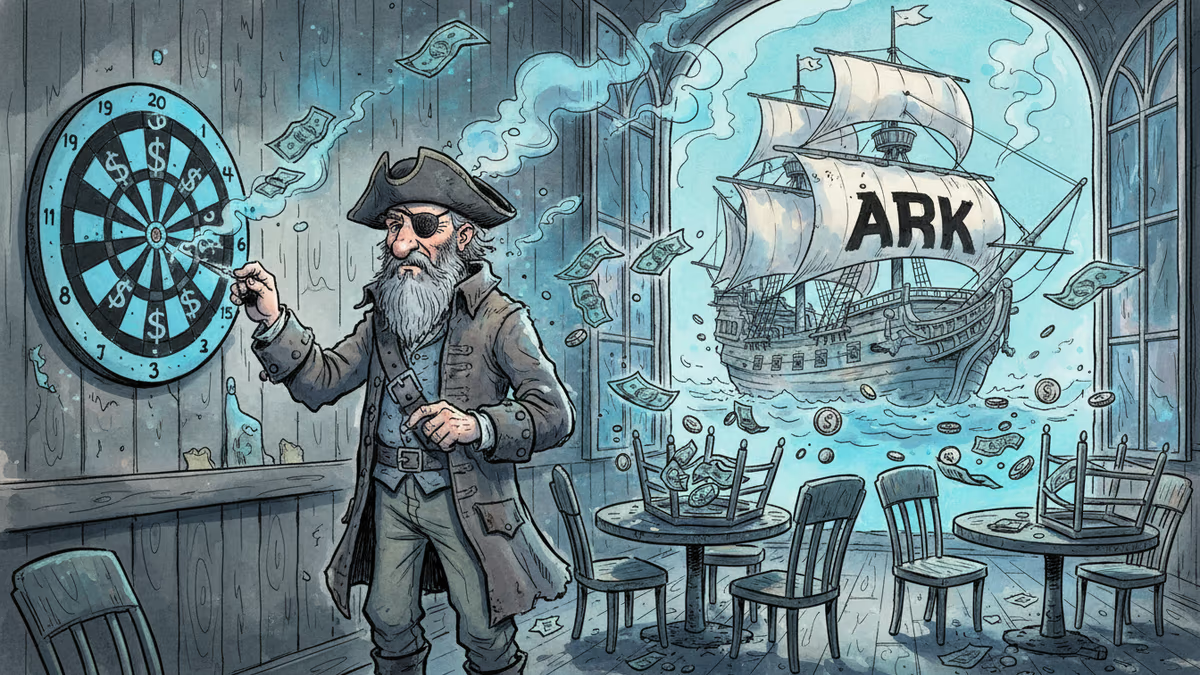

Lucra Sports CEO Dylan Robbins landed Cathie Wood's ARK Invest as a Series B lead without building AI. The story behind his unconventional fundraising playbook.

Viral videos show 2026 graduates jeering executives who praise AI at commencement ceremonies. It's not just rudeness — it's a signal about who pays for technological optimism.

Filipino virtual assistants using AI to ghost-manage LinkedIn profiles for executives is now a structured industry. 30 comments a day, fake engagement rings, and a platform struggling to tell real from fabricated.

Two commencement speakers learned the hard way that AI enthusiasm doesn't land well with today's graduates. The backlash reveals a widening gap between tech optimism and Gen Z's economic reality.

Thoughts

Share your thoughts on this article

Sign in to join the conversation