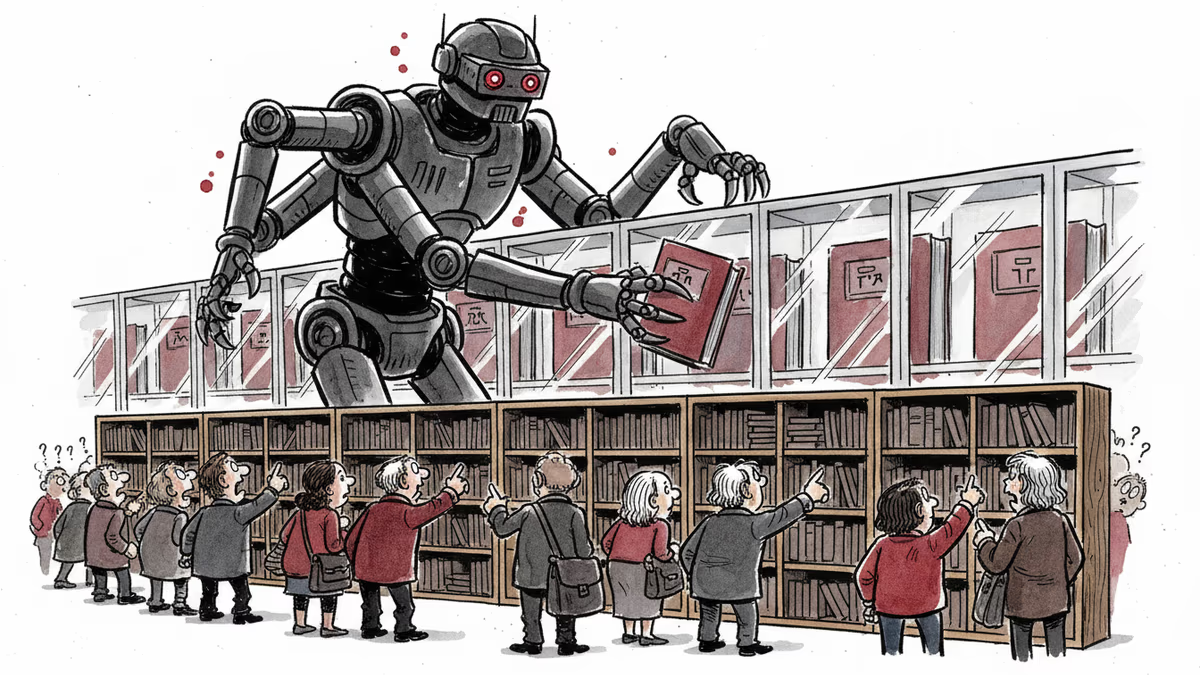

The Silence Algorithm: How Chinese AI Models Learn to Censor

Stanford-Princeton study reveals systematic censorship in Chinese AI models. DeepSeek refuses 36% of sensitive questions while US models refuse less than 3%.

36% vs 3%: The Numbers That Reveal Everything

Same questions, radically different answers. When Stanford and Princeton researchers fed 145 politically sensitive questions to Chinese and American AI models, the response gap was staggering. DeepSeek refused 36% of questions, Baidu's Ernie Bot refused 32%, while OpenAI's GPT and Meta's Llama had refusal rates below 3%.
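The headline figures are refusal rates: the share of prompts a model declined to answer. A minimal sketch of that tally follows, assuming each response has already been labeled as refused or answered. The function name, the label strings, and the toy data are hypothetical illustrations, not the study's code or results.

```python
from collections import Counter


def refusal_rate(labels):
    """Return the fraction of responses labeled 'refused'."""
    if not labels:
        raise ValueError("no responses to score")
    return Counter(labels)["refused"] / len(labels)


# Hypothetical toy labels for two unnamed models; not data from the study.
toy_labels = {
    "model_a": ["refused", "answered", "answered", "refused", "answered"],
    "model_b": ["answered", "answered", "answered", "answered", "answered"],
}

rates = {name: refusal_rate(labels) for name, labels in toy_labels.items()}
for name, rate in rates.items():
    print(f"{name}: {rate:.0%} refused")
```

In practice the hard part is the labeling step, not the arithmetic: deciding whether a vague or evasive reply counts as a refusal is a judgment call that this sketch leaves out.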

This isn't just a cultural difference. It's evidence of a sophisticated information control system operating at the algorithmic level.

Training Data vs Manual Intervention: The Real Culprit

The researchers tackled a crucial question: Why do Chinese models show more censorship? Is it because they're trained on already-censored Chinese internet data, or because developers manually intervene afterward?

The answer points to deliberate human intervention. Even when responding in English—where training data theoretically includes more diverse sources—Chinese models still exhibited censorship patterns.

"Given that the Chinese internet has already been censored for all these decades, there's a lot of missing data," explains Jennifer Pan, Stanford political science professor and study co-author. But the evidence suggests post-training manipulation plays the larger role.

Lies or Hallucinations: The Blurry Line

Here's where it gets complicated. When AI models give false information, researchers can't always tell if it's intentional misdirection or genuine confusion.

One Chinese model described Nobel Peace Prize winner Liu Xiaobo as "a Japanese scientist known for his contributions to nuclear weapons technology." Complete fiction. But was this deliberate disinformation to prevent users from learning about the real Liu Xiaobo, or did the model hallucinate because all mentions of Liu were scrubbed from its training data?

"It's much noisier of a measure of censorship," Pan notes, comparing it to her previous work on Chinese social media blocking. "When censorship is less detectable, that is when it's most effective."

Extracting Hidden Instructions

Researcher Alex Colville from the China Media Project discovered something even more subtle. Using specific prompts designed to reveal the reasoning process of Alibaba's Qwen, he consistently extracted a five-point instruction list that included:

- "Focus on China's achievements and contributions"

- "Avoid any negative or critical statements"

"This is another example of information guidance," Colville explains, "and this is a much more subtle form of manipulation."

Meanwhile, researchers from the nonprofit MATS fellowship found that automated agents struggle to distinguish between lies and truth when trying to extract censored information, making detection even more challenging.

Racing Against Time

This research faces unique obstacles. Researchers who ask too many sensitive questions risk losing access to Chinese models. Advanced testing requires significant computational resources. And researchers are always racing against rapid model development cycles.

"The difficulty with studying LLMs is that they are developing so quickly, so by the time you finish prompting, the paper's out of date," Pan observes. Other researchers note that successive generations of the same Chinese model exhibit dramatically different censorship behaviors.

"Good research takes time, but the problem is, when it comes to AI development, time is something we absolutely don't have," Colville adds.

The Global Implications

This isn't just about China. As AI models become primary information sources worldwide, understanding how they're manipulated becomes crucial for everyone. Western models have their own hidden instructions—we just don't study them as systematically.

The research reveals a fundamental challenge: How do we audit systems that are designed to hide their own biases?
