US Senate Passes DEFIANCE Act to Let Deepfake Victims Sue for Civil Damages

On Jan 13, 2026, the US Senate unanimously passed the DEFIANCE Act, allowing victims of non-consensual AI deepfakes to sue creators for civil damages. A major win for digital rights.

Justice just got a digital upgrade. The US Senate has unanimously cleared a path for victims of non-consensual AI-generated imagery to take the people who made it to court.

DEFIANCE Act: Empowering Victims Against AI Abuse

On January 13, 2026, the Senate passed the Disrupt Explicit Forged Images and Non-Consensual Edits Act (DEFIANCE Act) without a single objection. According to The Verge, this bill grants individuals who have had their likenesses deepfaked into sexually explicit content the right to sue the creators for civil damages.

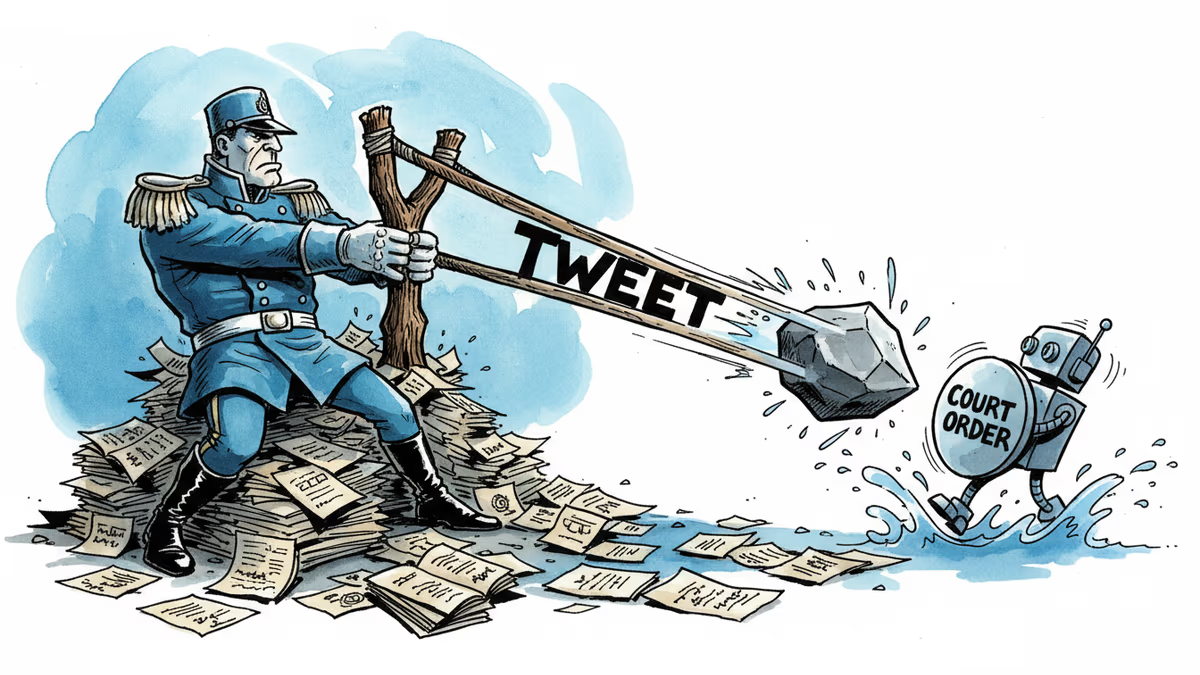

While previous legislation like the Take It Down Act focused on criminalizing distribution and mandating platform intervention, the DEFIANCE Act targets the source. It gives victims a direct legal tool to hold creators financially accountable.

A Unanimous Stand for Digital Safety

The lack of opposition in the Senate signals a major shift in how US lawmakers view AI-generated harms. By allowing civil litigation, the bill sidesteps the higher burden of proof that criminal prosecution requires, which could offer victims of digital violence a faster and more direct route to compensation.
