X Cuts Creator Cash for War Deepfakes

X targets war-related AI videos with revenue penalties. As platforms police AI content, who decides what's real in the creator economy?

$0 for 90 Days

X just hit creators where it hurts: their wallets. The platform announced Tuesday that anyone posting AI-generated videos of armed conflicts without proper disclosure will be suspended from its Creator Revenue Sharing Program for 90 days. Get caught twice? Permanent ban from monetization.

"During times of war, it is critical that people have access to authentic information on the ground," wrote Nikita Bier, X's head of product. "With today's AI technologies, it is trivial to create content that can mislead people."

The move targets a growing problem: realistic AI war footage spreading across social media, often designed to go viral and generate revenue.

The Incentive Problem

X's Creator Revenue Sharing Program splits advertising revenue with popular creators. More engagement equals more money. Critics have long argued this structure incentivizes "sensationalized content, like clickbait or other posts designed to spark outrage."

War content fits this formula perfectly. Dramatic footage from conflict zones naturally attracts attention, making AI-generated war videos a tempting revenue stream. Recent conflicts in Ukraine and Gaza have seen waves of deepfake content spreading across platforms.

X plans to identify violators through AI detection tools and its crowdsourced fact-checking system, Community Notes. But the company's track record on content moderation remains mixed.

Selective Enforcement

Here's the catch: X's new policy only covers armed conflict. Political misinformation, fake product endorsements, and other AI deception remain fair game for monetization.

This selective approach raises questions about platform responsibility. Why is war-related AI content treated differently from election deepfakes or fraudulent advertisements? The answer likely lies in public pressure and regulatory scrutiny around conflict misinformation.

YouTube, TikTok, and other platforms face similar challenges. As AI generation tools become more accessible, the line between authentic and artificial content continues to blur. Each platform is developing its own approach, creating an inconsistent patchwork of policies.

The Bigger Battle

X's move reflects a broader shift in how platforms handle AI content. Rather than blanket bans, companies are experimenting with targeted restrictions tied to specific harms or contexts.

But this approach creates new dilemmas. Who decides which topics deserve special protection? How do platforms balance free expression with information integrity? And can technical solutions keep pace with rapidly evolving AI capabilities?

The stakes extend beyond individual platforms. As AI-generated content becomes indistinguishable from reality, society's relationship with truth itself is being tested.

Related Articles

Viral videos show 2026 graduates jeering executives who praise AI at commencement ceremonies. It's not just rudeness — it's a signal about who pays for technological optimism.

Filipino virtual assistants using AI to ghost-manage LinkedIn profiles for executives is now a structured industry. 30 comments a day, fake engagement rings, and a platform struggling to tell real from fabricated.

Two commencement speakers learned the hard way that AI enthusiasm doesn't land well with today's graduates. The backlash reveals a widening gap between tech optimism and Gen Z's economic reality.

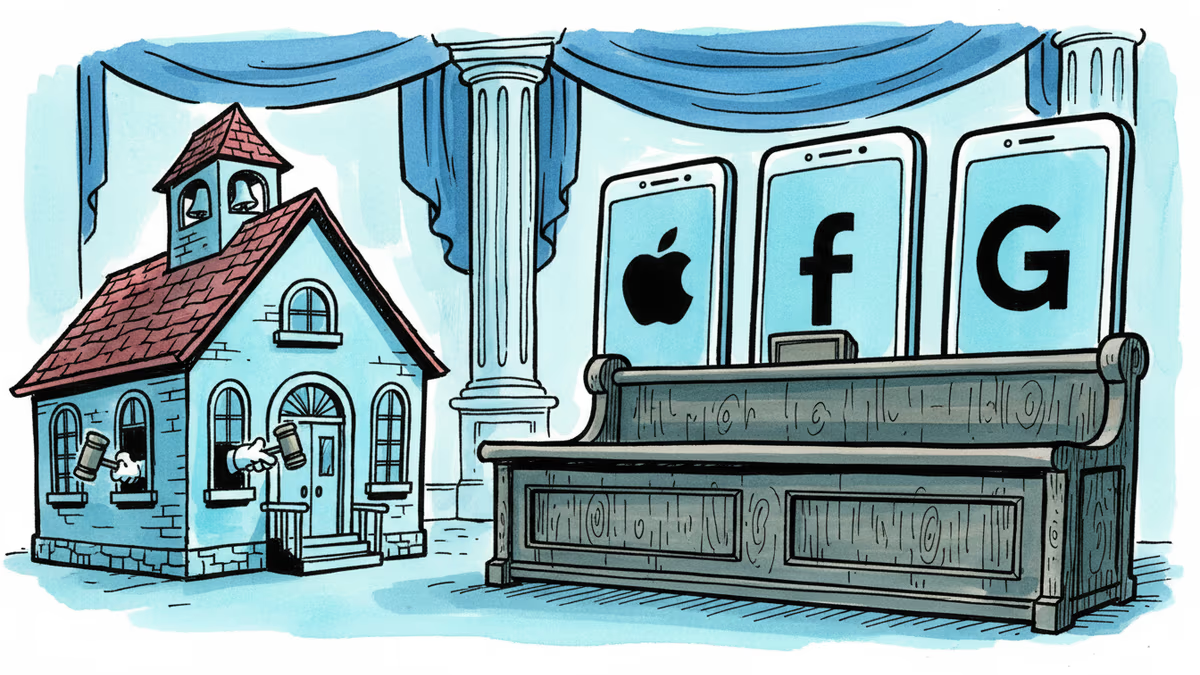

Snap, YouTube, and TikTok settled a landmark school district lawsuit over social media addiction. With 1,000+ similar cases pending, this could reshape Big Tech's liability landscape.
