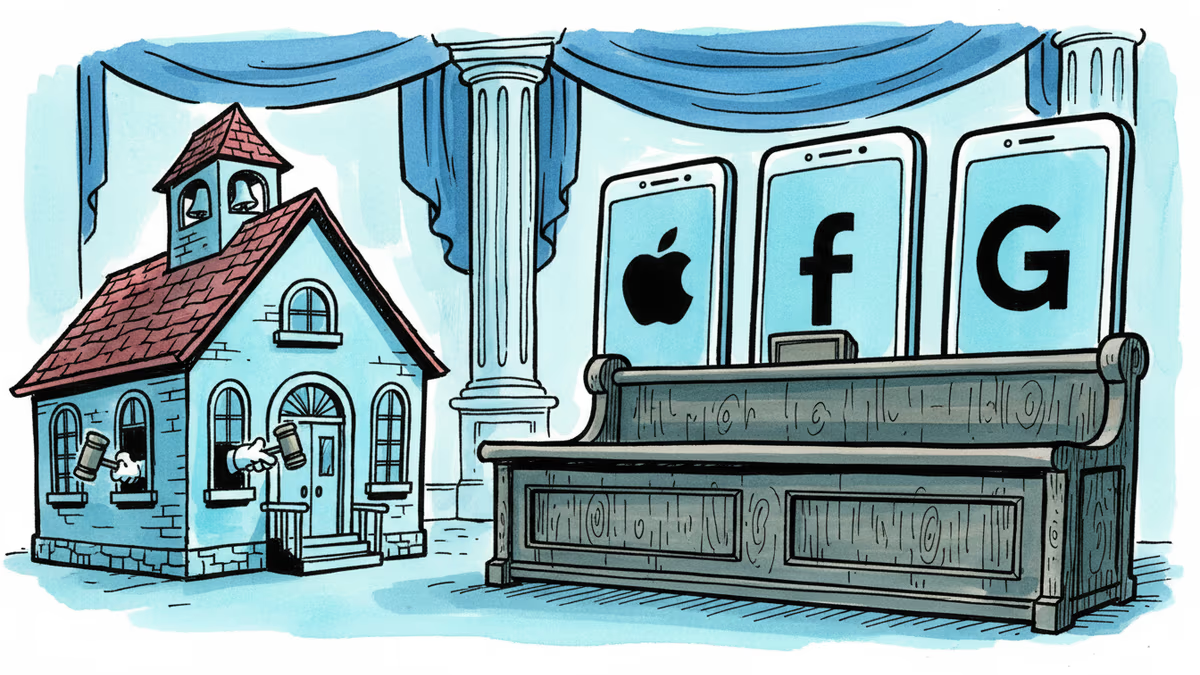

Schools Are Winning in Court Against Social Media

Snap, YouTube, and TikTok settled a landmark school district lawsuit over social media addiction. With 1,000+ similar cases pending, this could reshape Big Tech's liability landscape.

A Rural School District Just Made Big Tech Blink

Breathitt County School District — a small public school system in rural Kentucky — has done what years of parental complaints, congressional hearings, and op-eds could not: it extracted a settlement from Snap, YouTube, and TikTok over the harms of social media addiction. The dollar amount remains undisclosed. Meta has not settled and still faces trial in the same case.

What makes this matter beyond the headlines is the number sitting behind it: more than 1,000 similar lawsuits have been filed across the United States, and this case is serving as a bellwether for all of them. If the legal theory holds, namely that social media companies bear financial responsibility for the disruption and mental health costs they impose on schools, the litigation wave has only just begun.

The school district's complaint argued that social media addiction has damaged students' ability to learn, triggered a mental health crisis requiring costly intervention, and strained already-tight public school budgets. Counselor hiring, crisis response programs, and administrative burden: costs that were once invisible have now been itemized on a legal claim.

Why This Case Is Structurally Different

Individual lawsuits against social media companies have struggled for years. Proving that a specific platform caused a specific harm to a specific person is legally difficult, and damages are limited. But when a public institution is the plaintiff, the calculus changes.

School districts can aggregate harm across thousands of students. They can present budget line items — documented expenditures directly tied to managing the fallout. And critically, they carry the moral weight of defending children with public money, not seeking personal compensation.

The timing isn't accidental. Australia enacted a law banning social media for children under 16 in late 2024. The U.S. Congress has debated similar restrictions. Courts and legislatures are applying pressure simultaneously. For the companies, settling before trial carries a specific strategic logic: a trial puts internal documents into the public record. Algorithm design files, internal research on user engagement and addiction, and memos about teenage users are precisely what companies do not want disclosed.

Four Perspectives That Don't Align

For educators and school administrators, this settlement validates what they have been saying for years while no one with legal authority agreed. Teachers confiscating phones mid-lesson, counselors overwhelmed by anxiety and self-harm cases, principals managing crises that didn't exist a decade ago: none of that translated into accountability until now.

For the tech companies, a confidential settlement is the least damaging outcome available. No admission of liability, no public dollar figure, no precedent-setting verdict. But with 1,000+ cases in the queue, the question is whether settling each one individually is financially and reputationally sustainable — or whether it quietly validates the legal theory that plaintiffs are betting on.

For parents and students, the picture is more complicated. A settlement doesn't change an algorithm. Even if the money flows back into school budgets, the product that generated the harm remains on every teenager's phone. The legal action addresses the financial symptom; the design question — why these platforms are engineered to maximize time-on-screen for adolescents — remains largely untouched by litigation.

Investors in Snap, Alphabet (YouTube's parent), Meta, and ByteDance-linked entities are watching something more structural: whether social media companies are entering a liability era similar to what tobacco and opioid manufacturers experienced. Those precedents didn't just produce settlements; they reshaped product formulation, marketing restrictions, and ultimately business models.


Related Articles

New Mexico already won $375 million from Meta. Now it wants something harder to give: a court order forcing Facebook, Instagram, and WhatsApp to redesign themselves. A three-week trial starts Monday.

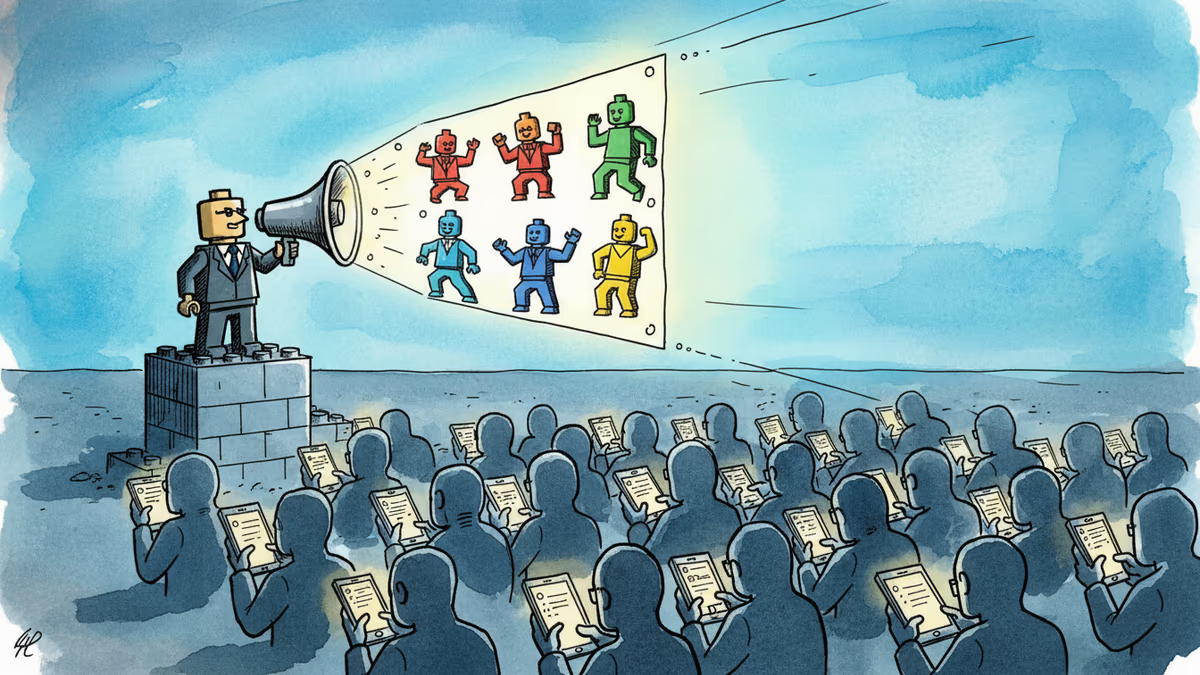

A small pro-Iran team is racking up millions of views with AI-generated Lego videos that mock Trump — and Americans are sharing them. What does that tell us about information warfare?

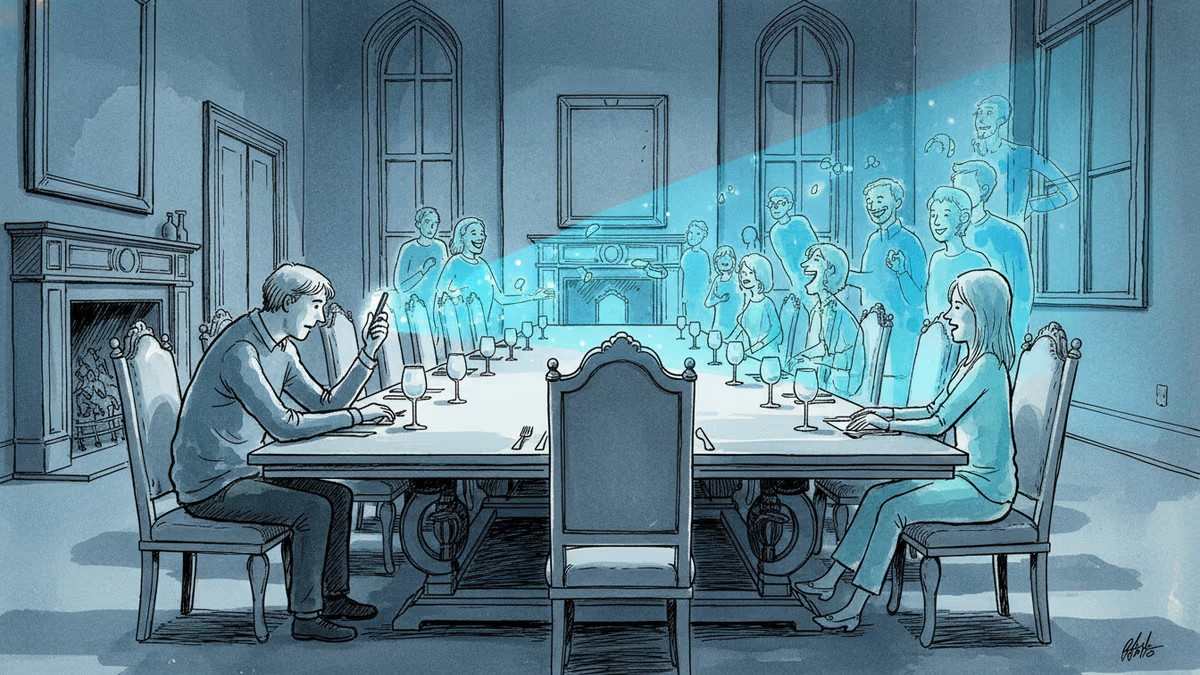

As loneliness hits crisis levels, a new wave of friendship apps is pulling in $16M and 4.3M downloads in the US alone. But can technology actually solve a human problem?

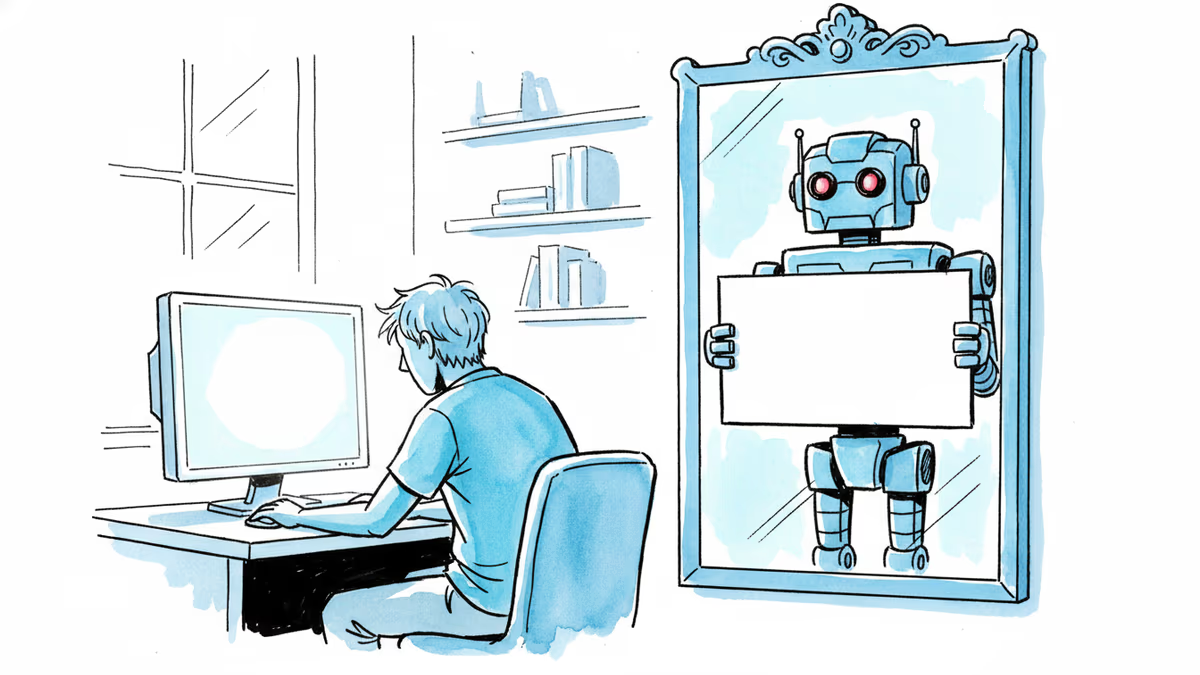

Reddit CEO Steve Huffman announced human verification for suspicious accounts. As AI bots flood the internet, one platform is drawing a line — but the line keeps moving.

Thoughts

Share your thoughts on this article

Sign in to join the conversation