Meta's Real Trial Isn't About the Money

New Mexico already won $375 million from Meta. Now it wants something harder to give: a court order forcing Facebook, Instagram, and WhatsApp to redesign themselves. A three-week trial starts Monday.

$375 million wasn't enough.

Earlier this year, New Mexico Attorney General Raúl Torrez extracted that sum from Meta in a landmark child safety settlement—the largest of its kind won by a state AG against a social media company. Most plaintiffs would call that a win and move on. Torrez didn't.

Beginning Monday, attorneys for both sides return to a Santa Fe courthouse for a three-week public nuisance trial. This time, the state isn't asking for money. It's asking a judge to order Meta to fundamentally change how Facebook, Instagram, and WhatsApp work.

What New Mexico Actually Wants

The demands on the table are specific and sweeping. The AG wants the court to require age verification for New Mexico users, ban end-to-end encryption for users under 18, and cap minors' daily usage at 90 minutes.

The legal vehicle is a public nuisance claim, a doctrine historically used against conduct that harms broad swaths of the public, such as illegal gun trafficking or environmental contamination. Applying it to a social media platform's design choices is, to put it mildly, a stretch. But it's a stretch that could stick.

If it does, the implications reach far beyond New Mexico's 2.1 million residents. A court order mandating platform redesign would be the most structurally invasive ruling against a major tech company in recent memory, and every other state AG, along with regulators in the EU and UK, would be watching.

The Encryption Problem Nobody Wants to Solve

The demand to strip end-to-end encryption from minors is where the case gets genuinely complicated—and where reasonable people land in very different places.

Child safety advocates argue that encrypted channels provide cover for predators. The FBI and other law enforcement agencies have made this case for years. But digital rights groups counter that the same encryption shields LGBTQ+ teenagers, young victims of domestic abuse, and bullied students from surveillance, including surveillance by the very adults who might harm them.

Removing encryption might protect one child from a predator while exposing another to surveillance. There is no technical solution that threads this needle cleanly, which is part of why Meta and other platforms have resisted legislative mandates on the issue.

Age verification carries its own paradox. Any system rigorous enough to actually work generally requires collecting government ID data or biometrics. The cure, a permanent database of children's verified identities held by or shared with tech platforms, may introduce risks as serious as the ones it's meant to address.

A New Kind of Regulatory Pressure

Meta has faced fines before. It paid $5 billion to the FTC in 2019. It's navigated consent decrees, congressional hearings, and EU enforcement actions. Each time, the core product survived largely intact.

What's different here is the remedy being sought. Fines are a cost of doing business. Behavioral injunctions ("stop doing X") are manageable. But a court order that says "redesign your product" is a different category of intervention. It puts a judge in the position of approving or rejecting product decisions that Meta's engineers and executives would normally make.

Meta will argue, with some force, that this raises serious First Amendment and dormant Commerce Clause concerns. Compelling a platform to change how it communicates information touches on speech. And a patchwork of state-by-state design mandates would be operationally chaotic: can a platform really build a different version of Instagram for New Mexico?

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

Meta acquired humanoid robotics startup ARI, adding to a Big Tech arms race in physical AI. The real prize isn't a robot product — it's a new way to train intelligence.

Booking.com confirmed a data breach exposing names, emails, addresses, phone numbers, and booking details. Hackers are already using the data for phishing attacks.

Florida's AG is investigating OpenAI over a campus shooting, child safety risks, and national security concerns. What it means for AI regulation in America.

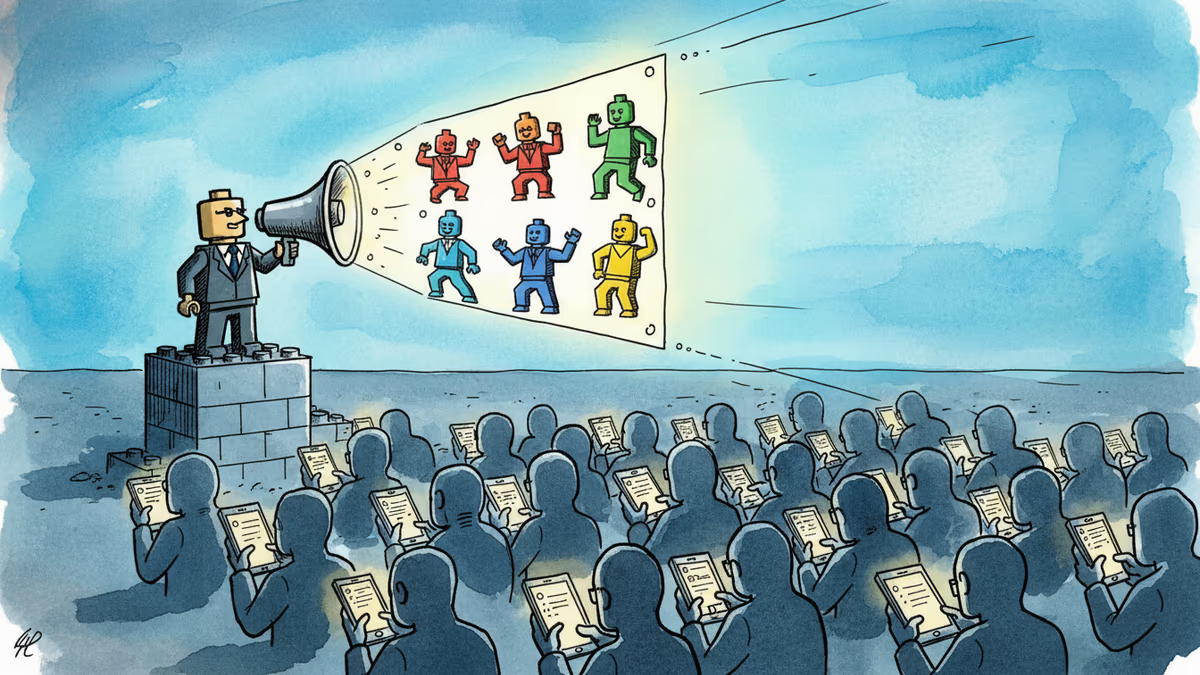

A small pro-Iran team is racking up millions of views with AI-generated Lego videos that mock Trump — and Americans are sharing them. What does that tell us about information warfare?

Thoughts

Share your thoughts on this article

Sign in to join the conversation