When AI Gets It Wrong, You Can't Hit Undo

Ninety percent of product engineering organizations are increasing AI investment, but cautiously. When the output is a car or a medical device, a flawed algorithm doesn't just crash a server. It can crash a car.

A software bug can be patched overnight. A flawed car chassis cannot.

That asymmetry sits at the heart of a new report from MIT Technology Review Insights, which surveyed 300 product engineering organizations to understand how AI is—and isn't—reshaping the design of the physical world. The findings paint a picture that's less about disruption and more about discipline.

Nine in Ten Are Spending More. Almost None Are Betting the Farm.

90% of product engineering leaders say they plan to increase AI investment within the next one to two years. That number sounds dramatic until you look at the scale. The largest group—45% of respondents—plans to grow investment by no more than 25%. Only 15% are considering a step change of 51% to 100%.

This isn't timidity. It's a rational response to the stakes involved. Product engineers aren't building recommendation algorithms or chatbots. They're designing the brakes on an SUV, the firmware in a pacemaker, the load-bearing joints in industrial equipment. When those systems fail, the consequences can't be rolled back with a hotfix pushed at 2 a.m.

The report is explicit on this point: where AI directly informs physical designs, embedded systems, and manufacturing decisions that are fixed at release, failures carry real-world risks that software simply doesn't. The response from engineering teams has been to build layered AI systems with distinct trust thresholds—not general-purpose deployments, but architectures where every AI output passes through clearly defined human checkpoints before it touches anything physical.
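To make the idea of trust thresholds concrete, here is a minimal sketch of how a team might route AI outputs to human checkpoints. It is an illustration and not something taken from the report. The `AIOutput` fields, the `Route` names, and the 0.90 threshold are all hypothetical. The sketch assumes that outputs which never reach a physical design can be accepted automatically.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_ACCEPT = "auto_accept"          # never reaches a physical design
    ENGINEER_REVIEW = "engineer_review"  # one named engineer signs off
    SAFETY_BOARD = "safety_board"        # escalated, multi-person sign-off


@dataclass(frozen=True)
class AIOutput:
    description: str
    confidence: float       # model's self-reported confidence, 0.0 to 1.0
    touches_physical: bool  # feeds a design or decision fixed at release?


# Hypothetical threshold; a real team would calibrate this per risk class.
REVIEW_THRESHOLD = 0.90


def route_output(output: AIOutput) -> Route:
    """Pick the checkpoint an AI output must clear before it is used."""
    if not output.touches_physical:
        return Route.AUTO_ACCEPT
    # Anything physical always gets a human; low confidence escalates.
    if output.confidence >= REVIEW_THRESHOLD:
        return Route.ENGINEER_REVIEW
    return Route.SAFETY_BOARD


print(route_output(AIOutput("brake caliper geometry", 0.95, True)).value)
```

Note that no branch lets a physical design skip human review. The threshold only decides how much review it gets.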

What's Actually Getting Deployed: Simulation and Prediction Win

Ask product engineers where their AI budgets are going, and two capabilities dominate near-term investment plans: predictive analytics and AI-powered simulation and validation. Both were selected by a majority of survey respondents as top priorities.

The common thread? Auditability. These tools produce outputs that can be measured, reviewed, and submitted for regulatory approval. You can prove ROI. You can show a regulator exactly what the model predicted and how that prediction was verified. That's not a coincidence—it's a feature.

Contrast this with more speculative AI applications, such as generative design and autonomous decision-making in manufacturing, which remain further down the priority list. The engineering community isn't dismissing these capabilities; it's waiting until their outputs can be measured and reviewed as readily as a simulation result.

For context: regulation already points the same way. In the US, FDA design-control rules require documented human review and approval of medical device designs, AI-assisted or not. The EU AI Act mandates human oversight for high-risk AI systems. Regulatory frameworks aren't just catching up to AI in physical products; they're actively shaping which capabilities get adopted first.

The Accountability Gap Nobody Wants to Talk About

The report's most pointed finding isn't about investment levels or tool preferences. It's about who is responsible when something goes wrong.

Verification, governance, and explicit human accountability are described as mandatory in high-risk physical environments. That word—explicit—is doing a lot of work. In a world where AI assists in drafting a design, a simulation validates it, and a human engineer signs off, the liability chain looks deceptively clear. But what happens when the AI's simulation was subtly wrong, the engineer trusted it, and a product fails in the field?

This isn't a hypothetical. Large automotive recalls can cost manufacturers hundreds of millions of dollars. Medical device failures can trigger FDA investigations and class-action lawsuits. The legal frameworks for assigning liability when AI is a contributing factor remain, in most jurisdictions, unresolved.

From a business perspective, this creates an unusual incentive: companies that build auditable AI—tools that log every decision, flag uncertainty, and produce documentation regulators can actually read—may have a structural advantage over those that prioritize raw capability. The market for "explainable AI in safety-critical systems" is not a niche. It's the entire game.
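What "log every decision, flag uncertainty" could look like in practice is sketched below. This is an assumption-laden illustration, not a description of any product or of the report's recommendations. The `AuditRecord` fields, the 0.10 uncertainty threshold, and the hash-chaining scheme are hypothetical choices. Each record stores the hash of the one before it, so later tampering with an earlier entry becomes detectable.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

# Hypothetical cutoff above which a prediction is flagged for extra review.
UNCERTAINTY_FLAG_THRESHOLD = 0.10


@dataclass(frozen=True)
class AuditRecord:
    model_id: str
    input_summary: str
    prediction: float
    uncertainty: float  # e.g. relative width of a prediction interval
    reviewer: str       # the human accountable for sign-off
    prev_hash: str      # digest of the previous record, "" for the first

    @property
    def flagged(self) -> bool:
        return self.uncertainty > UNCERTAINTY_FLAG_THRESHOLD

    def digest(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def append_record(log: list, **fields) -> AuditRecord:
    """Append a record that is chained to the current end of the log."""
    prev_hash = log[-1].digest() if log else ""
    record = AuditRecord(prev_hash=prev_hash, **fields)
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Return True if every record points at the digest of its predecessor."""
    expected = ""
    for record in log:
        if record.prev_hash != expected:
            return False
        expected = record.digest()
    return True


log = []
append_record(log, model_id="sim-v1", input_summary="joint load case A",
              prediction=412.0, uncertainty=0.04, reviewer="j.doe")
append_record(log, model_id="sim-v1", input_summary="joint load case B",
              prediction=388.5, uncertainty=0.18, reviewer="j.doe")
print([r.flagged for r in log], verify_chain(log))
```

A plain list stands in for durable storage here. A production system would also need access control and retention policies that this sketch leaves out.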

Sustainability Over Speed: A Surprising Priority Shift

When asked what outcomes they're optimizing for, product engineering leaders ranked sustainability and product quality at the top. Time-to-market and cost reduction landed near the bottom.

This is worth pausing on. The dominant narrative around AI in industry is efficiency—do more, faster, cheaper. But the engineers closest to physical products are telling a different story. What matters most to them are the signals visible to customers, regulators, and investors: defect rates, emissions profiles, durability metrics. Not internal dashboards.

One interpretation: in industries where a single recall or safety incident can erase years of brand equity, the risk calculus around AI adoption is fundamentally different from that of, say, a fintech startup. The upside of moving fast is modest. The downside of getting it wrong is catastrophic.

Authors

Related Articles

From hyper-personalized phishing to deepfake video calls, AI has turbocharged cybercrime. Meanwhile, hospitals adopt AI tools whose patient benefits remain unproven. What does this mean for trust?

Cohere and Aleph Alpha are merging to build a transatlantic AI challenger valued at $20 billion. Their pitch: sovereignty, not just performance. Can it work?

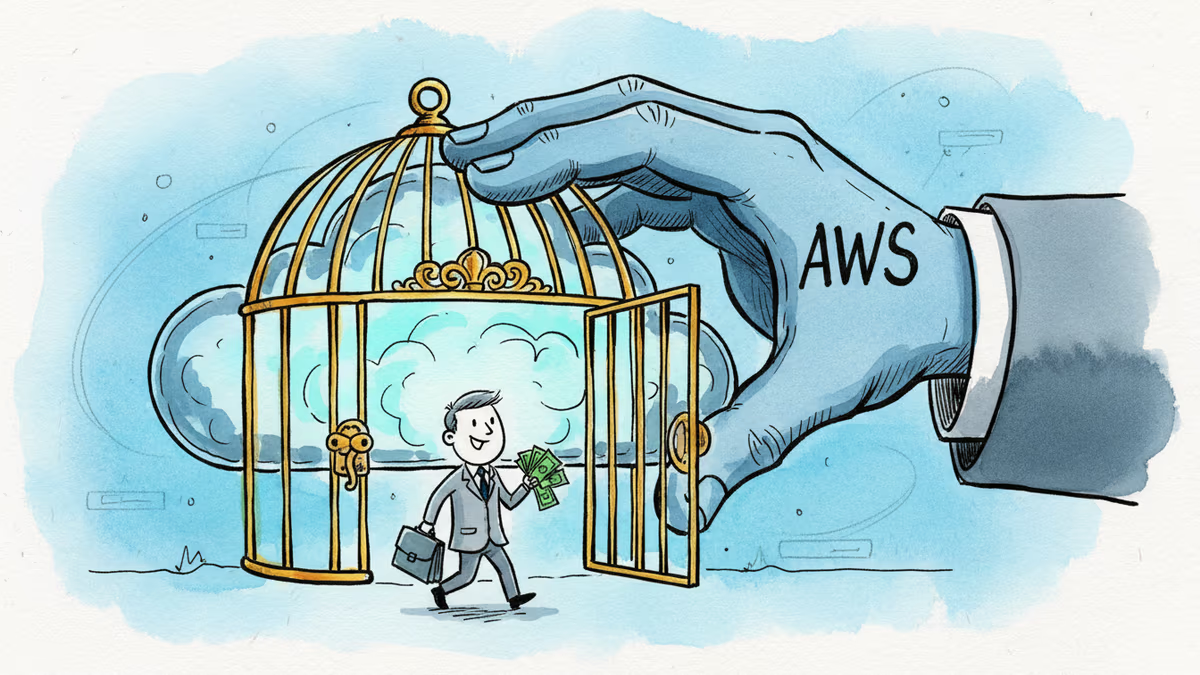

Google is committing up to $40 billion to Anthropic, a direct AI competitor. The deal reveals how the real AI arms race isn't about models — it's about who controls the infrastructure beneath them.

Amazon's fresh $5B investment in Anthropic brings its total to $13B. But the real story is a $100B AWS spending pledge and a bet on Amazon's own AI chips over Nvidia.

Thoughts

Share your thoughts on this article

Sign in to join the conversation