When the Pentagon Labels Its Own AI Company a 'Supply Chain Risk'

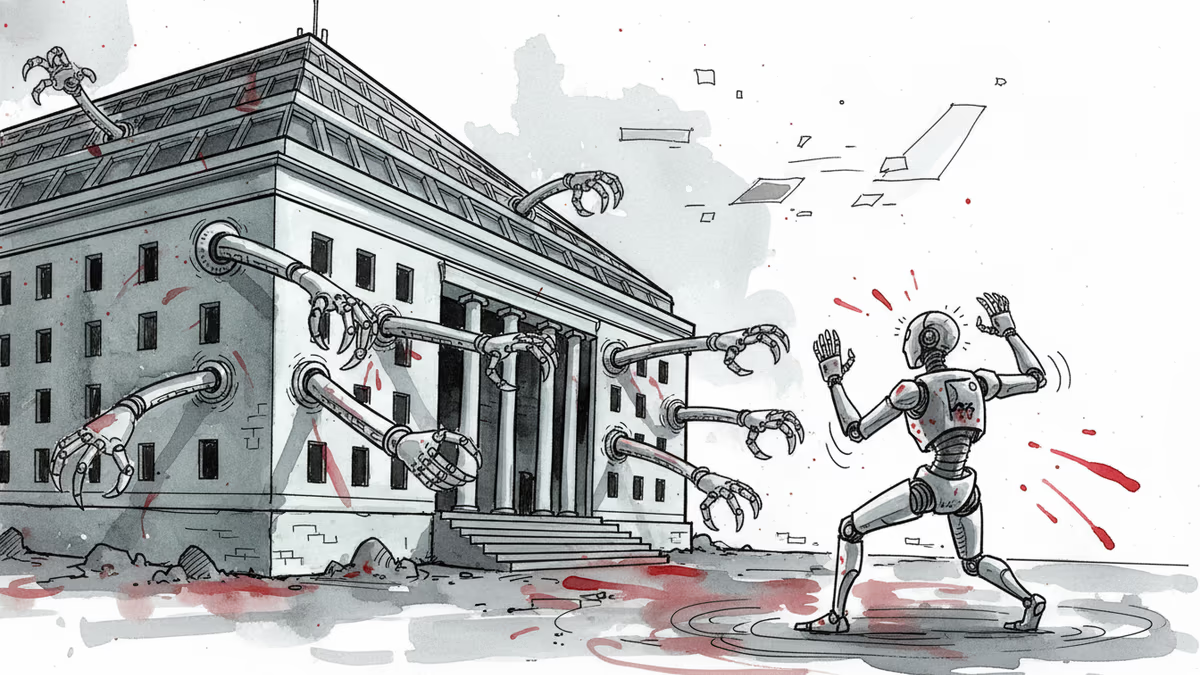

Defense Department designates Anthropic as supply chain risk over Claude usage policies. First time a US AI company faces this classification typically reserved for foreign adversaries.

The Pentagon just slapped a "supply chain risk" label on Anthropic, an American AI company. This designation is typically reserved for Chinese or Russian firms with ties to adversarial governments. It's the first time a US AI company has received this treatment.

Weeks of Failed Diplomacy

The conflict centers on Claude, Anthropic's AI model, and the company's acceptable use policies, which restrict military applications. The Defense Department wanted to use Claude for military purposes, but Anthropic refused, citing its ethical guidelines.

According to The Wall Street Journal, negotiations dragged on for weeks without resolution. The Pentagon issued public ultimatums and threatened lawsuits. When diplomacy failed, it pulled the trigger on March 2nd with the formal risk designation.

The practical impact is severe: defense contractors can no longer use Claude-powered products in government work. It's essentially a ban that kicks Anthropic out of the lucrative defense market.

Silicon Valley's Split Reaction

The tech industry's response reveals deep fractures. Many executives are rallying behind Anthropic, arguing the government has overstepped by treating a domestic company like a foreign adversary.

"This sets a dangerous precedent," said one venture capitalist who requested anonymity. "If the government can weaponize supply chain designations against companies that won't compromise their values, where does it end?"

Defense contractors see it differently. They argue that national security trumps corporate ethics, especially as the US races against China in AI development. "We can't afford to have our own companies handicapping us," one defense industry executive told reporters.

Anthropic hasn't issued a public statement yet, but industry insiders expect a court battle.

The Bigger Stakes

This confrontation reflects a broader tension in American AI policy. The government wants to maintain technological superiority while tech companies increasingly assert their right to set ethical boundaries on their products.

The timing is particularly sensitive. With $50 billion in AI-related defense contracts expected over the next five years, the stakes couldn't be higher. Other AI companies are watching closely, knowing they could face similar pressure.

Meanwhile, competitors like OpenAI and Google may benefit from Anthropic's exclusion from defense work, potentially gaining market share in government contracts.

International Implications

Allies are taking notes too. If the US government can override a company's usage policies through regulatory pressure, what does that mean for international AI governance? European regulators, already skeptical of American tech dominance, may see this as validation of their more restrictive approach.

The move also sends mixed signals about American values in technology. While the US criticizes authoritarian governments for controlling their tech companies, this case suggests the line between national security and corporate autonomy isn't as clear as previously thought.
