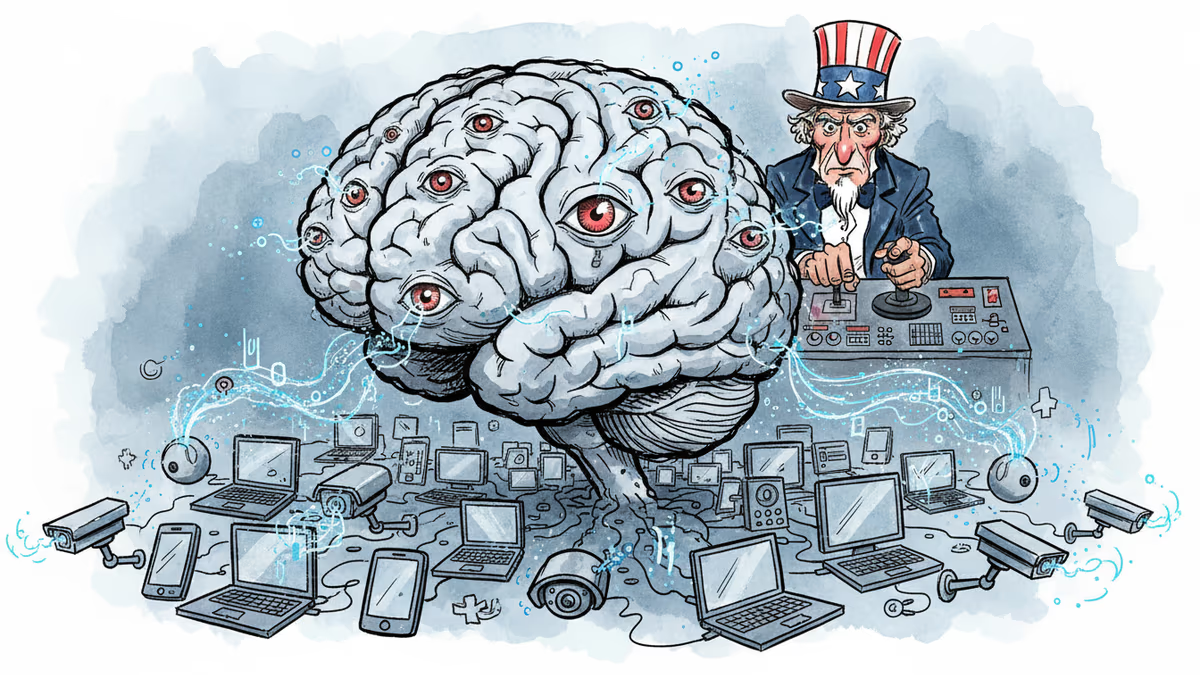

Can the US Government Use AI to Spy on Americans?

The Pentagon's clash with AI companies exposes a legal gray area around mass surveillance. OpenAI's and Anthropic's opposing choices reveal deeper questions about privacy in the AI age.

A Contract Rewritten in 48 Hours

OpenAI just rewrote its Pentagon deal. Last week, the company agreed to let the military use ChatGPT for "all lawful purposes." This week, after users deleted the app en masse and protesters chalked "What are your redlines?" outside OpenAI's San Francisco headquarters, the company added explicit language: no domestic surveillance.

But this corporate drama exposed a deeper question that most Americans probably assume has a clear answer: Can the US government legally use AI to conduct mass surveillance on its own citizens?

Surprisingly, the answer isn't straightforward.

What Counts as Surveillance?

"A lot of stuff that normal people would consider a search or surveillance... is not actually considered a search or surveillance by the law," explains Alan Rozenshtein, a law professor at the University of Minnesota.

Right now, the US government can collect—without a warrant—massive amounts of information about Americans:

- Public information like social media posts and surveillance camera footage

- Data on Americans "incidentally" collected while surveilling foreign nationals

- Commercial data purchased from companies, including sensitive location and browsing records

That third category is the kicker. Agencies from ICE to the NSA are increasingly buying personal data from commercial brokers—information that might require a warrant if collected directly.

"There's a huge amount of information that the government can collect on Americans that is not itself regulated either by the Constitution... or statute," says Rozenshtein. The Fourth Amendment was written when collecting information meant entering someone's home, not analyzing their digital exhaust.

AI Changes Everything

Here's where AI changes what that data can reveal. Traditional surveillance collected individual pieces of information. AI can aggregate thousands of data points to build detailed profiles and spot patterns at massive scale.

Consider this: Your credit card transactions, phone location data, and web browsing history might each seem innocuous. But feed them to an AI system, and it can infer your political beliefs, health conditions, and personal relationships. All from "lawfully" collected data.

"What AI can do is take a lot of information, none of which is by itself sensitive... and give the government a lot of powers that the government didn't have before," explains Rozenshtein.

The Great AI Divide

Faced with Pentagon requests to analyze bulk commercial data, AI companies chose different paths:

Anthropic refused to let its Claude AI be used for mass domestic surveillance. CEO Dario Amodei argued that "such surveillance is currently legal only because the law has not yet caught up with the rapidly growing capabilities of AI." The Pentagon's response? It designated Anthropic a "supply chain risk"—a label typically reserved for foreign threats like Chinese companies.

OpenAI initially agreed to "all lawful purposes," then backtracked after public backlash. CEO Sam Altman claimed existing law already prohibits domestic surveillance by the Defense Department, so the contract just needed to reference it.

But legal experts aren't convinced the contract changes much. "OpenAI can say whatever it wants in its agreement... but the Pentagon's gonna use the tech for what it perceives to be lawful," says Jessica Tillipman, a law professor at George Washington University.

The Technical Band-Aid

OpenAI promises technical safeguards: a "safety stack" to monitor and block prohibited uses, plus company employees embedded with the Pentagon. But how would a safety stack actually constrain military use of AI? And what happens when there's disagreement about what's legal?

More fundamentally, should private companies have the power to pull the plug on government technology during operations? "You wouldn't want the US military to ever be in a situation where they legitimately needed to take actions to protect this country's national security, and you had a private company turn off technology," notes former Pentagon intelligence officer Loren Voss.

Beyond Corporate Contracts

The real issue isn't what OpenAI writes in its contracts—it's that we're regulating AI surveillance through corporate negotiations instead of democratic legislation.

Senator Ron Wyden is pushing for bipartisan support of bills like the Fourth Amendment Is Not For Sale Act, which would restrict government purchases of commercial data. "Creating AI profiles of Americans based on that data represents a chilling expansion of mass surveillance that should not be allowed," he said.

But such legislation has languished since 2021. Meanwhile, AI capabilities advance every month.
