Prove You're Human — With Your Eyeball

Tinder now rewards users who scan their irises at a World orb with free in-app boosts. As AI agents flood dating apps, 'being human' is becoming a verified status — and a business model.

At some point, swiping right wasn't enough. Now you have to show up in person — and let a machine scan your eyes.

Tinder has announced it's expanding its partnership with World, the identity verification company co-founded by OpenAI CEO Sam Altman, to "select markets including Japan and the United States." The deal: users who physically visit a World orb, submit to a facial and iris scan, and pass verification will receive five free boosts inside the app. Boosts are a paid feature that temporarily elevates a user's profile in the discovery queue, the kind of perk that usually costs real money.

What the Orb Actually Does

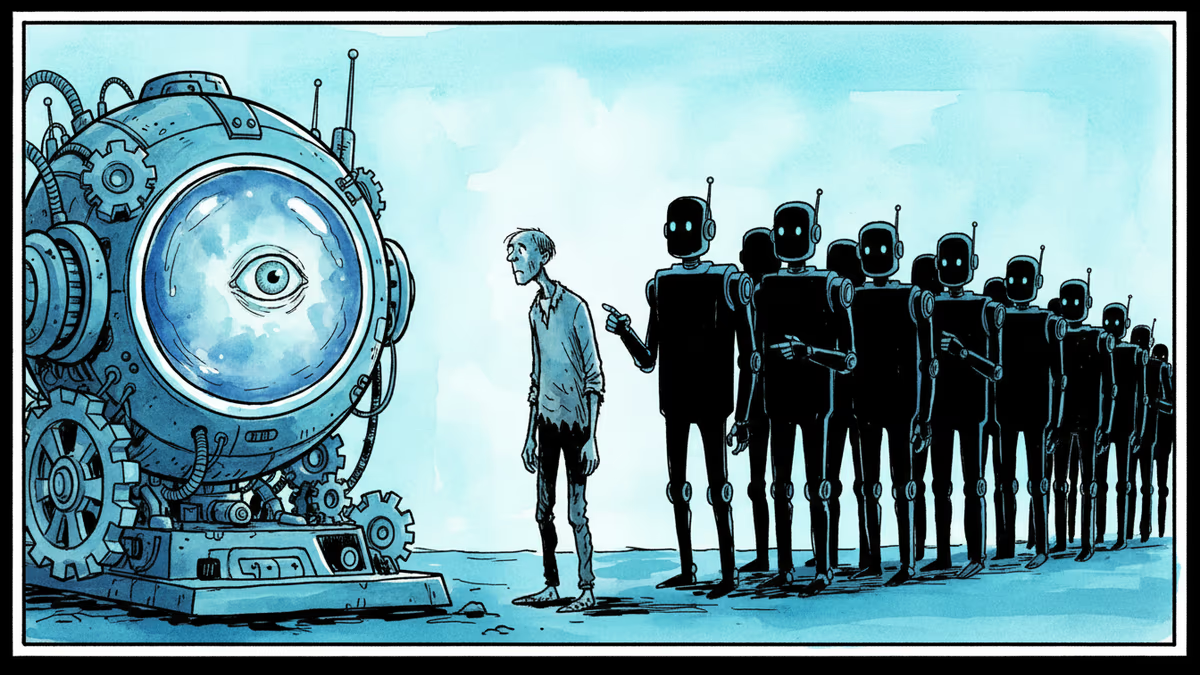

The process isn't seamless. You can't verify from your couch. You have to find a World orb — a physical device installed at select locations — stand in front of it, and let it photograph your face and eyes. World says the data is encrypted and stored securely. The point is to confirm that the account is operated by a real, living human being — not a bot, not an AI agent, not a script running on a server farm.

World first piloted this with Tinder in Japan last year. The expansion to the US suggests that the pilot produced results worth scaling. Beyond Tinder, World has been steadily signing up other platforms, building what amounts to a private registry of verified humans.

The reason this exists is straightforward: generative AI has made it genuinely difficult to tell humans from machines in text, voice, and increasingly video. Dating apps are acutely exposed to this problem. The entire value proposition of a platform like Tinder rests on the assumption that there's a real person on the other side of the screen.

The Altman Paradox

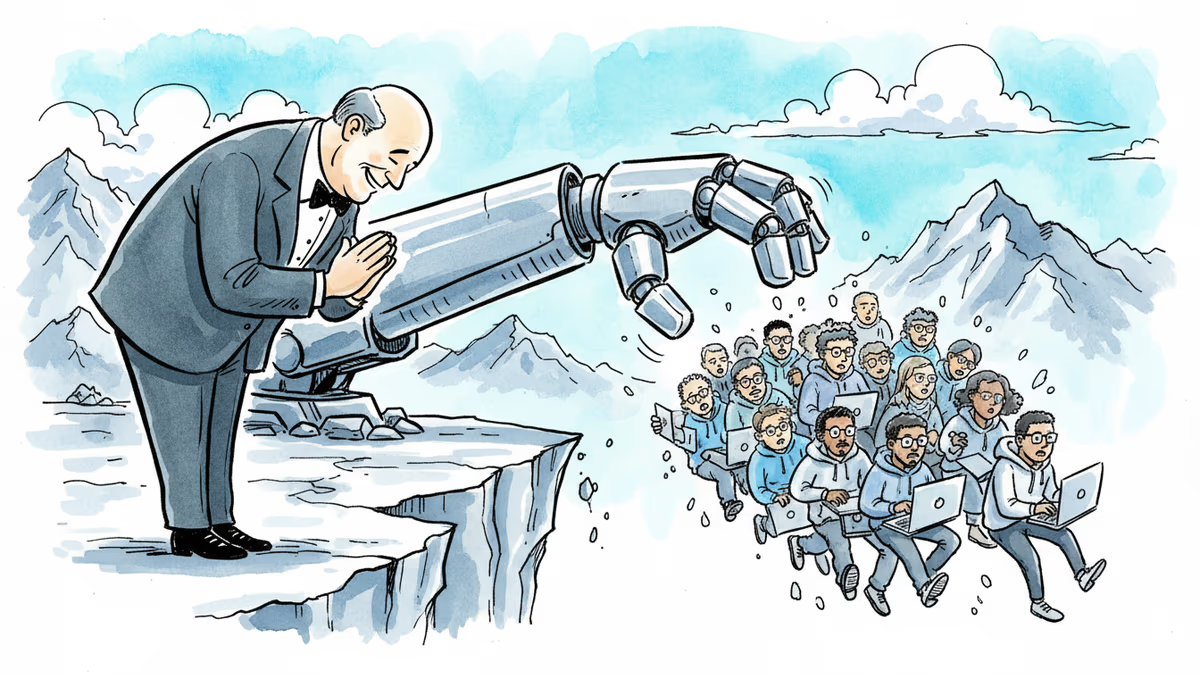

Here's where it gets philosophically interesting. Sam Altman runs OpenAI, one of the most aggressive developers of the AI systems that make human impersonation possible. He also co-founded World, which profits from proving you're not one of those AI systems. The more convincing AI becomes, the more valuable World's verification infrastructure gets.

This isn't necessarily cynical — someone has to build the authentication layer for the AI age. But it does raise a pointed question about who benefits from the problem remaining unsolved.

For Tinder, the calculus is clear. Offering five free boosts is a low-cost acquisition tool that also functions as a trust signal: this platform is working to keep it real. For users willing to make the trade, it's a reasonable deal. For users who aren't — and there will be many — the friction is the point. The orb weeds out the uncommitted.

Privacy: The Part That Doesn't Go Away

Iris data isn't a password. You can reset a password. You cannot reset your eyes. If biometric data from World's orbs were ever compromised, the damage would be permanent for every affected user.

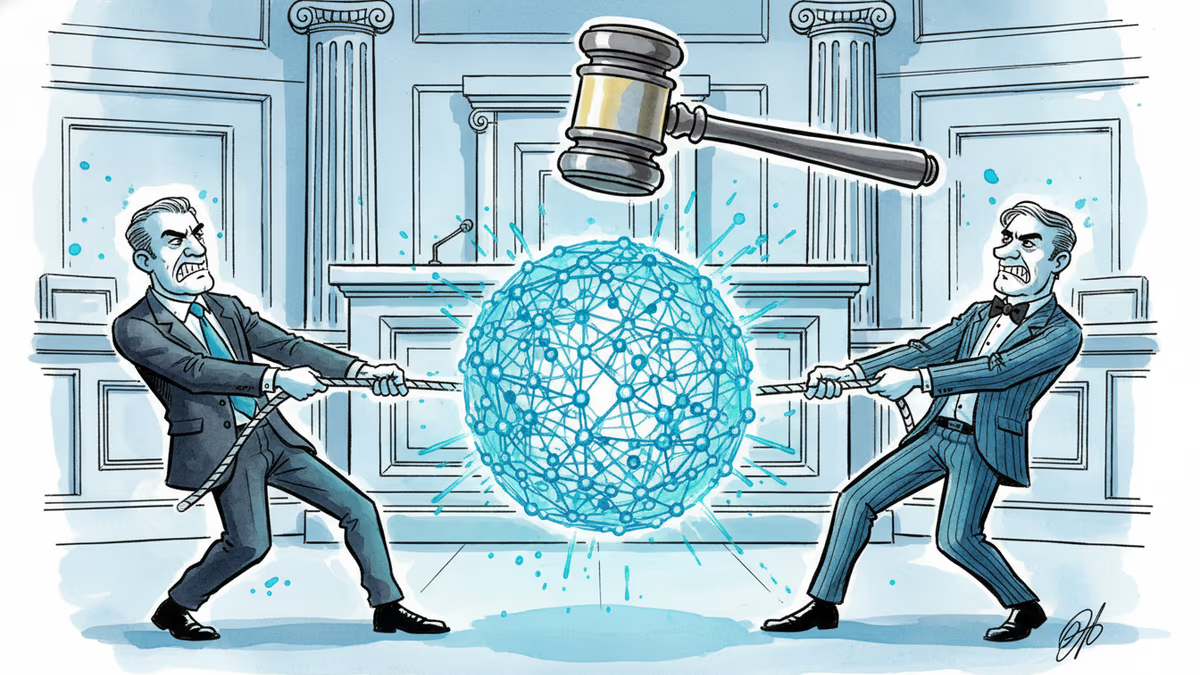

World maintains that data is encrypted and that it doesn't sell biometrics to third parties. But privacy advocates have been skeptical since the company's earliest orb deployments, and regulators in multiple jurisdictions have taken notice. In several European countries, World has already faced restrictions or investigations under GDPR. The US expansion will likely draw fresh scrutiny, particularly as state-level biometric privacy laws — Illinois' BIPA being the most prominent — set increasingly strict standards for how companies can collect and store this kind of data.

For crypto and Web3 enthusiasts, World has a different pitch: the verification generates a "World ID," a privacy-preserving credential stored on a blockchain, giving users a portable proof-of-humanity that doesn't require sharing personal details with every platform. Whether that framing holds up under regulatory pressure remains an open question.

Who's Watching the Orb Keepers?

Beyond Tinder, the broader pattern matters. World is positioning itself as foundational infrastructure for the post-AI internet — the layer that answers "is this a human?" across social media, finance, healthcare, and beyond. If that vision succeeds, a single private company would effectively control the global registry of verified human identities.

Governments issue passports. Credit bureaus issue credit scores. World wants to issue proof of humanity. The difference is accountability. Passports come with legal frameworks, appeals processes, and democratic oversight. A private orb network comes with a terms of service.
