Thank You. We No Longer Need You.

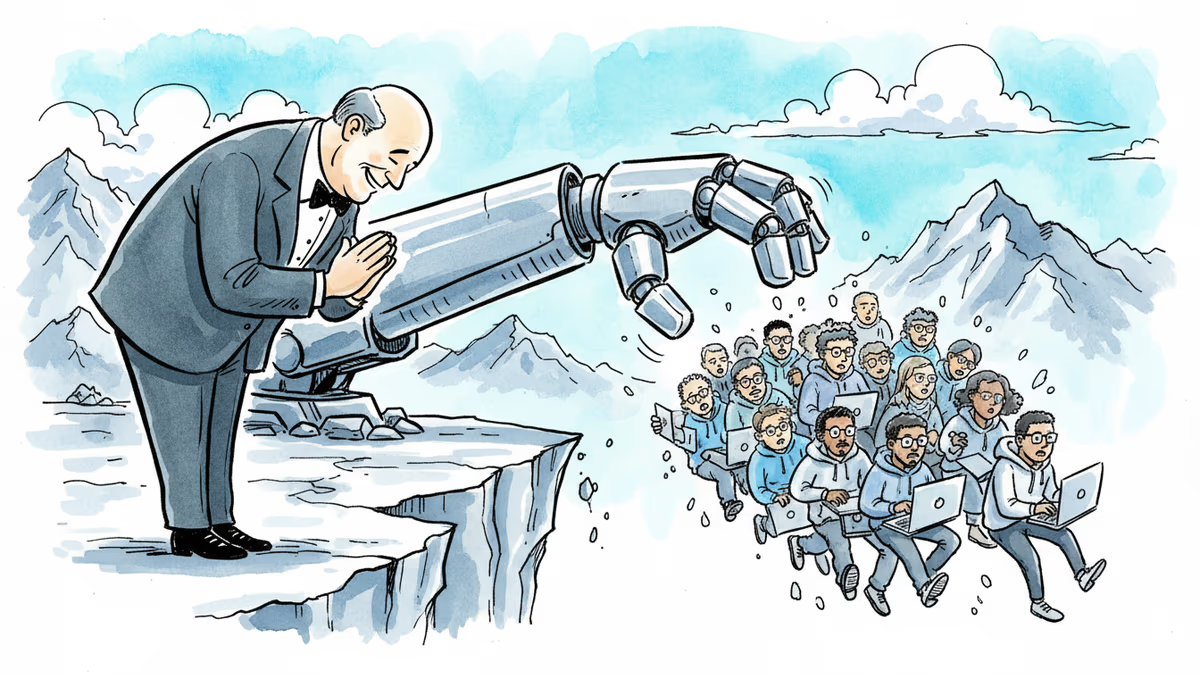

Sam Altman thanked developers for writing code the hard way. The same week, Amazon cut 16,000 jobs. What does gratitude mean when the grateful party built the replacement?

What does a eulogy look like when the person delivering it is also the one who pulled the plug?

The Tweet That Broke the Internet's Irony Meter

On March 17, Sam Altman, CEO of OpenAI, posted this on X: "I have so much gratitude to people who wrote extremely complex software character-by-character. It already feels difficult to remember how much effort it really took. Thank you for getting us to this point."

Read in isolation, it sounds generous. Read in context, it landed like a condolence card signed by the drunk driver.

The same week: Amazon announced 16,000 layoffs. Block cut nearly half its workforce. Atlassian trimmed 10% of its staff. Meta is reportedly weighing cuts that could affect 20% of the company. The stated rationale across the board? AI efficiency. The AI at the center of that efficiency story? Built largely by OpenAI — trained on the very code written by the developers Altman was now thanking.

The structure is almost poetic in its cruelty: the tool was built from their labor, and now the tool is the reason they're being shown the door.

The Internet Responded the Only Way It Knows How

Thousands of replies poured in. Some were direct: "You're welcome. Nice to know that our reward is our jobs being taken away." But the dominant register wasn't rage. It was the kind of dark, exhausted humor that emerges when anger has nowhere useful to go.

"Sam's eulogy for software engineers."

"Billion dollar app idea: AI that reads billionaire tweets before they post them and says 'this is going to make you sound incredibly out of touch, are you sure?'"

"This reads like something the Mayans would say right before the ceremony starts."

One commenter simply noted they were grateful to OpenAI for doing the AI work so they could use free Chinese open-source models instead, a jab that managed to sting Altman and geopolitics simultaneously.

The fact that memes outpaced manifestos in the replies says something about where developer culture is right now: too tired to march, too sharp to stay silent.

Why This Moment Is More Than a PR Fumble

It would be easy to dismiss Altman's post as a tone-deaf mistake by someone who spends too much time on X. But the discomfort runs deeper than optics.

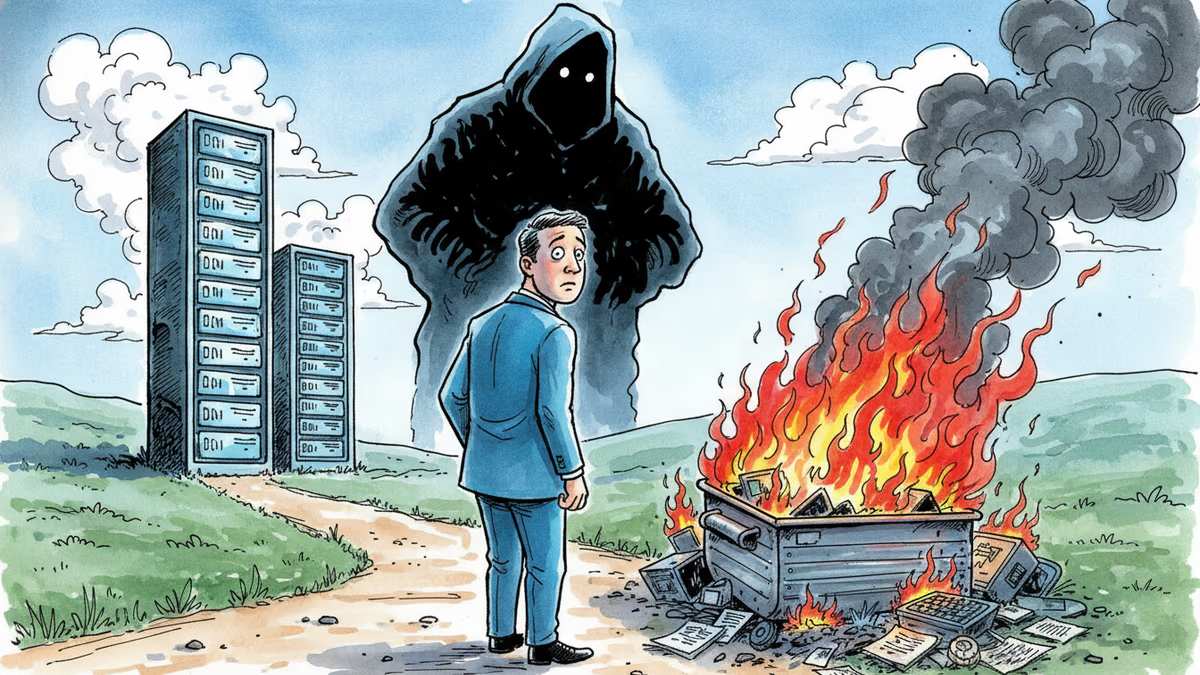

The post carries an embedded assumption: that character-by-character coding is now a historical artifact, something to be remembered fondly, like punch cards or dial-up modems. That framing matters. It's not just a sentiment — it's a narrative that normalizes the transition, softens the edges of displacement, and asks the displaced to feel good about their contribution to the machine that replaced them.

For senior engineers, the picture is complicated but survivable. AI tools arguably make experienced developers more powerful — better at architecture, faster at prototyping. But the junior developer pipeline is a different story. Junior roles are precisely the ones evaporating fastest. And junior roles are how senior engineers are made. If the entry ramp disappears, the expertise doesn't just pause — it eventually stops being replenished.

Three Ways to Read the Same Story

From a shareholder perspective, this is an unambiguous win. Fewer engineers, same (or greater) output, lower burn rate. Several companies saw their stock tick up on layoff announcements. The market is pricing in efficiency, not sentiment.

From a developer perspective, the frustration isn't just about job security. It's about the social contract of a craft. Developers spent years, sometimes decades, building the internet, the apps, the infrastructure. OpenAI trained on that work, in many cases without explicit consent, and built a product that now competes with the people who created the training data. The CEO's response is a thank-you post.

From a policy perspective, governments are still largely playing catch-up. "AI investment" and "workforce reskilling" are the talking points, but the specifics of who retrains, into what roles, at what cost, and with what timeline remain frustratingly vague — especially when the displacement is happening at a pace that makes five-year plans feel like fiction.
