OpenAI Training Data Contractor Controversy 2026: Real Work Uploads Requested

On January 10, 2026, reports surfaced that OpenAI is asking contractors for real-world work files to train AI. Explore the legal and IP implications of this move.

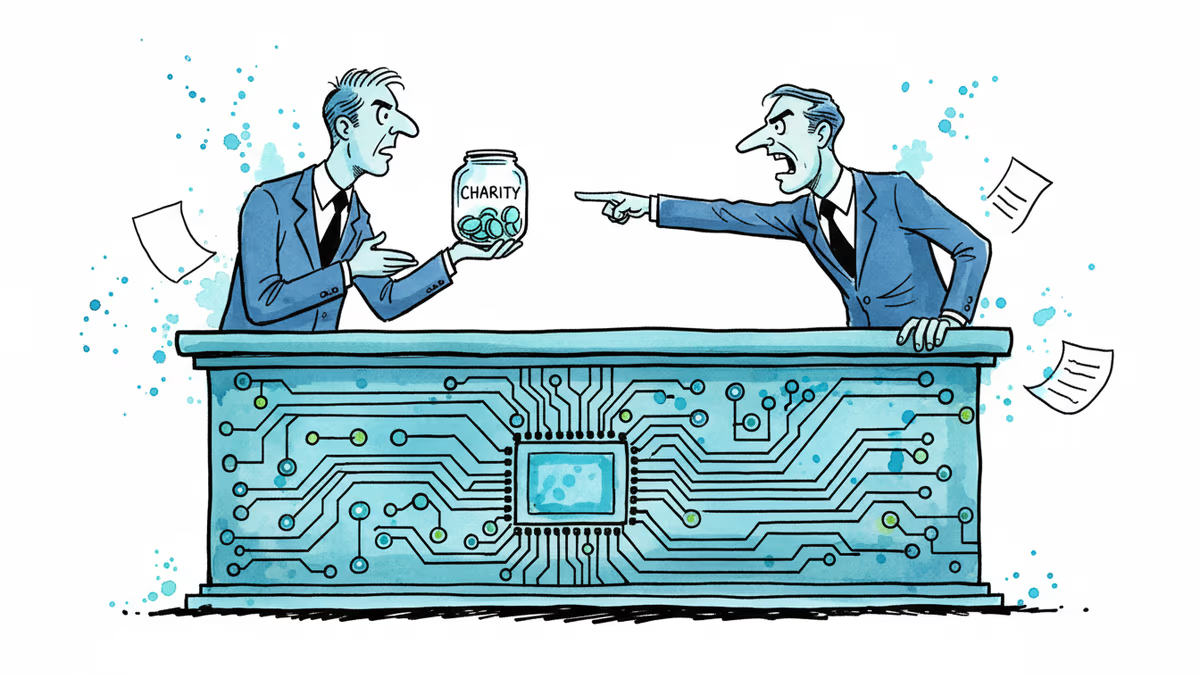

They don't just want your skills; they want your files. According to a report by Wired, OpenAI and training data firm Handshake AI are asking third-party contractors to upload actual work samples from their past and current jobs. This aggressive move highlights a shift in AI strategy as companies scramble for high-quality, specialized data to automate complex white-collar tasks.

The OpenAI Training Data Contractor Controversy 2026: What's Being Asked

An internal OpenAI presentation reportedly instructs contractors to provide 'real, on-the-job work' examples. The company isn't asking for summaries; it wants the actual files, including Word documents, PDFs, PowerPoint slides, Excel sheets, images, and code repositories. By analyzing how professionals structure their work, OpenAI aims to refine its models' reasoning and execution capabilities beyond what public internet data can provide.

Superstar Scrubbing and the Legal Grey Area

To mitigate privacy concerns, contractors are told to delete proprietary and personally identifiable information (PII). OpenAI even pointed them to a ChatGPT-based tool called Superstar Scrubbing to assist in the cleaning process. However, intellectual property lawyer Evan Brown warned that this approach is fraught with risk, noting that it relies heavily on the judgment of individual contractors to decide what is confidential. OpenAI declined to comment on the matter.


