OpenAI Fires Employee for Insider Trading on Prediction Markets

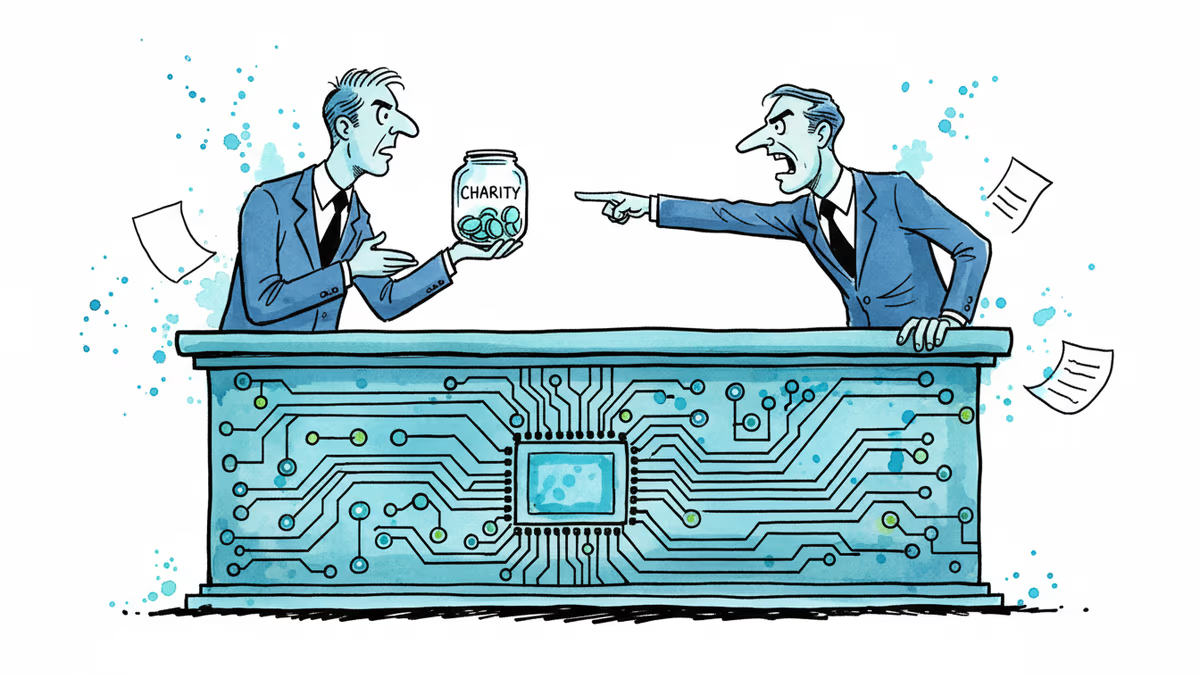

OpenAI terminated an employee for using confidential information to trade on prediction markets like Polymarket. As these platforms grow, insider trading concerns multiply.

From $470K Winnings to Corporate Firing: Prediction Markets Heat Up

Just weeks after an accountant won a $470,300 jackpot betting against DOGE believers, OpenAI has fired an employee for using company secrets to trade on prediction markets.

The AI giant confirmed on February 27 that it terminated a worker who allegedly used confidential OpenAI information for trades on platforms like Polymarket. The company didn't name the employee.

"Such actions violated a company policy that bans workers from using inside information for personal gain, including on prediction markets," a spokesperson explained.

The Blurry Line Between Finance and Gambling

Prediction markets like Polymarket and Kalshi let people wager on real-world events. Current bets include what products OpenAI will announce in 2026 and when the company might go public. The stakes can be enormous.

These companies insist they run financial platforms, not gambling sites. Kalshi operates as a regulated exchange and recently fined and banned a MrBeast editor for similar alleged insider trading. The distinction matters for both regulation and legitimacy.

Tech Companies Face a New Dilemma

The OpenAI firing highlights a growing challenge for tech companies. As prediction markets expand, how do you prevent employees from monetizing their insider knowledge?

This isn't just about obvious cases like trading on earnings before they're public. Employees at AI companies know about model releases, partnership deals, and strategic pivots weeks or months in advance. When betting markets exist for these exact events, the temptation is real.

The Regulatory Response

Kalshi's quick action against the MrBeast editor shows how seriously regulated platforms take insider trading. But enforcement remains patchy across the prediction market ecosystem. Some platforms operate in regulatory gray areas, making consistent enforcement difficult.

The OpenAI case could signal stricter corporate policies ahead. Other tech giants are likely reviewing their own employee trading rules as prediction markets go mainstream.
