Why OpenAI Just Declared War on DeepSeek's Ghost Model

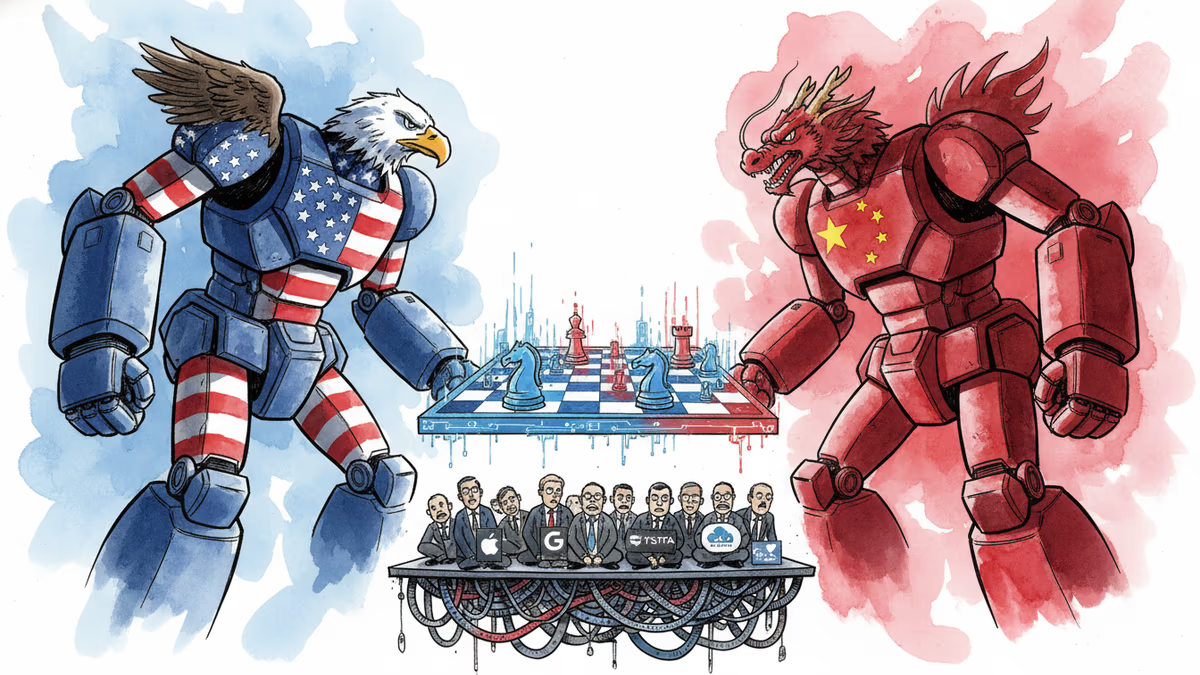

OpenAI accused DeepSeek of stealing AI capabilities before DeepSeek had even launched a new model. The real battle isn't about copying; it's about who controls AI's future.

They're Fighting Over a Model That Doesn't Exist Yet

OpenAI just threw the first punch in what might be 2026's biggest AI showdown. The target? A DeepSeek model that hasn't even been announced.

On February 12, OpenAI told the U.S. House Select Committee on China that "DeepSeek's next model (whatever its form) should be understood in the context of its ongoing efforts to free-ride on the capabilities developed by OpenAI and other US frontier labs."

The timing screams strategy. With China's Lunar New Year approaching next week, industry watchers expect that DeepSeek may pull another surprise launch, as it did last year when its R1 model blindsided Silicon Valley overnight.

That launch didn't just introduce a new AI model. It rewrote the rules of the global AI race, proving that Chinese companies could match U.S. performance with far fewer advanced chips.

The 'Distillation' Accusation That Everyone Uses

OpenAI's core complaint centers on "distillation"—a technique where smaller models learn from larger ones' outputs to replicate their capabilities. It's like studying someone's homework to understand the subject.
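For readers who want to see the mechanics, here is a minimal sketch of the textbook form of distillation, in which a student model is trained to match a teacher's output probabilities. It is an illustration only, not a description of anything DeepSeek or OpenAI actually did: the function names, the toy logits, and the temperature value are all assumptions made for this example, and distillation through a text-only API would likely train on generated text rather than on raw scores like these.

```python
import math


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature softens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    Hypothetical helper for illustration: the loss is zero when the student
    reproduces the teacher's distribution and grows as the two diverge.
    """
    p = softmax(teacher_logits, temperature)  # teacher: the "homework" being studied
    q = softmax(student_logits, temperature)  # student: the model being trained
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Toy scores over three possible next tokens (made-up numbers).
teacher = [4.0, 1.0, 0.5]
close_student = [3.8, 1.1, 0.6]
far_student = [0.5, 1.0, 4.0]

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

Training the student means adjusting its weights to push this loss toward zero over many examples, so the student that already resembles the teacher scores lower than the one that does not.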

"We have observed accounts associated with DeepSeek employees developing methods to circumvent OpenAI's access restrictions," the memo detailed, "accessing models through obfuscated third-party routers and other ways that mask their source."

But here's the twist: distillation is standard industry practice. Neil Shah from Counterpoint Research puts it bluntly: "The reality is none of the models is an island and the entire industry has mostly evolved based on recursive learning."

So why is OpenAI crying foul now?

Open Source vs. Closed Gardens: The Real Battle

DeepSeek's success sparked something bigger than technical achievement—it catalyzed China's embrace of open-weight AI models. These systems let developers worldwide download, modify, and deploy AI capabilities freely.

This directly challenges the closed-system approach favored by U.S. tech giants like Google and OpenAI, who tightly control access to their models, data, and architecture.

The past month has seen Chinese tech companies racing to release open models ahead of any potential DeepSeek announcement. It's not just competition—it's ecosystem warfare.

Austin Horng-En Wang from RAND Corporation suggests a strategic motive: "One possible reason for the accusation is to prevent DeepSeek and China companies from acquiring more chips to distill the U.S. model, so that the U.S. models can keep their leading position."

What This Means for Everyone Else

For developers and businesses caught between these AI superpowers, the implications are stark. Choose the closed U.S. ecosystem, and you're locked into expensive, controlled access. Embrace China's open approach, and you might face regulatory backlash.

The semiconductor export controls that followed DeepSeek's breakthrough last year show how quickly technical disputes become geopolitical weapons. Companies worldwide are now forced to navigate not just technical choices, but diplomatic minefields.

India's recent AI Impact Summit proposed a "third way"—AI development focused on public good rather than corporate control. But can smaller players really chart an independent course when the two AI superpowers are drawing battle lines?
