Why Nvidia Just Bet $4B on Light-Speed Data

Nvidia's twin $2B investments in Lumentum and Coherent signal a shift from GPU wars to data transmission battles. The real bottleneck in AI isn't compute; it's connection.

The $4 Billion Question

Nvidia dropped $4 billion on Monday—$2 billion each into Lumentum and Coherent, two companies most people have never heard of. They make optical transceivers, circuit switches, and lasers. Not exactly the flashy stuff that grabs headlines, but here's the thing: without them, AI's future hits a wall.

The chip giant's bet isn't really about photonics technology. It's about solving the problem that's keeping Jensen Huang awake at night—what happens when thousands of GPUs need to talk to each other at once, and copper wires just can't keep up?

When Speed Hits Physics

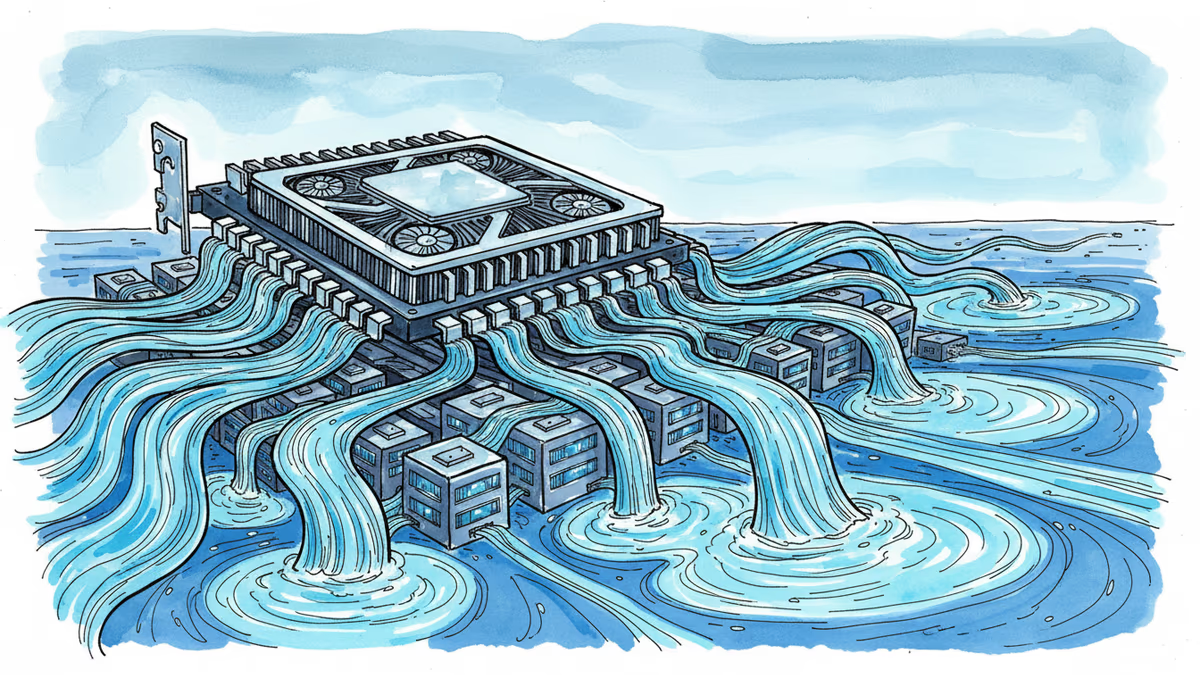

Picture this: you've got 10,000 GPUs working on the next breakthrough AI model. Each chip is a computational beast, but they're only as fast as the slowest link between them. Traditional copper connections are hitting their physical limits—they generate too much heat, consume too much power, and simply can't move data fast enough.

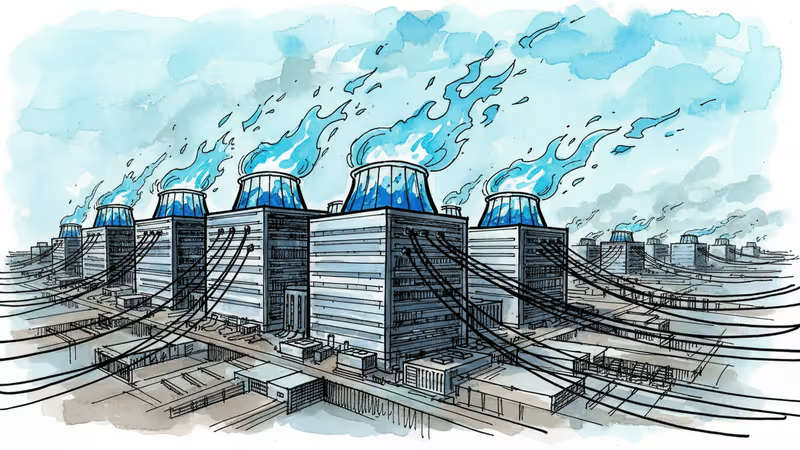

That's where photonics comes in. Instead of pushing electrical signals through copper, you're sending data as pulses of light through optical fiber. At high data rates, optical links carry more bandwidth over longer distances with less signal loss, which means less power and less heat per bit moved. For AI data centers that already consume as much electricity as small cities, that efficiency matters.

Nvidia's deal with Lumentum includes a "multibillion purchase commitment" over multiple years. This isn't just investment—it's a guarantee they'll buy massive quantities of optical gear.

The Infrastructure Arms Race

This move builds on Nvidia's 2020 acquisition of Mellanox, which brought high-speed InfiniBand and Ethernet networking in-house alongside its own NVLink technology for GPU-to-GPU connections. Back then, the focus was on making individual connections faster. Now the company is reimagining the entire data center's circulatory system.

Amazon, Microsoft, and Google are watching closely. They've built their cloud empires on traditional networking, but if optical connections become the new standard, they'll need to adapt fast. The question isn't whether they'll follow—it's how quickly they can catch up.

The Power Problem

Here's a number that should worry everyone: ChatGPT reportedly consumes 500,000 kWh daily, roughly what 17,000 average US homes use in a day. As AI models grow larger and more complex, that energy demand will explode.
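For readers who want to sanity-check the household comparison, here is a minimal back-of-the-envelope sketch. The 500,000 kWh figure is the one reported above. The 29 kWh per home per day value is an assumption, based on approximate average US household consumption, and the function name is purely illustrative.

```python
# Rough check: how many average homes does a daily energy figure correspond to?

CHATGPT_KWH_PER_DAY = 500_000  # reported daily consumption cited in the article
HOME_KWH_PER_DAY = 29          # ASSUMPTION: approximate average US household daily use


def homes_equivalent(total_kwh_per_day: float, home_kwh_per_day: float) -> float:
    """Return the number of homes whose combined daily use equals the total."""
    return total_kwh_per_day / home_kwh_per_day


homes = homes_equivalent(CHATGPT_KWH_PER_DAY, HOME_KWH_PER_DAY)
print(f"{homes:,.0f} homes")  # prints "17,241 homes"
```

Under that assumption the comparison lands in the tens of thousands of homes. A different per-home figure, such as a non-US average, would shift the result proportionally.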

Photonics offers a partial solution. Optical connections are more energy-efficient than copper, generate less heat, and reduce cooling costs. For an industry facing increasing scrutiny over its environmental impact, that's not just a nice-to-have—it's essential.

The Skeptics' View

Not everyone's convinced this is the right bet. Some industry veterans argue that Nvidia's throwing money at a problem that could be solved more elegantly through better software optimization or chip design. "They're building highways when they should be making cars more efficient," one former Intel executive told me off the record.

There's also the timing question. Photonics technology is still expensive and complex to manufacture at scale. Nvidia is essentially betting that costs will come down fast enough to make economic sense before the next major technological shift.
