AI Can Now Hunt Down Your Secret Online Accounts

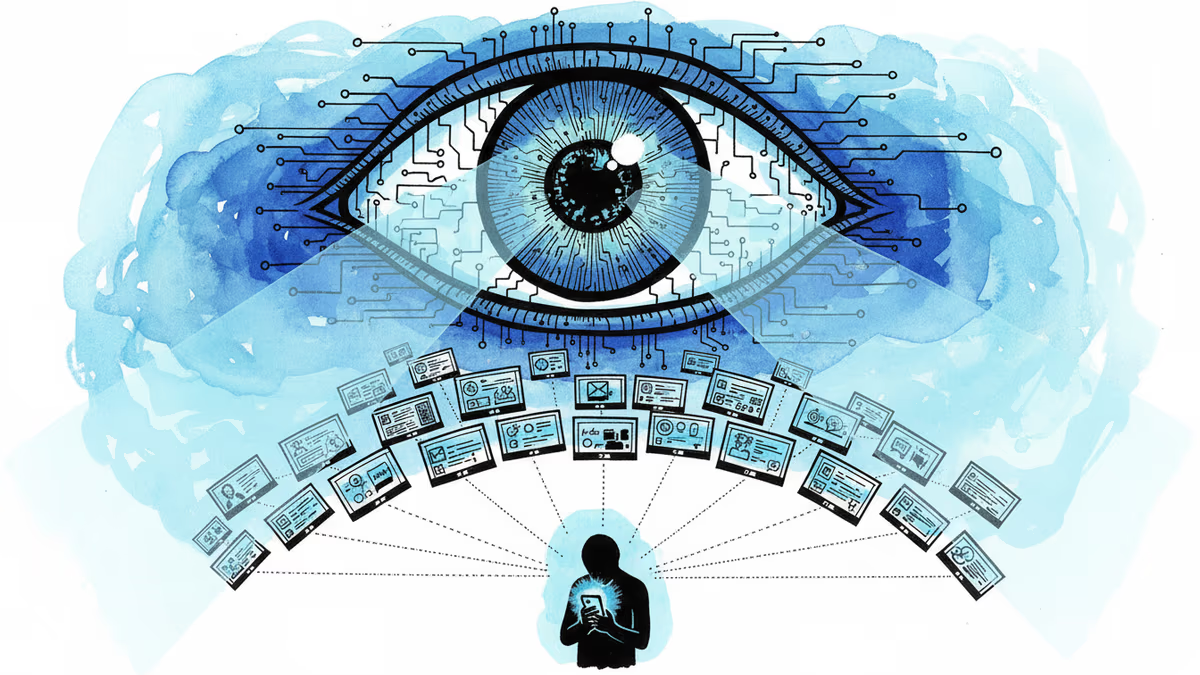

Researchers from ETH Zurich and partner institutions developed an AI system capable of linking anonymous online accounts to real identities. What does this mean for online privacy?

That Reddit alt where you vent about your boss? Your secret Twitter account for controversial takes? Your anonymous Glassdoor review trashing company culture? AI might have just made them a lot less secret.

Researchers from ETH Zurich, Anthropic, and the Machine Learning Alignment and Theory Scholars program have developed an automated system that can hunt down your digital breadcrumbs across the internet. While the study hasn't been peer-reviewed yet, it's raising uncomfortable questions about the future of online anonymity.

How the Digital Detective Works

The AI system operates like a tireless digital sleuth, using automated agents that can search the web and interact with information across platforms. Though the researchers haven't disclosed which specific AI models power the system, the concept is both elegant and unsettling.

It's all about pattern recognition. Every time you type, you leave behind a unique digital fingerprint - your writing style, the topics you care about, when you're active online, even your typos. The AI pieces together these subtle signals to connect seemingly unrelated accounts to a single person.
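To make the idea of a writing "fingerprint" concrete, here is a minimal sketch of one classic stylometry technique: comparing how often short character sequences appear in two texts. This is not the researchers' system, whose models and methods haven't been disclosed. The function names (`char_ngrams`, `cosine_similarity`, `style_similarity`) and the choice of three-character sequences are assumptions made purely for illustration.

```python
from collections import Counter
from math import sqrt


def char_ngrams(text: str, n: int = 3) -> Counter:
    """Count overlapping character n-grams in lowercased, whitespace-normalized text."""
    cleaned = " ".join(text.lower().split())
    return Counter(cleaned[i:i + n] for i in range(len(cleaned) - n + 1))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors (0.0 to 1.0)."""
    # Counter returns 0 for missing keys, so unshared n-grams contribute nothing.
    dot = sum(count * b[gram] for gram, count in a.items())
    norm_a = sqrt(sum(c * c for c in a.values()))
    norm_b = sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def style_similarity(text_a: str, text_b: str, n: int = 3) -> float:
    """Illustrative helper: how alike two texts look at the character level."""
    return cosine_similarity(char_ngrams(text_a, n), char_ngrams(text_b, n))


if __name__ == "__main__":
    post_a = "tbh the meeting was pointless, definately a waste of time"
    post_b = "tbh that standup was pointless and definately too long"
    print(round(style_similarity(post_a, post_b), 3))
```

Habitual misspellings and filler phrases push the score up because they produce the same character sequences in both texts. On short snippets like these the number is noisy, so a score from a sketch like this is a hint at best, not proof that two accounts share an author.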

Consider this scenario: You maintain a professional LinkedIn presence by day, but blow off steam on anonymous forums by night. If both accounts show activity during your lunch breaks, use similar phrases, and reference the same local events, that's enough for AI to start connecting the dots.
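The timing part of that scenario can be sketched in a few lines. The example below builds an hour-of-day activity profile for each account and measures how much the two profiles overlap. It is an illustrative assumption about how such a signal could be computed, not a description of the study's method; `hourly_profile` and `profile_overlap` are hypothetical names, and the sketch assumes all timestamps are already in the same time zone.

```python
from datetime import datetime


def hourly_profile(timestamps: list[datetime]) -> list[float]:
    """Fraction of posts falling in each hour of the day (24 bins)."""
    counts = [0] * 24
    for ts in timestamps:
        counts[ts.hour] += 1
    total = len(timestamps)
    return [c / total for c in counts] if total else [0.0] * 24


def profile_overlap(p: list[float], q: list[float]) -> float:
    """Histogram intersection: 1.0 for identical profiles, 0.0 for disjoint ones."""
    return sum(min(a, b) for a, b in zip(p, q))


if __name__ == "__main__":
    # Made-up timestamps for two hypothetical accounts.
    professional = [datetime(2025, 3, d, 12, 15) for d in range(3, 8)]
    anonymous = [datetime(2025, 3, d, 12, 40) for d in range(3, 8)]
    overlap = profile_overlap(hourly_profile(professional), hourly_profile(anonymous))
    print(overlap)
```

Timing on its own is weak evidence, since plenty of people post during lunch. It only becomes telling when it lines up with other signals, such as shared phrasing and references to the same local events.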

The Privacy Paradox

Here's the thing - most of us aren't as anonymous as we think we are. We unconsciously maintain consistent patterns across platforms. The way we structure sentences, our favorite words, even our emoji habits create a behavioral signature that's surprisingly unique.

Privacy advocates are sounding alarms about potential misuse. Imagine authoritarian governments using this technology to unmask dissidents, or corporations silencing whistleblowers by threatening to expose their anonymous complaints.

Cybersecurity professionals see both threat and opportunity. While the technology could help track bad actors across platforms, it also represents a massive privacy vulnerability that could be exploited by malicious entities.

Legal experts are scrambling to understand the implications. Current privacy laws weren't written with this level of cross-platform tracking in mind. The legal framework is playing catch-up with technological reality.

Corporate Calculations

For businesses, this technology presents a double-edged sword. HR departments might salivate at the prospect of screening job candidates' "real" online personas or identifying internal critics. But legal teams would likely flag massive compliance risks under privacy regulations like GDPR.

Marketing departments see dollar signs - imagine the targeting precision possible when you can link customers' professional, personal, and anonymous accounts. But PR teams know the backlash would be swift and severe if such practices became public.

The tech industry itself faces a credibility crisis. After years of promising users control over their data, companies now have tools that could make that promise meaningless.

Fighting Back Against the Machines

Complete anonymity might be getting harder, but it's not game over yet. Security researchers suggest several countermeasures:

- Style switching: Deliberately alter your writing patterns, sentence structure, and vocabulary across accounts
- Temporal dispersion: Vary your activity times to break behavioral patterns
- Technical barriers: VPNs, Tor browsers, and other privacy tools remain effective
- Topic segregation: Keep completely different interests and discussion topics on separate accounts

But here's the catch - maintaining multiple digital personas requires constant vigilance and significant effort. Most people won't bother, which means the technology would likely work against the majority of users.

The Bigger Question

This isn't just about technology - it's about the kind of society we're building. Some argue that increased accountability online could reduce harassment, misinformation, and toxic behavior. Others warn we're sleepwalking into a surveillance state where every opinion can be traced back to its source.

AI researchers themselves are divided. Some see this as a necessary tool for combating online abuse and fraud. Others worry about the chilling effect on legitimate anonymous speech - the kind that has historically protected whistleblowers, activists, and marginalized voices.
