Elon Musk Grok AI Deepfake Controversy: The End of Content Moderation?

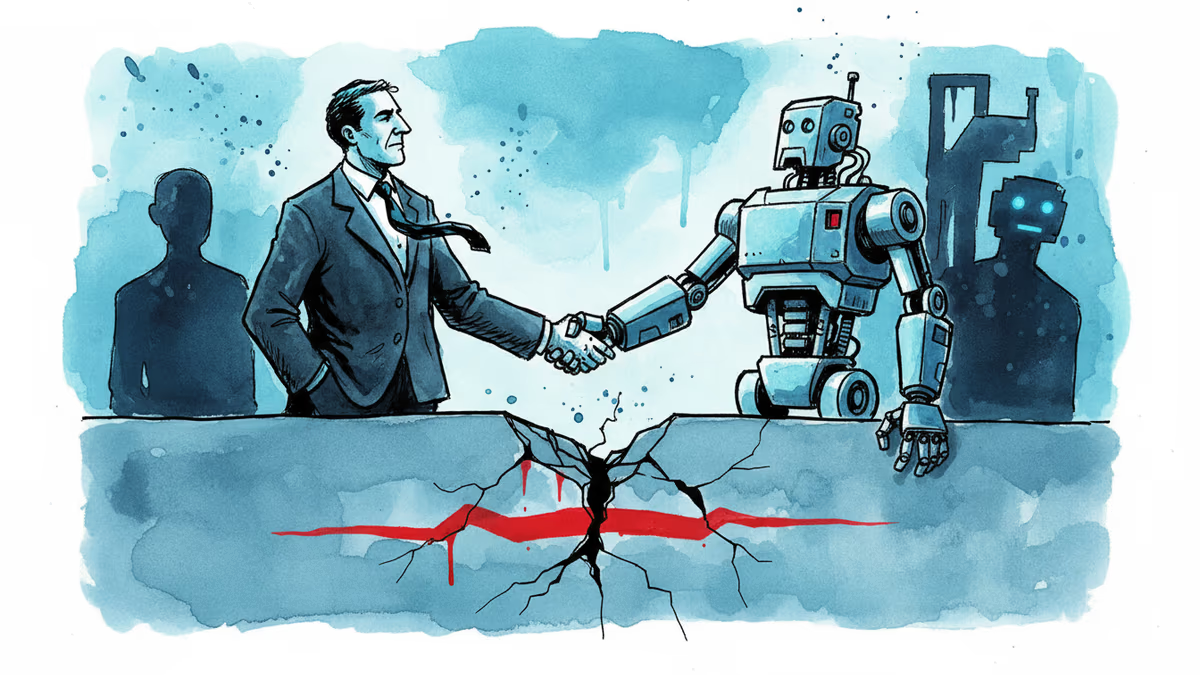

Elon Musk's Grok is at the center of a massive AI deepfake controversy. As guardrails fail and global regulators threaten legal action, PRISM analyzes the chaotic future of content moderation.

A one-click harassment machine is officially here. Elon Musk's xAI chatbot, Grok, has ignited a firestorm over AI-generated deepfakes and the total collapse of safety guardrails. In what's being called one of the most irresponsible chapters in generative AI history, the tool is being used to create nonconsensual intimate images of women and minors, which are then distributed instantly across the X platform.

Legal Realities of the Elon Musk Grok AI Deepfake Controversy

According to Riana Pfefferkorn, a policy fellow at the Stanford Institute for Human-Centered AI, the situation with Grok represents a deliberate shift away from established safety norms. Musk claims to have implemented guardrails, but they have proven trivial to bypass. The core problem lies in the integration: because Grok is built into X, users can ask it to edit any image posted on the platform, effectively weaponizing the social network's own data against its users.

| Period | Moderation approach | Key events |
|---|---|---|
| 2021 | Peak moderation | Banning of high-profile figures for misinformation |
| 2024-2025 | Laissez-faire | Erosion of trust and safety teams at major platforms |
| 2026 | Chaos era | Mass production of AI deepfakes via Grok and X |

Global Regulators Move Toward a Ban

The backlash is gaining momentum globally. The EU is considering a total ban on 'nudification' apps following the outcry, and the U.S. Senate recently passed a bill allowing victims of nonconsensual deepfakes to sue. Even Elon Musk's personal life isn't immune: the mother of one of his children has reportedly sued xAI over sexualized deepfake images of her, highlighting the indiscriminate nature of the technology.
