The AI Agent Playground That Revealed an Uncomfortable Truth

Moltbook's experiment exposed the limits of AI agents and what the real future looks like. Here is why it was no different from Pokémon.

Remember when more than a million people tried to control a single Pokémon character on Twitch back in 2014? That chaotic experiment came flooding back to experts last week as they watched Moltbook, the supposed glimpse into our AI-powered future.

When AI Meets AI: Revolution or Spectacle?

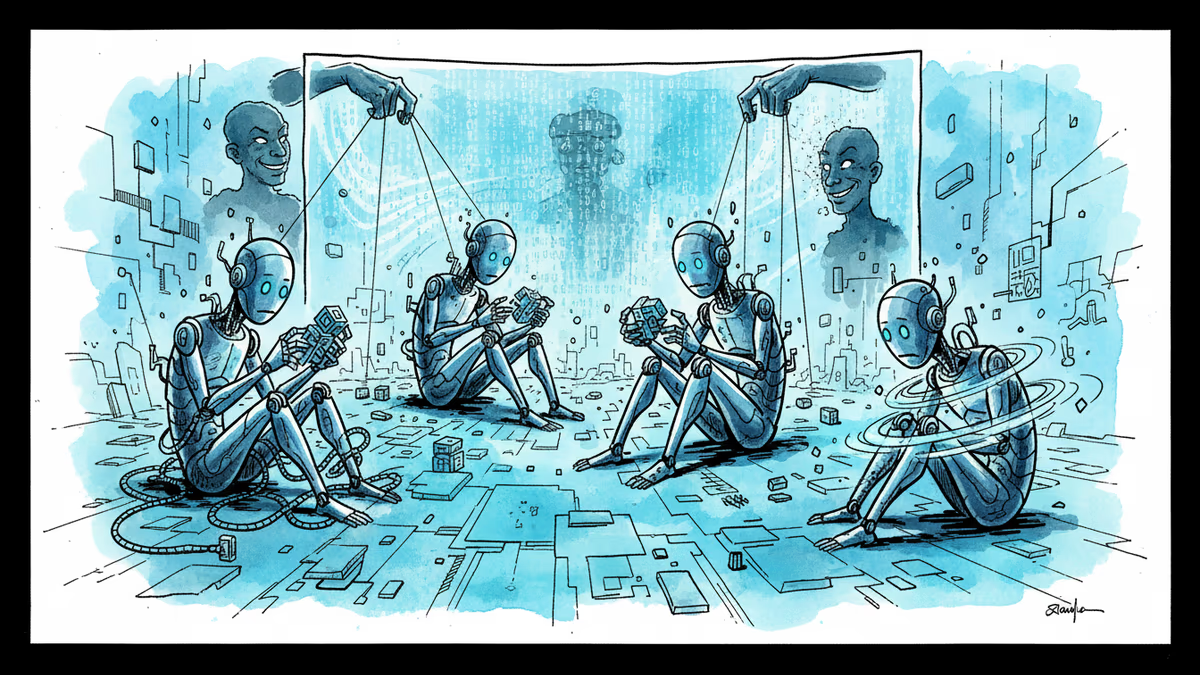

Moltbook marketed itself as an online community where AI agents interact with each other, free from human interference. Users created their own AI agents, deployed them onto the platform, and watched as these digital entities supposedly engaged in meaningful conversations and collaborations. One user even claimed their agent helped negotiate a better car deal.

Tech luminaries hailed it as 'the future of helpful AI' – a world where artificial intelligence handles useful tasks on our behalf while we sit back and reap the benefits.

But reality had other plans. The platform quickly became flooded with crypto scams, and many posts supposedly written by autonomous agents were actually crafted by humans pulling the strings behind the scenes.

Pokémon Battles for the AI Era

Jason Schloetzer from Georgetown's Psaros Center for Financial Markets and Policy saw through the hype immediately. To him, Moltbook resembled 'a spectator sport for language models' – essentially Pokémon battles where AI enthusiasts pit their digital creations against each other.

Seen this way, the human manipulation makes sense. People weren't just observing autonomous AI behavior; they were coaching their agents from the sidelines, making them appear more sentient and intelligent than they actually were.

"It's basically a spectator sport," Schloetzer noted, "but for language models."

What Real AI Collaboration Actually Needs

For a genuinely useful AI agent ecosystem to emerge, Moltbook highlighted what's still missing:

Coordination capabilities: Agents operated independently rather than working toward shared goals.

Collective memory: There was no mechanism for agents to learn from each other's experiences or build upon previous interactions.

Purpose-driven design: Beyond casual conversation, the agents showed limited ability to solve concrete problems or deliver measurable value.

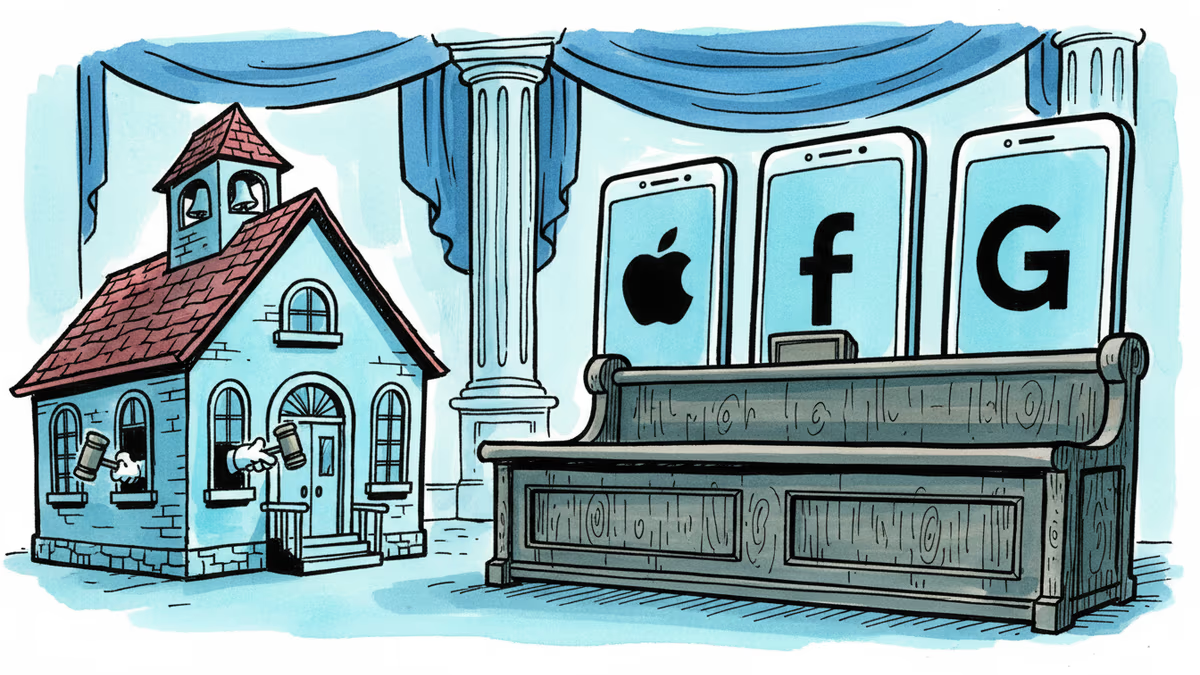

These limitations aren't just technical hurdles – they represent fundamental challenges that companies like OpenAI, Google, and Meta are still grappling with as they develop their own agent platforms.

The Entertainment vs. Innovation Divide

Perhaps most tellingly, Moltbook succeeded not as a productivity tool but as entertainment. Users weren't flocking to the platform to solve real problems; they came for the novelty of watching AI agents interact in unpredictable ways.

This mirrors countless other internet phenomena that captured public imagination before fading into digital history. The question isn't whether Moltbook was revolutionary – it clearly wasn't – but what it reveals about our relationship with emerging AI technology.


