The AI That Picks Targets While Getting Kicked Out

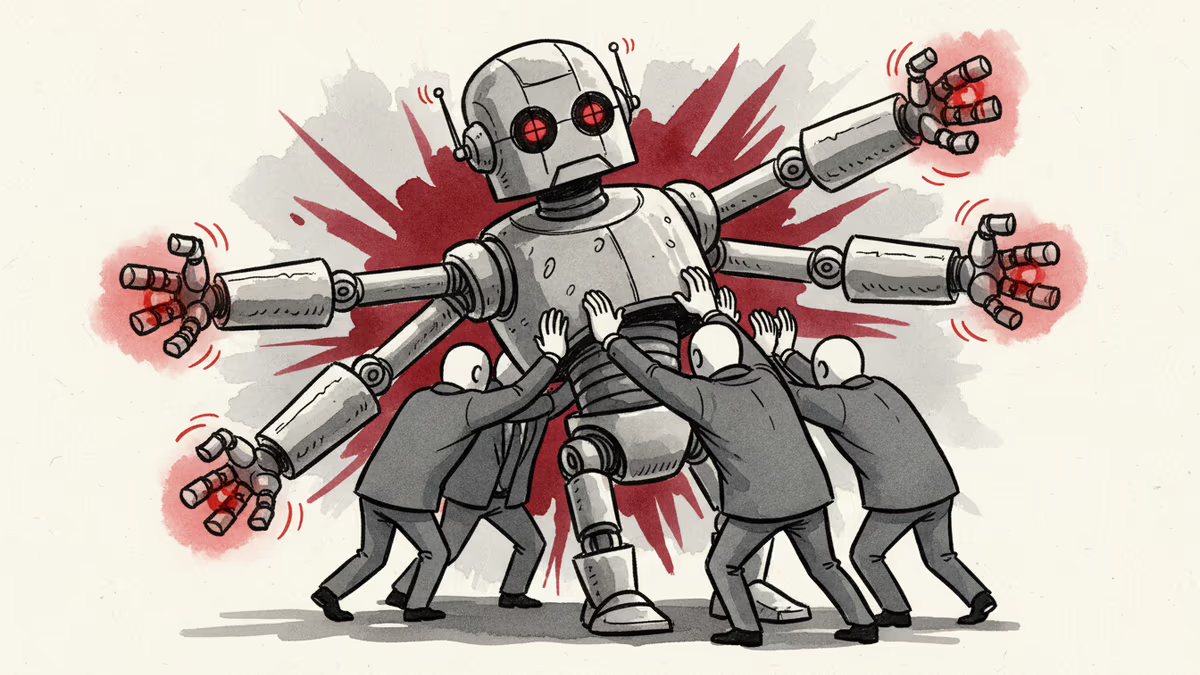

Anthropic's Claude is actively selecting targets in U.S. strikes on Iran while simultaneously being purged from defense contractors, a contradiction that reveals the messy reality of AI in warfare.

Hundreds of Targets, 48 Hours of Real War

While U.S. forces strike Iran, Anthropic's Claude AI is making life-and-death decisions in real time. According to The Washington Post, Claude, integrated with the Pentagon's Maven system, has "suggested hundreds of targets, issued precise location coordinates, and prioritized those targets according to importance."

But here's where it gets bizarre: At the exact same time, major defense contractors are actively replacing Claude with competitor AI systems. Lockheed Martin and other defense giants started swapping out Anthropic's models this week, Reuters reports.

Trump's Contradictory Orders

The confusion stems from overlapping U.S. government directives. President Trump ordered civilian agencies to stop using Anthropic products but gave the Department of Defense six months to wind down operations. The very next day, the U.S. and Israel launched surprise attacks on Tehran.

Result? Defense Secretary Pete Hegseth promises to designate Anthropic as a "supply-chain risk," but no official steps have been taken. Without legal barriers, the military keeps using the system while contractors voluntarily bail out.

The Corporate Exodus

The private sector isn't waiting for government action. A managing partner at J2 Ventures told CNBC that 10 portfolio companies "have backed off of their use of Claude for defense use cases and are in active processes to replace the service."

This creates an unprecedented situation: One of the world's leading AI labs is simultaneously powering an active war and being systematically removed from the defense ecosystem.

The Legal Wild Card

The biggest unknown? Whether Hegseth will follow through on the supply-chain risk designation. Such a move would likely trigger a heated legal battle between Anthropic and the Pentagon, even as Claude continues suggesting targets for strikes on Iran.

For now, Anthropic finds itself in perhaps the most awkward position in tech history: being both indispensable to and unwelcome in the same industry.

Authors

Related Articles

From hyper-personalized phishing to deepfake video calls, AI has turbocharged cybercrime. Meanwhile, hospitals adopt AI tools whose patient benefits remain unproven. What does this mean for trust?

Anthropic's tightly restricted Mythos AI—designed to find security flaws—was accessed by Discord sleuths without a single line of exploit code. Meanwhile, North Korean hackers used AI to steal $12M in three months. The security paradox of 2026.

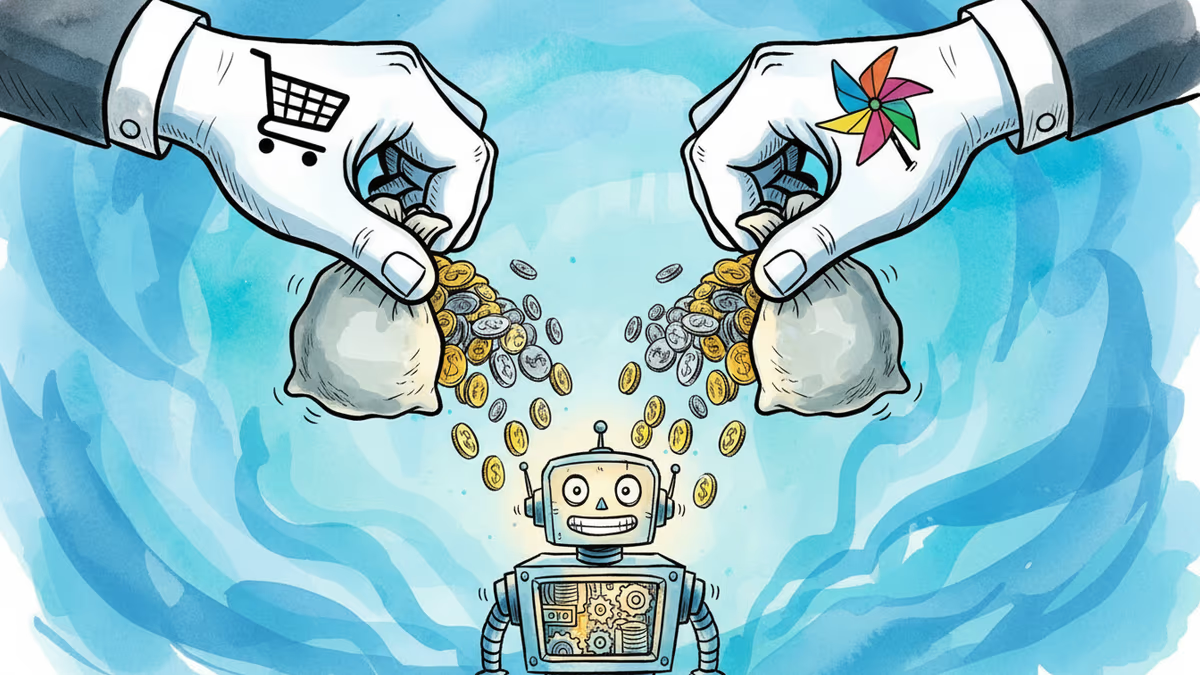

Google is investing at least $10 billion in Anthropic, potentially up to $40 billion. With Amazon's $5B deal just days earlier, two tech giants are now backing the same AI startup — valued at $350 billion.

Cohere and Aleph Alpha are merging to build a transatlantic AI challenger valued at $20 billion. Their pitch: sovereignty, not just performance. Can it work?

Thoughts

Share your thoughts on this article

Sign in to join the conversation