Meta New Mexico trial evidence exclusion: Tech giant fights to keep suicide cases and Harvard past out of court

Meta is fighting to exclude suicide cases, mental health research, and Mark Zuckerberg's Harvard history from its upcoming New Mexico trial over the safety of minors on its platforms.

Meta is heading to court, but its defense is already building a wall. As the company prepares to face trial in New Mexico over allegations that it failed to protect minors from sexual exploitation, it is pushing aggressively to scrub sensitive context from the proceedings.

Meta's Evidence Exclusion Strategy

According to Reuters, Meta has petitioned the judge to exclude several key pieces of information. The company wants to bar research studies on youth mental health, any mention of high-profile teen suicide cases linked to social media, and even details about the company's massive financial resources.

Meta's legal team is also seeking to block references to Mark Zuckerberg's undergraduate years at Harvard University and to the personal activities of its employees. These requests, known as motions in limine, are standard pretrial maneuvers that ask the judge to keep irrelevant or unfairly prejudicial information away from the jury.

Protecting a Fair Trial vs. Hiding Corporate Liability

Meta argues these exclusions are necessary to keep the focus on the facts of the New Mexico case. Critics see the move as an attempt to distance the company from the systemic harms its platforms may have caused. The judge's rulings on what stays and what goes will determine how much of what Meta knew internally can be used against it in court.

Authors

Related Articles

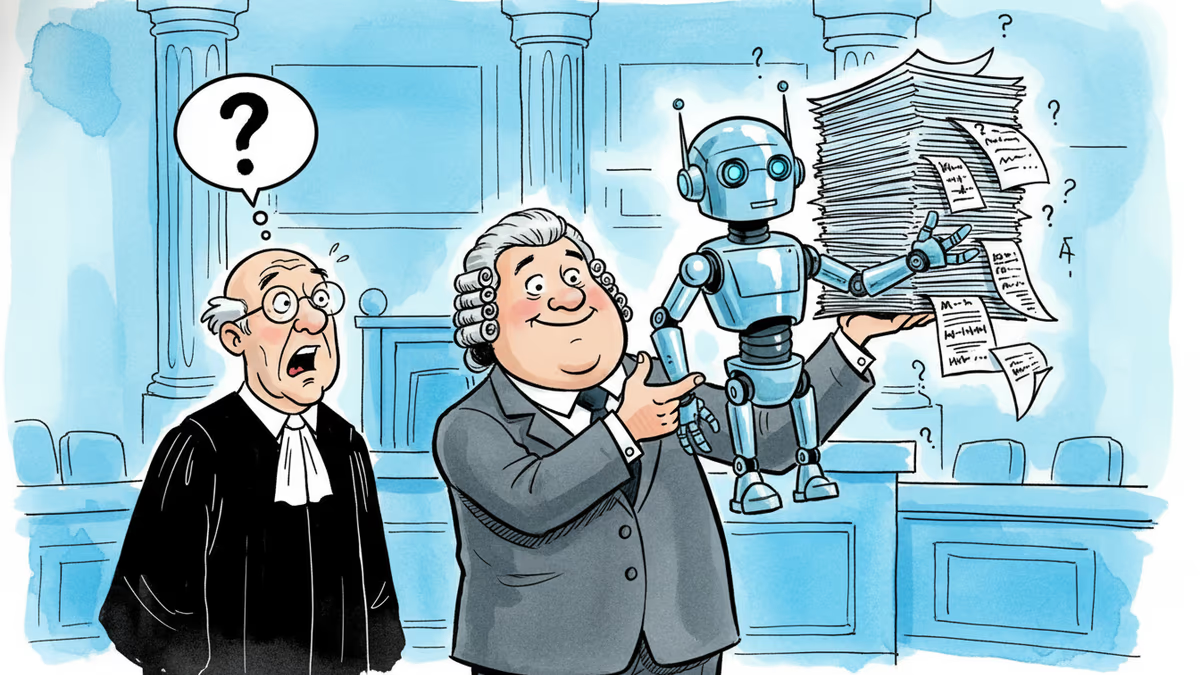

A law firm marketing itself on AI-powered legal success submitted fake citations in a federal appeal. Now its lawyers face sanctions — and the broader AI legal industry faces a credibility crisis.

Five major publishers and author Scott Turow have filed a class action lawsuit against Meta, alleging the company used illegal pirate sites like LibGen to train its Llama AI models without permission.

New Mexico already won $375 million from Meta. Now it wants something harder to give: a court order forcing Facebook, Instagram, and WhatsApp to redesign themselves. A three-week trial starts Monday.

Meta acquired humanoid robotics startup ARI, adding to a Big Tech arms race in physical AI. The real prize isn't a robot product — it's a new way to train intelligence.

Thoughts

Share your thoughts on this article

Sign in to join the conversation