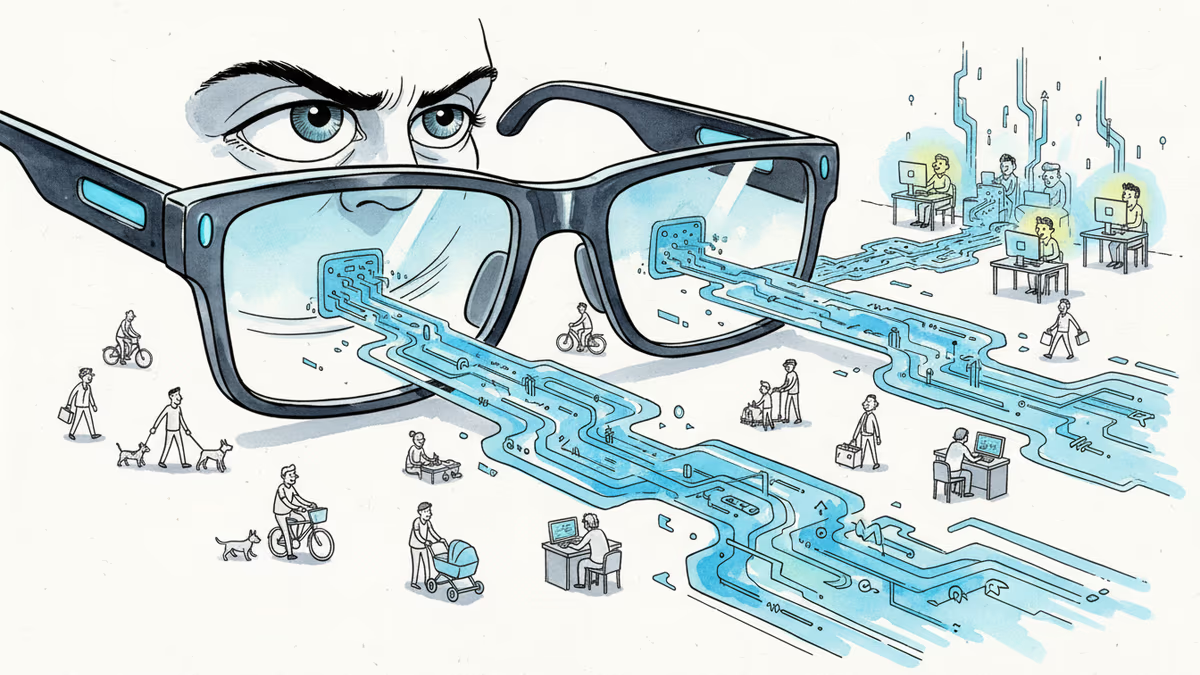

Your Ray-Ban Smart Glasses Footage Was Secretly Watched by Strangers

Meta subcontractor employees in Kenya have been viewing sensitive footage captured by Ray-Ban Meta smart glasses for AI training. What does this mean for smart glasses privacy?

What if the private moments you captured with your Ray-Ban smart glasses were being watched by complete strangers halfway around the world?

That's exactly what's been happening, according to a Swedish investigation. Employees at Sama, a Kenya-based subcontractor, have been viewing sensitive user content captured by Ray-Ban Meta smart glasses as part of their data annotation work for Meta's AI systems.

The revelation raises uncomfortable questions about what happens to our most personal moments when they're fed into the AI training machine.

Over 30 Workers Speak Out

The investigation, conducted by Swedish newspapers Svenska Dagbladet and Göteborgs-Posten together with Kenya-based freelance journalist Naipanoi Lepapa, drew on interviews with more than 30 Sama employees at various levels. These workers handle video, image, and speech annotation for Meta's AI systems – including footage from smart glasses.

The journalists couldn't access the actual materials or work areas, but they also spoke with former Meta employees in the US who had witnessed live data annotation for several Meta projects. The picture that emerges is one of routine human review of content that users might assume is private.

The workers aren't just processing random data – they're viewing real footage captured by real people wearing smart glasses in their daily lives. Intimate moments, private conversations, personal spaces – all potentially visible to annotators whose job is to teach AI systems how to understand the world.

The AI Training Dilemma

From Meta's perspective, this isn't malicious surveillance – it's necessary AI development. Training sophisticated AI models requires massive amounts of labeled data, and complex real-world footage from smart glasses needs human eyes to properly categorize and understand.

But here's where it gets murky: How many users actually understood this when they bought their smart glasses?

Privacy advocates argue this represents a fundamental breach of user expectations. When someone puts on smart glasses to capture a family dinner or a walk in the park, they're not typically thinking about Kenyan data workers reviewing that footage.

The issue becomes even more complex when you consider that smart glasses capture not just the wearer's perspective, but everyone around them. Bystanders never consented to having their images processed by overseas contractors.

A Global Privacy Reckoning

This controversy hits at a critical moment for the smart glasses market. Apple is rumored to be developing its own smart glasses, Google has ongoing AR projects, and dozens of startups are betting big on wearable cameras becoming mainstream.

Each of these companies will face the same fundamental challenge: How do you train AI on real-world data while respecting user privacy?

European regulators, already aggressive about data protection through GDPR, are likely watching closely. The US Congress, which has been increasingly skeptical of Big Tech's data practices, may also take notice.

For investors, this represents both risk and opportunity. Companies that can solve the privacy-AI training puzzle first may gain a significant competitive advantage.

The Transparency Test

What's particularly striking about this case is how it exposes the gap between user expectations and corporate reality. Most people buying smart glasses probably assume their data stays within Meta's systems, not that it's being reviewed by third-party contractors in different countries.

This isn't necessarily illegal – it likely falls within the terms of service users agreed to. But it highlights how those terms of service often don't match user intuition about privacy.

The solution isn't necessarily to stop human review of AI training data – that would likely harm AI development. Instead, companies need radically better transparency about what happens to user data and clearer consent mechanisms.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.
