Meta AI Character Access for Teens Paused Globally Amid Legal Battles

Meta is pausing AI character access for teens globally as it faces lawsuits over child safety and addiction. A new PG-13 version with parental controls is in development.

The conversation between teens and AI just hit the pause button. Meta told TechCrunch, in an exclusive, that it is disabling teenagers' access to its AI characters across all of its apps worldwide. The company says it is not abandoning the project; instead, it is stepping back to rebuild the experience with safety at the forefront.

Meta AI Character Access for Teens Halted Under Legal Pressure

The pause comes just days before a high-stakes trial in New Mexico, where Meta is accused of failing to protect children from sexual exploitation. Next week, CEO Mark Zuckerberg is also expected to testify in a separate case over social media addiction. With legal pressure mounting on several fronts, Meta is being pushed to rethink how its AI interacts with younger users.

As Meta put it:

> Teens will no longer be able to access AI characters across our apps until the updated experience is ready... this applies to anyone who has given us a teen birthday or those we suspect are teens via age prediction tech.

Building a Digital Sandbox: PG-13 AI

The upcoming version of these AI characters will reportedly follow a PG-13 rating model. Parents will soon have the power to monitor chat topics or shut down AI interactions entirely. The AI's scope will be narrowed to safe zones like education, sports, and hobbies, strictly avoiding extreme violence or graphic content.

| Feature | Current Status | Future Update |
|---|---|---|
| Teen Access | Paused Globally | Restricted Access |
| Parental Control | Limited | Full Monitoring & Toggle |
| Content Scope | Open-ended | Education & Hobbies (PG-13) |
| Verification | Self-declared | AI Age Prediction |

Related Articles

Florida is investigating OpenAI over alleged links to a mass shooting. As AI firms quietly restrict their most powerful tools, a harder question is taking shape: who's legally responsible when AI helps someone plan violence?

Florida's AG is investigating OpenAI over a campus shooting, child safety risks, and national security concerns. What it means for AI regulation in America.

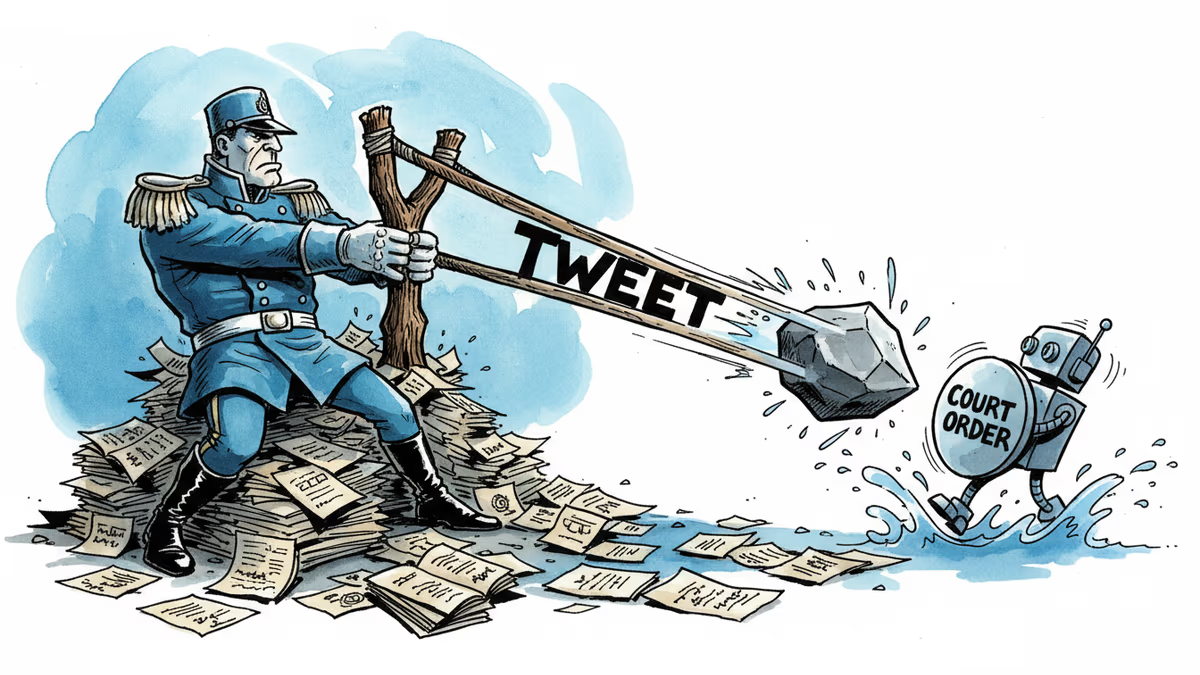

A California judge blocked the Pentagon from labeling Anthropic a supply chain risk. The 43-page ruling exposes a pattern: tweet first, lawyer later. What it means for AI governance and the limits of government leverage.

Pinterest's CEO is calling for a government ban on social media for under-16s — and using his own platform's data to prove it won't hurt business. What happens next?
