Beyond Words: Decoding Brain-Computer Interface Facial Gestures for Future Neural Prosthetics

BCI technology is evolving to decode facial gestures. Researchers at the University of Pennsylvania use macaque models to map the neural circuitry behind facial expressions, aiming to restore emotional nuance for patients with paralysis.

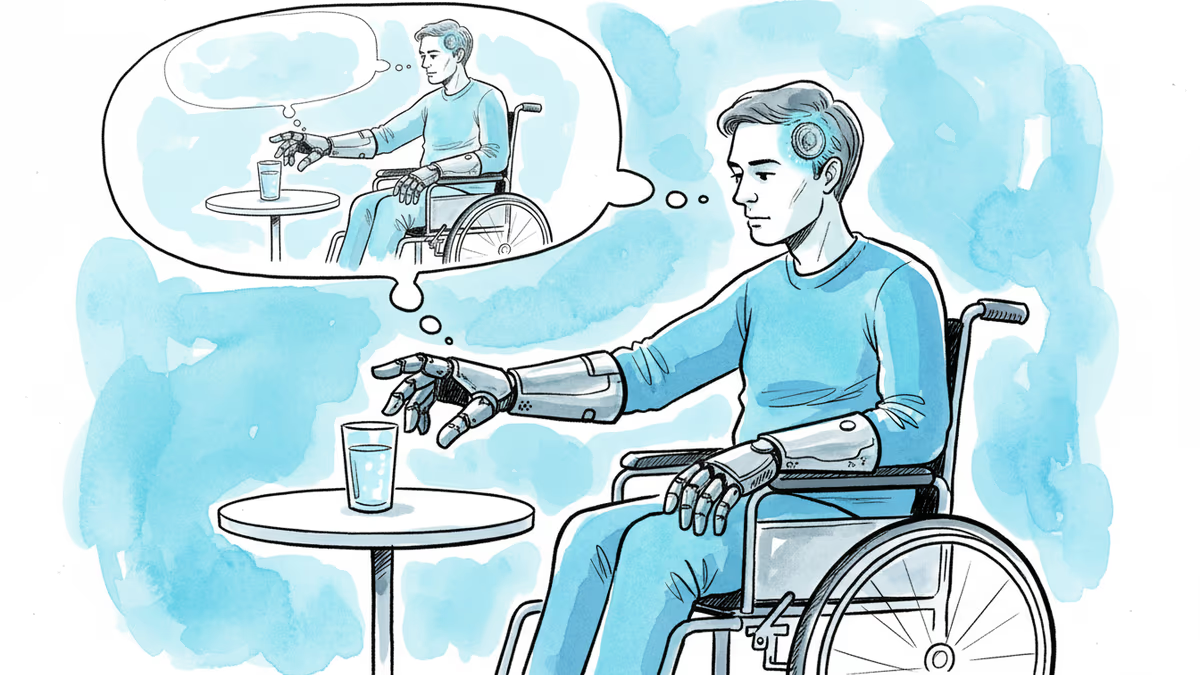

Your brain says 'no' with more than just words. While Brain-Computer Interface (BCI) technology has made major strides in decoding speech from neural signals, language is only half the story. Geena Ianni, a neuroscientist at the University of Pennsylvania, argues that the meaning of what we say is often shaped by a smirk or a frown, nuances that current technology cannot yet capture.

Advancing Brain-Computer Interface Facial Gestures via Macaque Studies

To bridge this gap, Ianni's team is looking at how the brain generates facial expressions. While neuroscience has a firm grip on how we perceive faces, the "generation" side remains a mystery. By studying macaques, social primates with facial musculature strikingly similar to that of humans, the researchers found that long-held assumptions about the brain's "division of labor" were wrong.

- Old Theory: Distinct regions for emotional vs. volitional (speech) movements.

- New Finding: Overlapping neural circuitry responsible for complex expressions.

The Future of Neural Prostheses

This research lays the groundwork for a new generation of neural decoders. For patients with stroke or paralysis, being able to project their facial gestures alongside synthetic speech could restore the emotional richness of their interactions. By mapping these movements down to individual neurons, the team is closer to building a prosthetic that feels truly human.

Related Articles

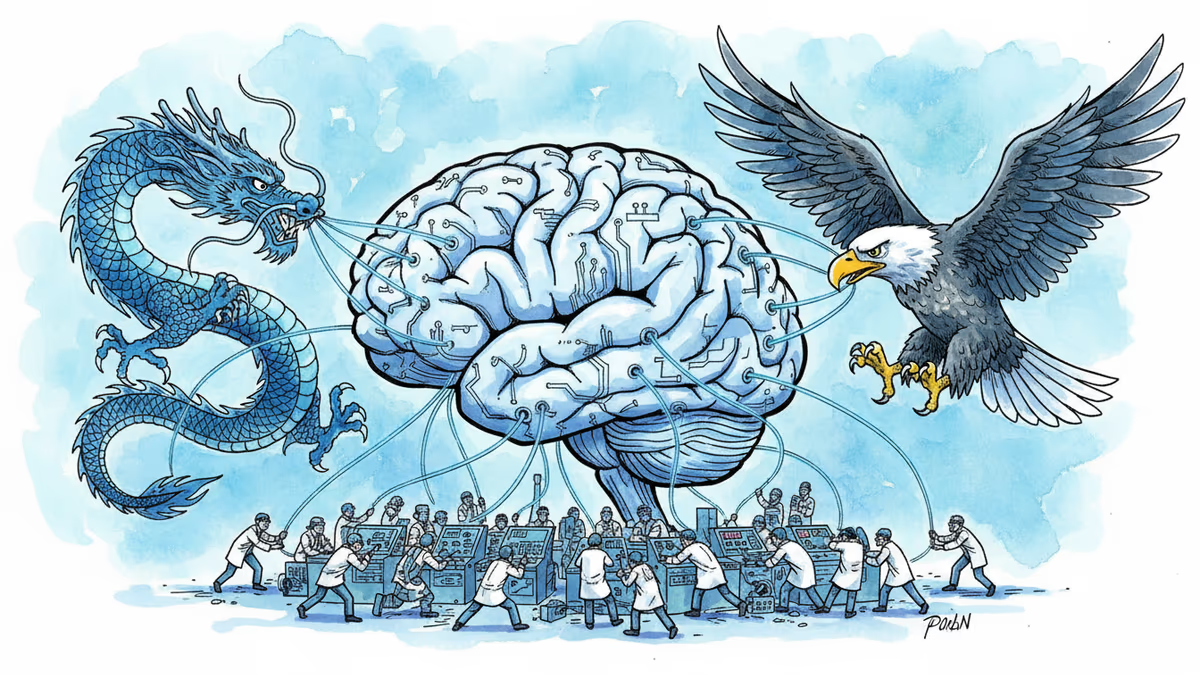

China's NEO brain implant is now a legally sold medical device — the world's first commercial BCI. What this means for Neuralink, global regulators, and the future of human-machine interfaces.

Max Hodak's Science Corp raises $230M for rice-grain sized chip that restores vision to blind patients. Could beat Neuralink as first BCI company to commercialize

China's BCI industry rapidly scales from research to commercialization with strong policy support, insurance coverage, and growing investment, challenging US leaders like Neuralink.

OpenAI has invested $252 million in Sam Altman's neurotech startup Merge Labs. Discover how they plan to use ultrasound and AI to link brains to computers without surgery.
