The Lawyer Who Asked AI to Interrogate a Surgeon

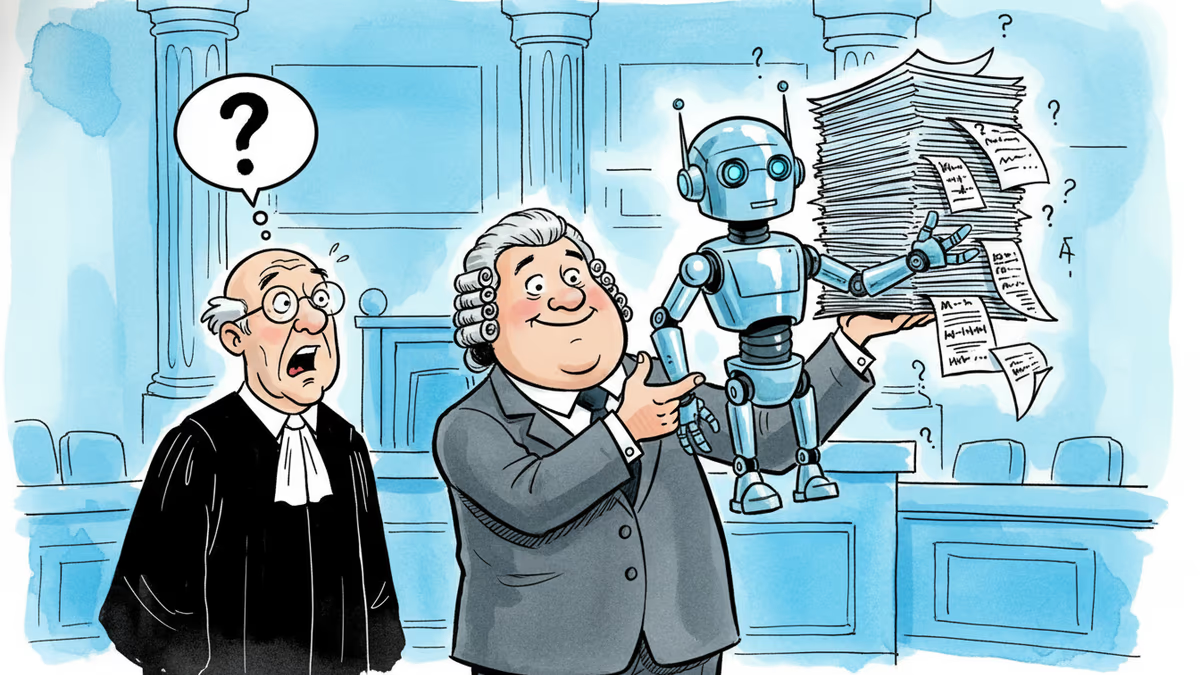

When a coroner refused an independent expert report after a cardiac patient's unexpected death, a barrister turned to AI. What that quiet decision reveals about law, medicine, and access to justice.

To cross-examine a surgeon, you first need to know what questions a surgeon would dread.

In spring 2024, a man in his mid-70s underwent complex cardiac surgery at a hospital in the Midlands. Two days later, he deteriorated unexpectedly and died. The cause was unclear. The hospital referred the case to the coroner's service — standard protocol — and the family, devastated and searching for answers, instructed clinical negligence barrister Anthony Searle to represent them.

Searle knew what he needed: probing, technically precise questions to put to the surgeons involved. But when the coroner declined his request for an independent expert report, he was left without his usual roadmap. So he turned to AI.

A Small Decision With Large Implications

This isn't a story about AI replacing lawyers. It's a story about what happens when a professional, facing a constraint, reaches for a new tool — and what that quiet, pragmatic choice signals about where an entire industry is heading.

In medical negligence cases, the architecture of expertise has always been the same: instruct a specialist, wait weeks or months for their report, then build your questions around their findings. It's thorough. It's also expensive and slow. In England and Wales, the average clinical negligence claim takes three to five years to resolve, and expert fees alone can run into the tens of thousands of pounds.

AI doesn't replace that process. But in Searle's case, it appears to have helped him work around a specific bottleneck, the absence of an expert report, and still arrive at medically informed questions. Whether those questions matched what a consultant cardiologist would have produced has not been made public. What matters is that a practising barrister thought it was worth trying, and found it useful enough to discuss publicly.

Who Benefits, and Who Bristles

For families like the one Searle represented, the implications are straightforward: lower barriers to meaningful legal challenge. Medical negligence cases have long been the preserve of those who can afford the front-loaded costs of expert opinion. If AI can compress that cost — even partially — it shifts the balance toward claimants who would otherwise be priced out.

For clinicians, the picture is more uncomfortable. Cardiac surgery is inherently high-risk. A patient dying two days post-operatively is not, by itself, evidence of negligence. The concern within medical circles is that AI-generated questions, however technically fluent, may lack the contextual judgment to distinguish between a tragic outcome and a preventable one. The British Medical Association has been pushing for clearer guidance on AI use in legal proceedings precisely because of this — the fear that sophisticated-sounding questions could subject surgeons to scrutiny that a human expert would have quickly dismissed as unfounded.

For the courts, the questions are procedural and philosophical at once. Does a barrister have a duty to disclose that a line of questioning was AI-assisted? Does it matter? English courts have not yet ruled on this. The Solicitors Regulation Authority updated its AI guidance in 2024, but that body regulates solicitors rather than barristers, and the specifics of courtroom use remain a grey area.

The Deeper Disruption

Zoom out, and this case sits inside a much larger shift. AI tools are already being used by lawyers in the US and UK to draft pleadings, summarise disclosure documents, and identify case precedents. Firms like Allen & Overy and Linklaters have rolled out internal AI platforms. Startups like Harvey and Clio are building tools specifically for smaller practices and solo practitioners.

But using AI to generate expert-level questions in a live legal proceeding — where the stakes are a family's understanding of why their father died — is a different kind of use case. It's not administrative. It's epistemic. It's asking AI not just to find information, but to reason about what a specialist would find significant.

That's the line that's quietly being crossed, in courtrooms and consulting rooms and boardrooms, without much public debate about where it leads.
