When Parents Get the Alert, Where Do Teens Go?

Instagram's new suicide search alerts for parents raise questions about whether surveillance-based solutions truly protect teens or simply push their struggles underground.

Every 90 Seconds, A Teen Attempts Suicide

In the United States, a teenager attempts suicide every 90 seconds. Instagram's new alert system, announced this week, is Meta's response to this crisis. When teens repeatedly search for suicide or self-harm terms, parents will now get notified.

But the more pressing questions lie elsewhere: What should parents do when they get that alert? And where will teens go when they realize they're being watched?

Uncomfortable Truths From the Courtroom

The timing of this announcement isn't coincidental. This week, in a California federal courthouse, Instagram head Adam Mosseri faced intense grilling from prosecutors over the platform's delayed rollout of basic safety features, including nudity filters for teen private messages.

Even more revealing was internal Meta research disclosed in a separate Los Angeles County lawsuit: parental supervision and controls had little impact on kids' compulsive social media use. The study found that children facing stressful life events were actually more likely to struggle with regulating their social media consumption.

Yet Meta's solution? More parental alerts.

Mental Health Experts Are Divided

The professional community's response has been mixed. Dr. Sarah Chen, an adolescent psychiatrist in San Francisco, sees potential: "Early intervention opportunities are crucial. Sometimes parents miss critical warning signs."

But teen advocates worry about unintended consequences. "Surveillance breaks trust," argues Maya Rodriguez, director of the Youth Mental Health Alliance. "When teens feel monitored, they don't seek help—they hide better."

The concern isn't theoretical. Research from the University of Washington found that 68% of teens who felt surveilled by parents were less likely to disclose mental health struggles, even when experiencing suicidal ideation.

The Platform Migration Problem

Here's what Meta's announcement doesn't address: platform switching. If Instagram becomes the "watched" platform, where do struggling teens go? TikTok? Snapchat? Discord? The dozens of smaller platforms that lack any safety infrastructure?

John Shehan, vice president of the National Center for Missing & Exploited Children, calls this the "whack-a-mole problem." "You can't solve a systemic issue by making one platform more restrictive. You just push the behavior elsewhere."

The alerts will initially roll out in the US, UK, Australia, and Canada next week, expanding to other regions later this year. But without industry-wide coordination, their effectiveness remains questionable.

What Parents Actually Need

The notification will include "resources designed to help parents approach conversations with their teen." But early previews suggest these are generic mental health resources, hardly the nuanced guidance parents need when confronting a child's suicidal ideation.

Dr. Lisa Damour, adolescent psychologist and author, points to the real gap: "Getting an alert is the easy part. Knowing how to respond without pushing your teen further away? That's where parents need actual support."

Meta consulted its Suicide and Self-Harm Advisory Group to set thresholds for alerts, aiming to avoid notification fatigue. But the company acknowledges it "may sometimes notify parents when there may not be a real cause for concern."

Authors

Related Articles

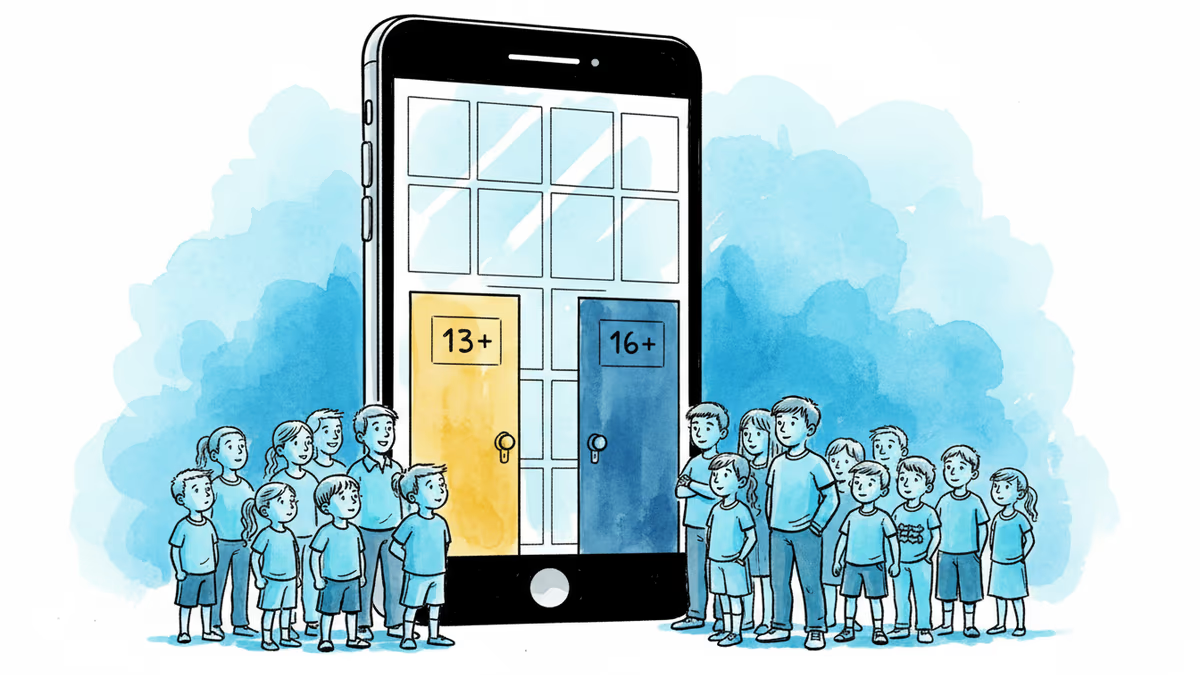

Indonesia introduces age-tiered social media restrictions, allowing 13+ for low-risk platforms and 16+ for high-risk ones. How does this compare to Australia's blanket ban?

From Australia's $34M fines to France's parliamentary vote, 10 countries are restricting teen social media access. A global experiment in digital parenting begins.

Middle East missile strikes trigger global doomscrolling epidemic. Neuroscience reveals why we can't stop scrolling through bad news and how to break free.

Governments worldwide are mandating social media age checks, but the technology is easily fooled, creating new privacy risks while failing to protect children effectively.
