Instagram Will Snitch on Your Teen's Dark Searches

Meta is introducing alerts to parents when teens search for self-harm content. The line between protection and privacy just got blurrier.

When Your Phone Becomes a Tattletale

Starting next week, Instagram will do something unprecedented: it'll alert parents when their teen repeatedly searches for suicide or self-harm content. This isn't just another safety feature—it's a fundamental shift in how we think about digital privacy and parental oversight.

Meta's new system will monitor search patterns and send alerts to parents, but only for families who've opted into supervision tools. It is rolling out first in the US, UK, Australia, and Canada, with plans to expand globally later this year. A similar alert system for AI chatbots is coming too.

The company says most teens don't search for this content. But when they do, and do it repeatedly within a short timeframe, parents get the alert.

The New Digital Dilemma

For decades, teenagers' private thoughts and struggles remained largely invisible to parents. Sure, there were warning signs, but the inner world of adolescent crisis often stayed hidden. Now, algorithms are changing that equation.

The feature raises immediate questions: Where do we draw the line? If Instagram alerts parents about self-harm searches, what about eating disorder content? Substance abuse? Sexual health questions? Once the surveillance door opens, it's hard to control what walks through.

Mental health professionals are divided. Some see early intervention opportunities. Others worry about teens losing their last private space to explore difficult topics safely.

Parents vs. Privacy: The Eternal Struggle

American families already struggle with screen time battles and social media boundaries. This feature adds a new dimension to that tension. Parents want to protect their kids. Teens want autonomy and privacy. Technology companies want to avoid liability.

The opt-in requirement is crucial here. Families must actively choose supervision, meaning both parents and teens theoretically consent to monitoring. But how many teens really understand what they're agreeing to? How many parents will pressure reluctant kids to opt in?

Consider the unintended consequences: teens might simply switch platforms, use incognito mode, or find other ways to seek help that don't trigger alerts. The most vulnerable kids might become even more isolated.

The Bigger Picture: Tech as Family Mediator

This isn't just about Instagram. It's about technology increasingly mediating family relationships. Apps now track our kids' locations, monitor their driving, and flag their mental health searches. We're outsourcing parental intuition to algorithms.

Regulators are watching closely. The EU's Digital Services Act and various state-level initiatives in the US are pushing platforms toward greater youth protection measures. Meta's move might be as much about proactive compliance as about genuine safety concerns.

Competitors like TikTok, Snapchat, and YouTube will likely face pressure to implement similar systems. The question isn't whether this trend will spread—it's how far it'll go.

Authors

Related Articles

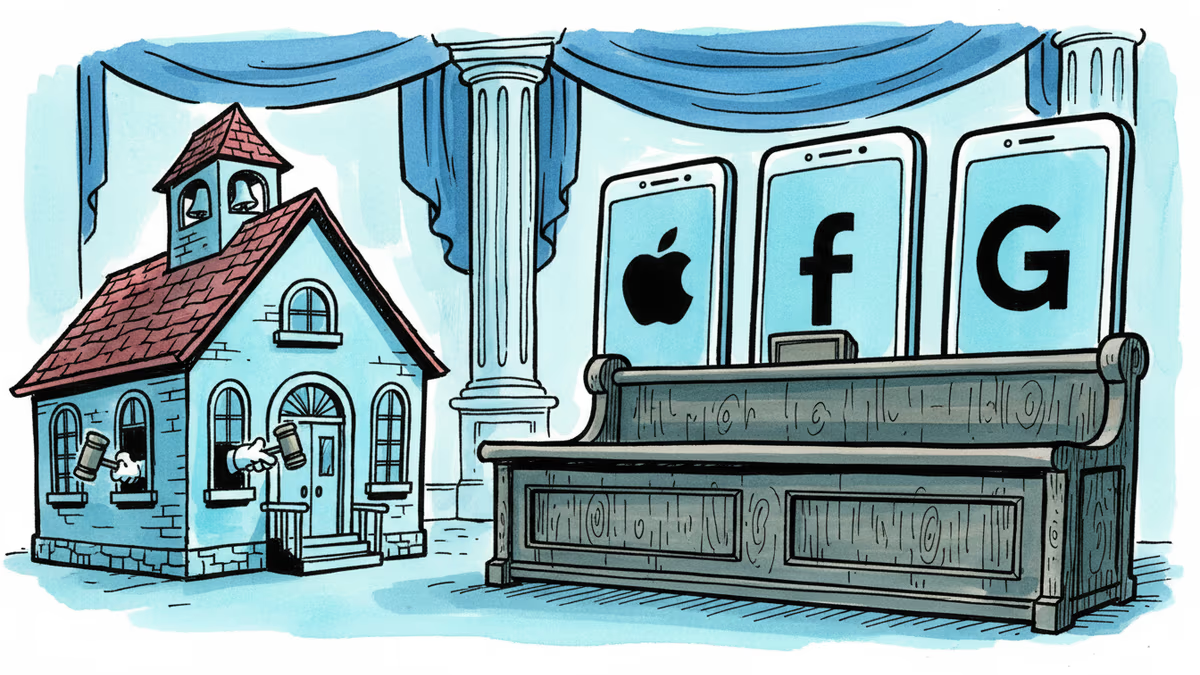

Snap, YouTube, and TikTok settled a landmark school district lawsuit over social media addiction. With 1,000+ similar cases pending, this could reshape Big Tech's liability landscape.

New Mexico already won $375 million from Meta. Now it wants something harder to give: a court order forcing Facebook, Instagram, and WhatsApp to redesign themselves. A three-week trial starts Monday.

Anthropic's tightly restricted Mythos AI—designed to find security flaws—was accessed by Discord sleuths without a single line of exploit code. Meanwhile, North Korean hackers used AI to steal $12M in three months. The security paradox of 2026.

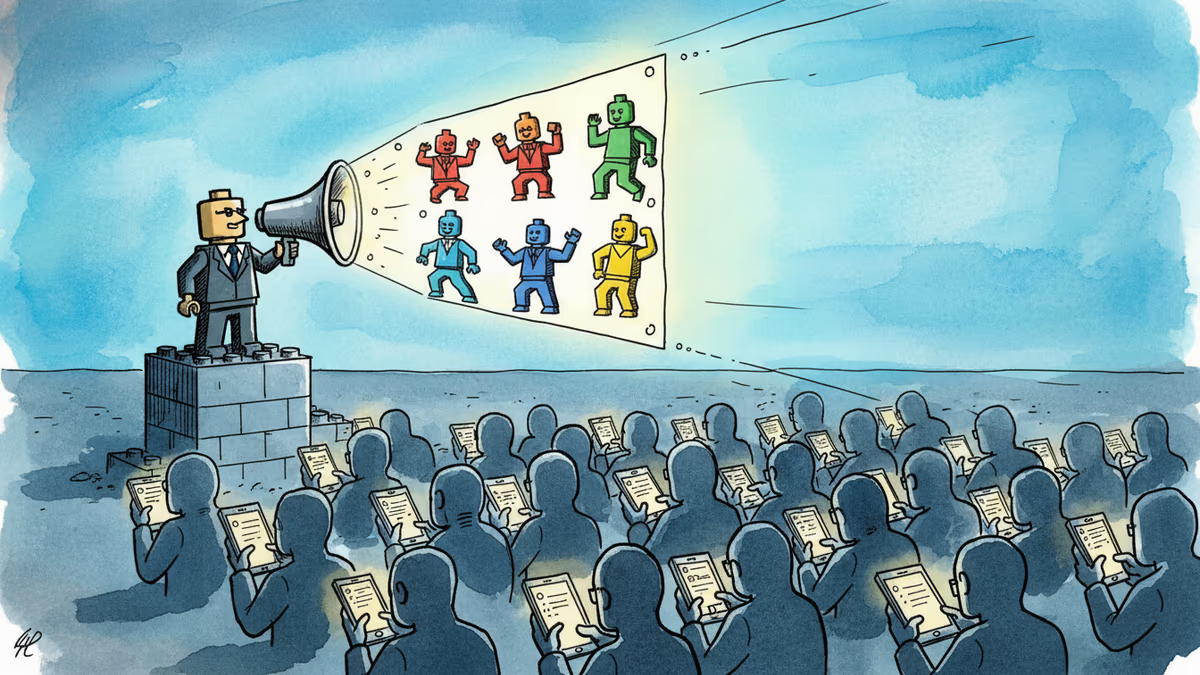

A small pro-Iran team is racking up millions of views with AI-generated Lego videos that mock Trump — and Americans are sharing them. What does that tell us about information warfare?
