When Robots Do Your Dishes, Who's Really Watching?

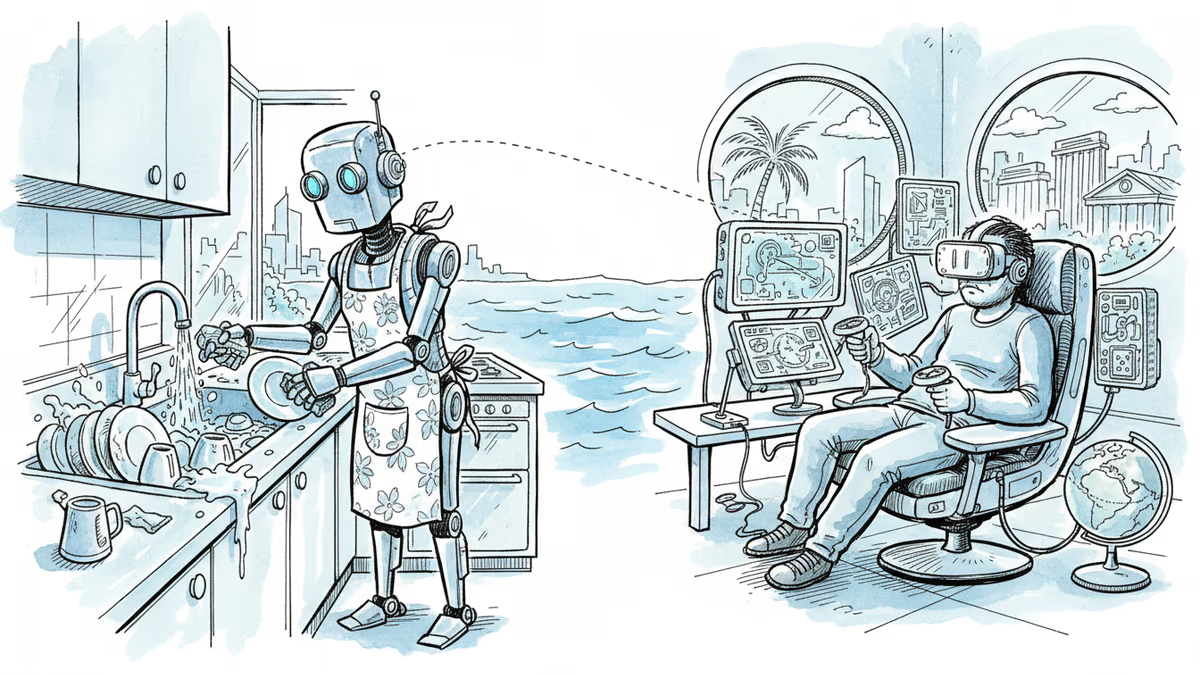

Behind the flashy demos of humanoid robots lie hidden human workers. Their work raises questions about new forms of labor and about privacy in the age of physical AI.

A worker in Shanghai spent an entire week wearing a VR headset and exoskeleton, opening and closing a microwave door hundreds of times a day. The robot beside him was learning every movement. This is the reality behind the era of "physical AI" proclaimed by Nvidia CEO Jensen Huang.

It's a far cry from the slick demos we see of humanoid robots gracefully putting away dishes or assembling cars. But it reveals something crucial: the human labor powering our robot future remains largely invisible.

The Hidden Workforce Behind Robot Demos

Consider Figure, a robotics company that announced a partnership last September with investment firm Brookfield, which manages 100,000 residential units. Their goal? Capturing "massive amounts" of real-world data "across a variety of household environments." Translation: your daily routines could become training data for the next generation of home robots.

Roboticist Aaron Prather recently worked with a delivery company that had workers wear motion-tracking sensors while moving boxes. The collected data will train robots to replicate those movements. "It's going to be weird," Prather admits. "No doubts about it."

This isn't just about data collection—it's about redefining work itself. Just as our words became training fodder for large language models, our physical movements are now following the same path. Except this time, humans might get an even worse deal.

Remote Control: The New Gig Economy

1X's $20,000 humanoid robot Neo is shipping to homes this year, but here's the catch: founder Bernt Øivind Børnich won't commit to any prescribed level of autonomy. When the robot gets stuck or faces a tricky task, a tele-operator at the company's Palo Alto headquarters will pilot it remotely, seeing through the robot's cameras as it irons clothes or unloads your dishwasher.

The company gets customer consent before switching to tele-operation mode, but let's be honest—privacy as we know it won't exist when remote operators are doing chores in your house through a robot. And if home humanoids aren't genuinely autonomous, we're looking at a new form of wage arbitrage that recreates gig work dynamics while, for the first time, allowing physical tasks to be performed wherever labor is cheapest.

The Tesla Precedent

We've seen this movie before. When Tesla marketed its driver-assistance software as "Autopilot," it inflated public expectations about what the system could safely do. A Miami jury recently found that this distortion contributed to a crash that killed a 22-year-old woman, and Tesla was ordered to pay $240 million in damages.

The lesson carries over: invisible human workforces don't mean AI is vaporware, but when those workers stay hidden, the public consistently overestimates what the machines can actually do. That's great for investors and hype, but it has real consequences for everyone else.

The Regulatory Blind Spot

Robotics companies remain as opaque about training and tele-operation as AI firms are about their training data. This opacity matters more than ever as physical AI moves into our workplaces, homes, and public spaces. If Huang is right about the coming robot revolution, how we describe and scrutinize this technology will shape how safely and fairly it's deployed.

Yet current AI regulations barely address the physical realm. The EU's AI Act focuses primarily on algorithmic decision-making, not the human labor that makes those decisions possible. In the US, workplace safety regulations haven't caught up to scenarios where workers wear exoskeletons to train their robot replacements.
