The Great AI Migration: Why Users Are Fleeing ChatGPT for Claude

Anthropic's refusal to work with Pentagon surveillance sparks user exodus from ChatGPT. Daily signups hit record highs as Claude tops App Store charts.

60% Surge in Signups: When Ethics Moves Markets

AI users are voting with their feet, and with their data. Claude has climbed to the top of Apple's US App Store free app rankings, dethroning ChatGPT in a dramatic reversal. Anthropic reports record daily signups, with free users up more than 60% since January and paid subscribers more than doubling this year.

The catalyst? A stark ethical divide. Anthropic refused to let the Department of Defense use its AI models for mass domestic surveillance or fully autonomous weapons. Meanwhile, OpenAI signed a Pentagon deal, claiming "safeguards" that have convinced few critics. President Trump's order to designate Anthropic a supply-chain threat only amplified the controversy.

The Great Data Migration: Easier Than You Think

Switching AI assistants doesn't mean starting from scratch. ChatGPT users can copy their stored preferences from Settings → Personalization → Memory, or download their complete chat histories in text or JSON format via Settings → Data Controls → "Export Data".

Transferring to Claude is straightforward once you enable Memory (Pro plan required). Start a new conversation with prompts like "Here's important context I'd like you to remember" and paste your data. Pro tip: don't dump raw chat logs—ask Claude to "review this and summarize my key preferences" for better integration.
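For readers comfortable with a little scripting, one way to avoid pasting raw logs is to condense the JSON export first. The sketch below is illustrative only: it assumes the export is a list of conversations, each with a `title` and a `mapping` of nodes whose `message` holds `author.role` and `content.parts`. That layout, the function name `collect_user_messages`, and the filename `conversations.json` are all assumptions, so check them against your own export file.

```python
import json
from pathlib import Path


def collect_user_messages(conversations, max_chars=4000):
    """Gather the user's own messages into one text block, capped at max_chars."""
    lines = []
    for conv in conversations:
        title = conv.get("title") or "Untitled"
        # Assumed layout: "mapping" maps node ids to {"message": {...}} entries.
        for node in (conv.get("mapping") or {}).values():
            msg = (node or {}).get("message") or {}
            # Keep only what the user wrote; skip assistant and system turns.
            if (msg.get("author") or {}).get("role") != "user":
                continue
            parts = (msg.get("content") or {}).get("parts") or []
            text = " ".join(
                p.strip() for p in parts if isinstance(p, str) and p.strip()
            )
            if text:
                lines.append(f"[{title}] {text}")
    return "\n".join(lines)[:max_chars]


if __name__ == "__main__":
    # Hypothetical filename; use whatever file your export actually contains.
    export_path = Path("conversations.json")
    if export_path.exists():
        data = json.loads(export_path.read_text(encoding="utf-8"))
        print(collect_user_messages(data))
```

The output can then be pasted into a new Claude conversation after a prompt such as "review this and summarize my key preferences". The `max_chars` cap is an arbitrary choice to keep the pasted text short.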

Breaking Up Is Hard to Do: The Complete Deletion Guide

Canceling your subscription isn't enough for a clean break. To permanently delete your ChatGPT account, first purge stored data through Settings → Personalization → Memory. Send a final chat command: "Delete all my memory and personalized data." Then navigate to account management settings for complete account deletion.

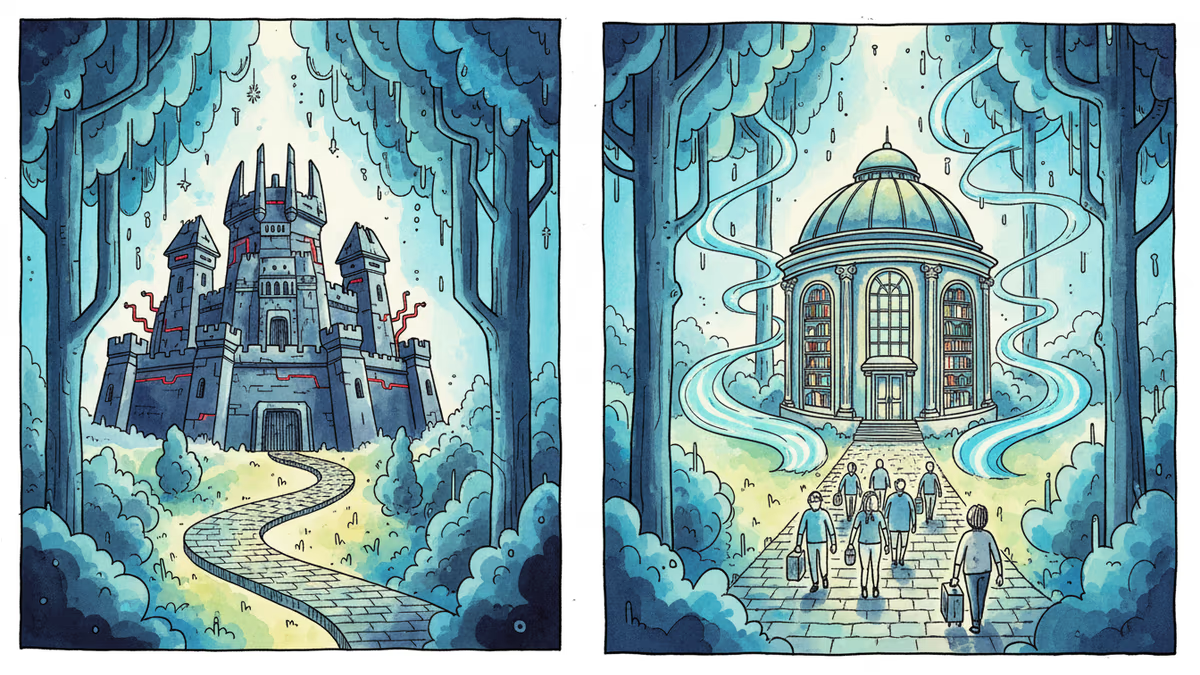

The Pentagon Papers of AI

This migration reflects deeper tensions about AI's role in society. Anthropic's stance resonates with users increasingly concerned about surveillance capitalism and military applications of AI. The company's "Constitutional AI" approach—training models with explicit ethical principles—offers an alternative to OpenAI's more commercially aggressive strategy.

But the divide isn't just philosophical. It's business. OpenAI's Pentagon contract could unlock billions in government revenue, while Anthropic's principled stance may limit growth but builds user trust. The question is which approach proves more sustainable.

Beyond the Binary Choice

The ChatGPT-to-Claude migration isn't just about two companies—it's about what kind of AI future we're building. Other players are watching closely. Google's Gemini faces similar ethical questions, while smaller AI companies are positioning themselves as "ethical alternatives."

For businesses, the choice involves more than features and pricing. It's about brand alignment, regulatory risk, and employee values. As AI becomes more integrated into daily workflows, the ethical stance of your AI provider becomes part of your corporate identity.

