Your Writing Coach Just Got Replaced by a Dead Professor

Grammarly's AI feature attributes writing advice to deceased academics and living experts who never gave permission, sparking privacy and consent concerns in the AI age.

When Your Boss Becomes an AI Writing Coach

A journalist testing Grammarly's new "expert review" feature got more than they bargained for. The AI-powered tool claimed to offer writing advice "inspired by" their own editor-in-chief, along with several colleagues. There was just one problem: none of them had given permission.

Launched in August, the feature promises to deliver personalized feedback from subject matter experts across various fields. But as Wired reported and The Verge confirmed through testing, many of these "experts" include recently deceased professors and living professionals who never consented to their expertise being digitized and commercialized.

The implications go far beyond a simple privacy breach. This represents a fundamental shift in how AI companies view intellectual property, professional reputation, and the commoditization of human expertise.

The Consent Crisis in AI Development

The Verge's testing revealed AI-generated feedback attributed to editor-in-chief Nilay Patel and several senior editors, including David Pierce, Sean Hollister, and Tom Warren. None had authorized Grammarly to use their names or expertise.

This isn't an isolated incident of poor data hygiene. It appears to be a deliberate strategy: analyze public writing samples from recognized experts, train AI models on their style and knowledge, then present the output as if these experts personally reviewed your work.

The practice becomes even more ethically murky when applied to deceased academics. Using someone's life work to train commercial AI systems without family consent raises questions about digital estate rights and posthumous intellectual property protection.

Legal and Professional Liability Concerns

Beyond the obvious consent issues, this practice creates a web of potential legal problems. When AI generates advice under a real person's name, who bears responsibility for the consequences?

Consider a scenario where Grammarly's AI, speaking as a renowned journalism professor, advises a student to use a particular reporting technique that later leads to legal trouble. The professor's reputation suffers despite never providing that specific guidance.

Professional liability insurance may not cover AI-generated advice given under someone else's name. This creates a gap where real experts could face reputational or legal consequences for advice they never actually gave.

The Broader AI Attribution Problem

This case highlights a growing tension in AI development. Companies argue they're democratizing expertise, making high-quality advice accessible to everyone. Critics counter that this is sophisticated identity theft dressed up as innovation.

The practice reflects how AI companies increasingly view public content as free training data, regardless of the creators' intentions or rights. Every published article, academic paper, or professional blog post becomes potential fodder for commercial AI systems.

This approach fundamentally misunderstands the nature of expertise. True professional advice isn't just pattern matching from previous work. It involves real-time assessment, contextual understanding, and professional judgment that current AI systems can't replicate.

What This Means for Users and Professionals

For users, the immediate concern is transparency. How can you evaluate advice when you don't know its true source? Grammarly markets this as expert guidance, but it appears to be pattern matching on published writing, not a review by the named expert.

For professionals, the implications are more serious. Your professional reputation could be tied to AI-generated advice you never provided. Your expertise becomes a product sold without your knowledge or consent.

The precedent this sets is troubling. If companies can freely appropriate professional identities for AI training, what stops them from creating AI versions of doctors, lawyers, or financial advisors without consent?

The answer may determine whether AI becomes a tool that amplifies human expertise or one that replaces it entirely, with or without permission.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

Amazon's fresh $5B investment in Anthropic brings its total to $13B. But the real story is a $100B AWS spending pledge and a bet on Amazon's own AI chips over Nvidia.

Memory makers can't build fabs fast enough. By end of 2027, supply will cover just 60% of demand. Here's why the shortage could last until 2030—and what it means for AI, your devices, and the chip industry.

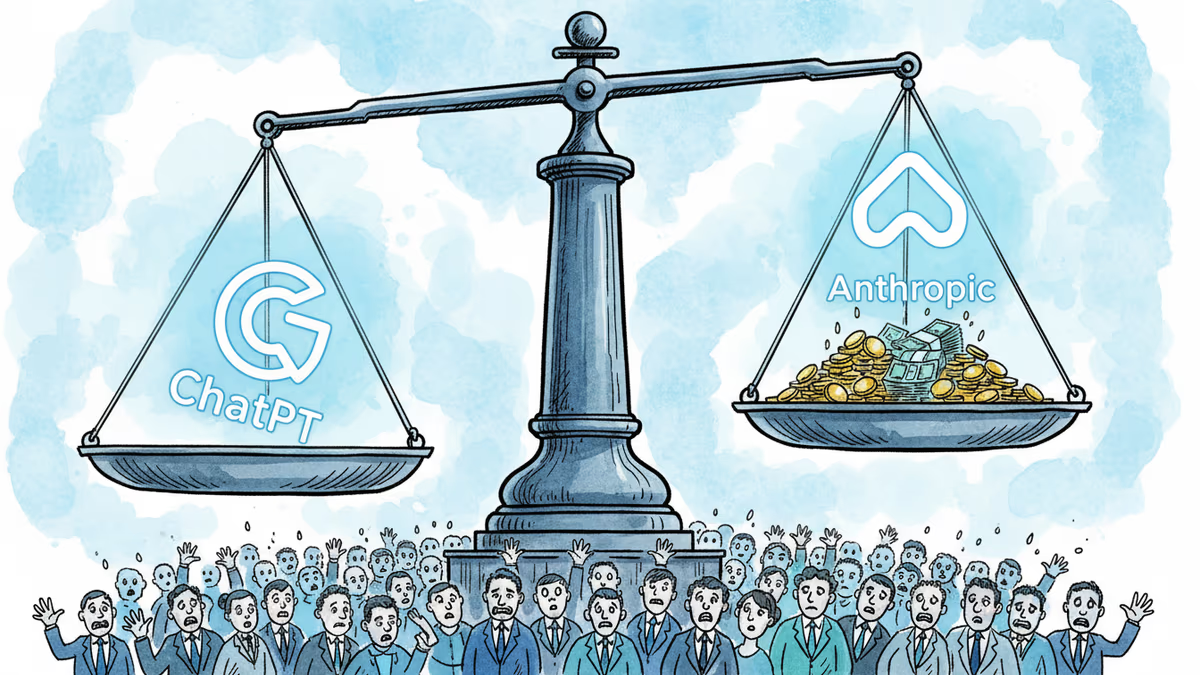

OpenAI's $852B valuation is drawing skepticism from its own backers as Anthropic's ARR tripled in three months. The secondary market is already voting with its feet.

Machine-translated junk is flooding minority-language Wikipedia pages. AI learns from that junk. The result could accelerate the extinction of thousands of languages.

Thoughts

Share your thoughts on this article

Sign in to join the conversation