AI Resurrects Dead Authors to Grade Your Writing (Without Permission)

Grammarly's new Expert Review feature uses AI versions of Stephen King, Carl Sagan, and other writers to provide feedback, but none of them agreed to this digital afterlife.

What Happens When AI Puts Words in Dead Authors' Mouths?

Imagine getting writing feedback from Stephen King or Carl Sagan—except they never actually read your work. That's exactly what Grammarly's new "Expert Review" feature offers: AI-powered critiques from famous writers and scholars, both living and dead, none of whom consented to this digital resurrection.

The writing tool, used by over 200 million people globally, has quietly transformed from a simple grammar checker into something far more ambitious—and ethically questionable. Users can now request feedback from AI versions of literary giants like William Zinsser or scientists like Neil deGrasse Tyson, with the platform cheerfully noting it's taking "inspiration" from their life's work.

The Fine Print That Changes Everything

Buried in the disclaimers, Grammarly admits these "experts" have no actual involvement: "References to experts in this product are for informational purposes only and do not indicate any affiliation with Grammarly or endorsement by those individuals or entities."

But the damage may already be done. When WIRED tested the feature, it found that AI agents based on recently deceased academics, including historian David Abulafia, who died in January 2025, were still offering advice. The system casually suggested feedback from the late sociologist Pierre Bourdieu and Gone with the Wind author Margaret Mitchell, treating their intellectual legacy as raw material for algorithmic processing.

Academic Outrage Meets Silicon Valley Indifference

Vanessa Heggie, an associate professor at the University of Birmingham, called the practice "obscene" on LinkedIn, accusing the company of "creating little LLMs" from the "scraped work" of scholars. Yale postdoctoral fellow C.E. Aubin was even more direct: "These are not expert reviews, because there are no 'experts' involved in producing them."

The criticism touches a nerve in academia, where humanities scholars already face budget cuts and questions about their relevance. Now they're watching their life's work get compressed into chatbots that can supposedly replace their expertise.

The Cheating Paradox

For students, this creates a perfect storm of confusion. They're already navigating murky waters around AI-assisted writing, with teachers struggling to distinguish between legitimate tool use and academic dishonesty. Now Grammarly offers the illusion of expert validation—a digital stamp of approval from intellectual giants.

But here's the twist: Grammarly's own plagiarism detector failed to catch a direct quote from The Simpsons during testing, while flagging common AI-generated phrases. If the tool can't reliably detect copying, how trustworthy are its "expert" evaluations?

The Bigger Question Silicon Valley Won't Answer

This isn't just about Grammarly. It's about a fundamental shift in how tech companies view intellectual property and human expertise. When CEO Shishir Mehrotra rebranded the company as "Superhuman," he promised technology that "starts to feel ordinary"—but at what cost to the actual humans whose work powers these systems?

Related Articles

Viral videos show 2026 graduates jeering executives who praise AI at commencement ceremonies. It's not just rudeness — it's a signal about who pays for technological optimism.

Filipino virtual assistants using AI to ghost-manage LinkedIn profiles for executives is now a structured industry. 30 comments a day, fake engagement rings, and a platform struggling to tell real from fabricated.

Two commencement speakers learned the hard way that AI enthusiasm doesn't land well with today's graduates. The backlash reveals a widening gap between tech optimism and Gen Z's economic reality.

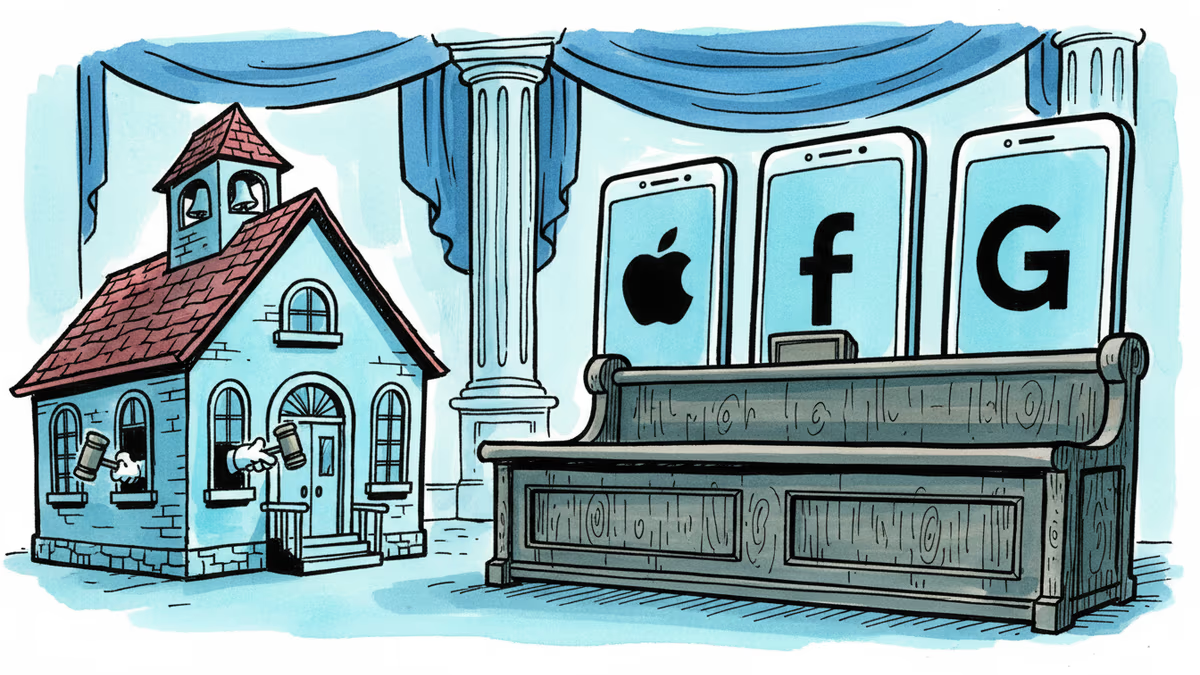

Snap, YouTube, and TikTok settled a landmark school district lawsuit over social media addiction. With 1,000+ similar cases pending, this could reshape Big Tech's liability landscape.
