FTC Says It's OK to Collect Kids' Data—With a Catch

Federal Trade Commission announces enforcement pause on children's privacy law for websites using age verification tech. Child protection vs privacy rights debate intensifies.

The 25-Year Rule Just Changed

The Federal Trade Commission just announced it won't enforce America's primary children's privacy law against websites that collect minors' personal data—if they're using it for age verification. It's a stunning reversal for an agency that's spent decades protecting kids online.

Since 1998, the Children's Online Privacy Protection Act (COPPA) has been the digital world's equivalent of "stranger danger." No collecting names, addresses, or even cookies from kids under 13 without verifiable parental consent. Now the FTC is essentially saying: collect the data first, as long as it's used to verify age.

"Age verification technologies are some of the most child-protective technologies to emerge in decades," said Christopher Mufarrige, director of the Bureau of Consumer Protection. But critics aren't buying it.

The Privacy Paradox Gets Personal

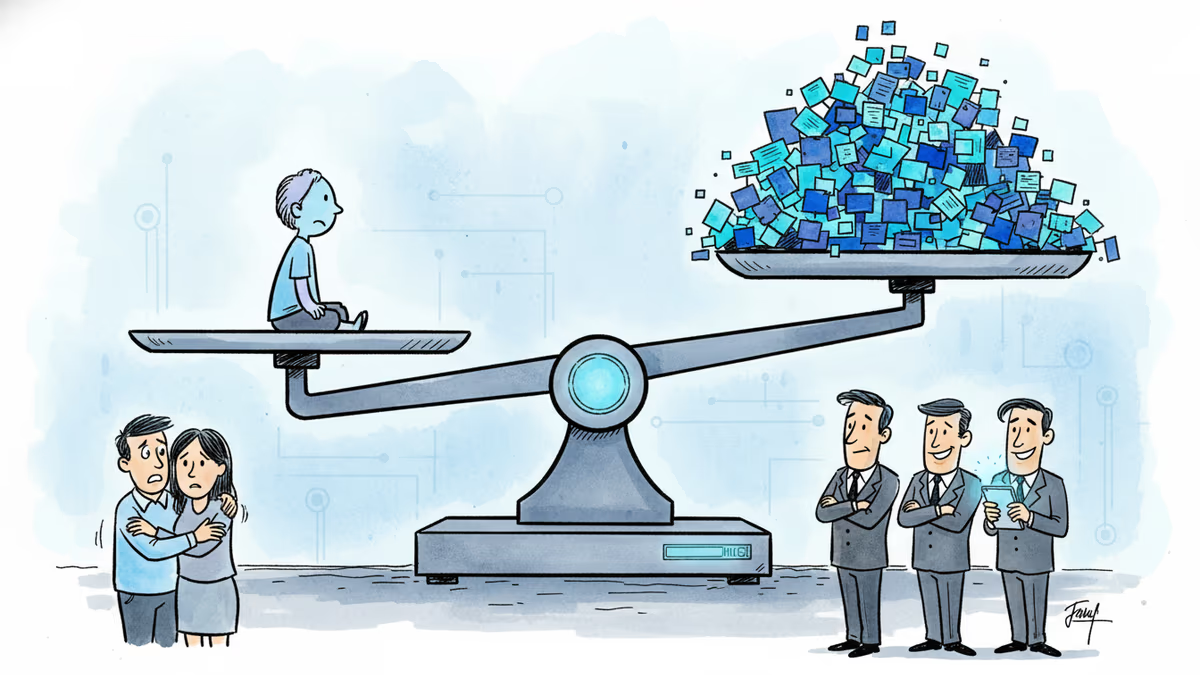

Here's the uncomfortable truth: protecting children online requires knowing who they are. Age verification systems need birthdates, government IDs, sometimes even biometric data. We're asking kids to surrender more personal information than ever—all in the name of protecting their privacy.

It's like requiring a blood test to prove you're healthy enough to avoid medical surveillance.

Meta, Google, and other tech giants are celebrating. They've been pushing for this kind of regulatory flexibility for years, arguing that rigid privacy rules actually make children less safe. A YouTube spokesperson called it "a balanced approach that prioritizes both innovation and protection."

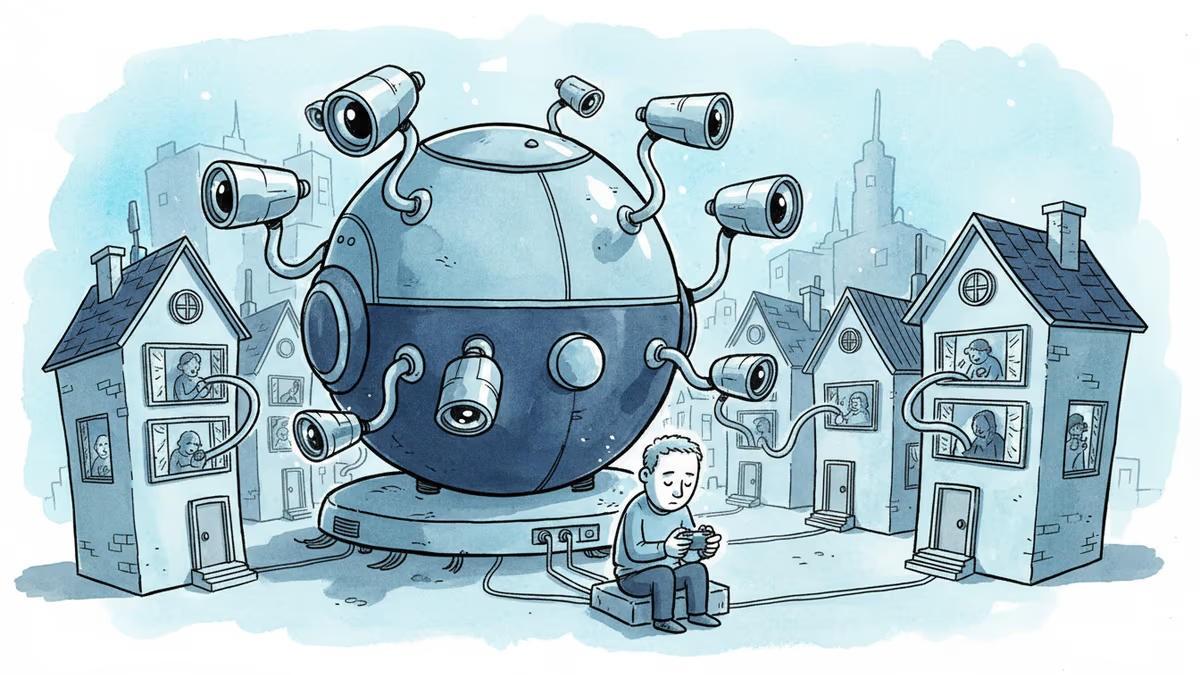

But privacy advocates are sounding alarms. The Electronic Frontier Foundation warns this could create a "surveillance infrastructure" that follows children into adulthood. Once collected, that verification data doesn't just disappear.

Parents Caught in the Middle

Sarah Chen, a mother of two from Portland, captures the dilemma perfectly: "I want my 10-year-old protected from inappropriate content, but I also don't want tech companies building a dossier on her before she's old enough to understand what that means."

The parental consent process under COPPA was already broken. Studies show fewer than 3% of parents actually read privacy policies before clicking "agree." Now they're being asked to navigate an even more complex landscape of age verification technologies they barely understand.

Meanwhile, educators worry about the classroom implications. If age verification becomes standard, will schools need to collect biometric data from students just to access educational platforms? The line between protection and surveillance keeps blurring.

The Global Domino Effect

This isn't just an American story. The EU's Digital Services Act already requires platforms accessible to minors to take "appropriate and proportionate" measures to protect them, and age verification is one of the tools regulators point to. The UK's Online Safety Act goes even further, potentially requiring age checks for all users, not just children.

But here's what regulators aren't saying: current age verification technology is only 85-90% accurate. That 10-15% error rate means millions of children could slip through the cracks, or adults could face unnecessary restrictions.

Yoti, a leading age verification company, admits its facial analysis can be fooled by makeup, lighting, or even a good night's sleep. Jumio relies on document scanning that struggles with international IDs. The technology simply isn't ready for the responsibility we're placing on it.

The Business of Childhood

Follow the money, and the FTC's decision makes more sense. The global age verification market is projected to reach $16.9 billion by 2027. Companies like Aristotle and LexisNexis are pivoting from adult identity verification to child protection—a more palatable sell to privacy-conscious consumers.

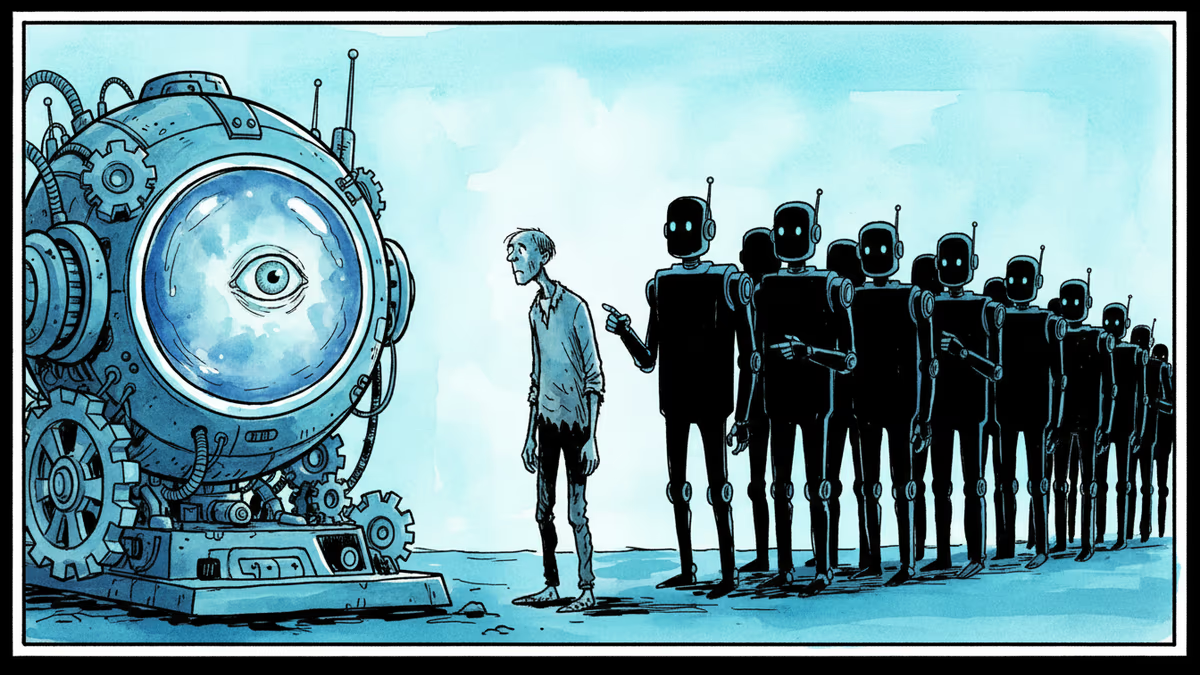

But there's a darker calculation at play. Age verification systems that store what they collect create what privacy experts call "honeypots": centralized databases of verified personal information that become prime targets for hackers and authoritarian governments.

China already uses similar systems for social control. What happens when that technology becomes standard in democratic societies?

The FTC's decision might protect some children, but it also normalizes a future where privacy is the price of participation in digital life—starting from birth.
