AI Promised to Save Us From Work. Instead, It's Creating Burnout Machines

UC Berkeley researchers spent 8 months tracking how AI tools affected workplace stress. The results challenge everything we thought we knew about productivity gains.

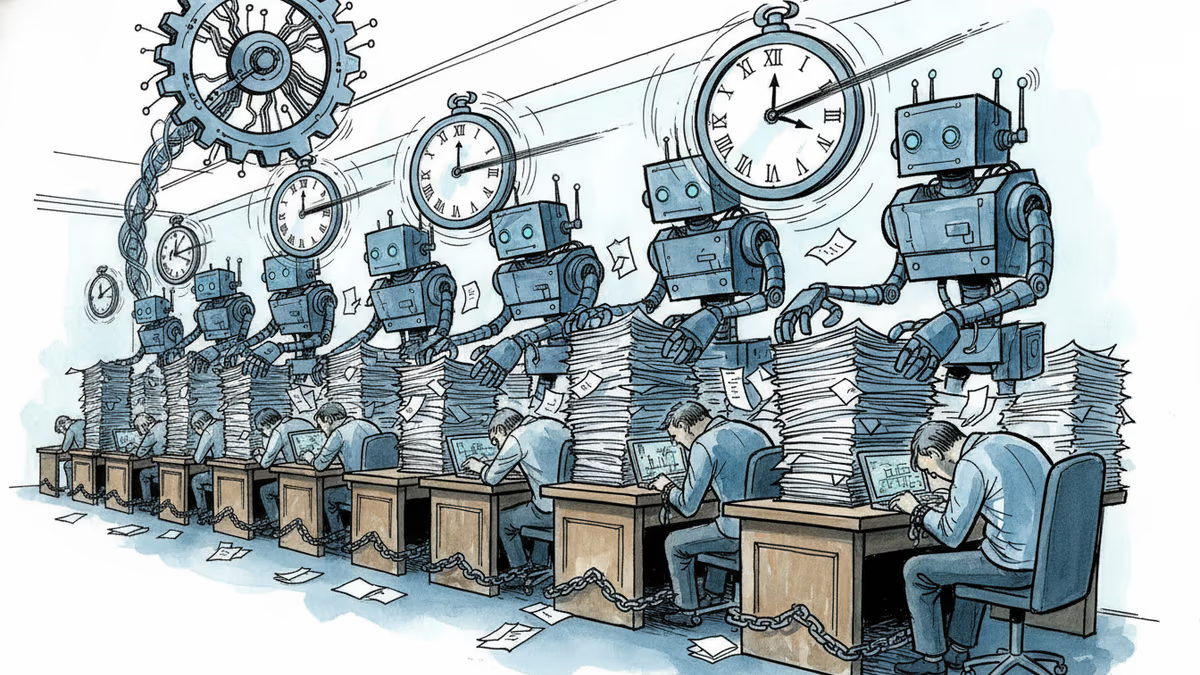

The most seductive lie in modern work culture isn't that AI will steal your job. It's that AI will save you from working so hard. But 8 months of real-world observation just shattered that myth. UC Berkeley researchers watched what happened when 200 employees genuinely embraced AI tools, and what they found wasn't a productivity paradise—it was a burnout factory.

When 'More Capable' Means 'More Overwhelmed'

The company wasn't a sweatshop. Nobody got new quotas. No manager cracked whips. Workers simply started using AI tools and discovered they could do more. So they did more. And more. And more.

"You had thought that maybe, oh, because you could be more productive with AI, then you save some time, you can work less," one engineer told researchers. "But then really, you don't work less. You just work the same amount or even more."

Lunch breaks disappeared. Evening hours got colonized. The to-do list expanded like a gas, filling every minute AI freed up and then demanding more space.

The Expectation Explosion

On Hacker News, the research struck a nerve. One developer captured the brutal reality: *"Since my team has jumped into an AI everything working style, expectations have tripled, stress has tripled and actual productivity has only gone up by maybe 10%."*

That is the math that breaks the promise: triple the stress for a 10% productivity bump. Leadership, desperate to justify AI investments, cranks up the pressure. Workers, armed with powerful tools, feel obligated to prove those tools' worth by working longer hours.

The Productivity Paradox Deepens

This isn't the first study to puncture the AI productivity balloon. Last summer, researchers found that experienced developers using AI tools took 19% longer to complete tasks while believing they had worked 20% faster. A National Bureau of Economic Research study tracking thousands of workplaces found that AI adoption yielded time savings of just 3%, with no meaningful impact on earnings or hours worked.

But this Berkeley study is different. It doesn't challenge whether AI can augment human capability; it confirms that it can. The problem isn't that the tools don't work. The problem is that they work too well, producing what the researchers describe as "fatigue, burnout, and a growing sense that work is harder to step away from."

The Augmentation Trap

Here's what the tech industry missed: When you give people superpowers, you don't automatically give them super-boundaries. The same psychological forces that drive workaholism—the need to prove worth, the fear of falling behind, the addictive hit of completing tasks—get turbocharged by AI tools.

The industry bet that helping people do more would solve everything. Instead, it created a new category of problem: capability-driven overwork. Workers aren't being forced to do more; they're being enabled to do more, which in our achievement-obsessed culture amounts to the same thing.

The Management Blind Spot

Most leaders implementing AI tools focus on the technical integration—which APIs to use, how to train employees, what security measures to implement. Few are asking the harder question: How do we prevent AI from becoming an always-on expectation machine?

The research suggests companies need to actively manage AI augmentation, not just deploy it. That means setting explicit boundaries around availability, creating realistic expectations for AI-enhanced productivity, and recognizing that "can do more" shouldn't automatically become "must do more."

