When AI Steals Your Voice, Who's Really Listening?

A radio host is suing Google over AI voice similarities, raising questions about consent, ownership, and the future of synthetic speech technology.

The Voice That Launched a Lawsuit

David Greene spent 15 years as the distinctive voice of NPR's Morning Edition. So when he tried Google's NotebookLM AI tool recently, he was stunned. The AI-generated podcast voice sounded eerily similar to his own—complete with his particular cadence and vocal quirks.

Greene is now suing Google, claiming the company used his voice as training data without consent. Google maintains it never intended to mimic any specific individual, but Greene argues the similarities are too precise to be coincidental. "It's not just similar," he says. "It's my vocal DNA."

This isn't an isolated incident. Scarlett Johansson publicly criticized OpenAI for using a voice suspiciously similar to hers, and voice actors are filing class-action suits across the industry.

The Consent Conundrum

Here's where it gets complicated: Greene's NPR broadcasts were publicly available. Does that make them fair game for AI training? Google argues it used only publicly accessible data, but legal experts say public availability doesn't equal consent for commercial AI development.

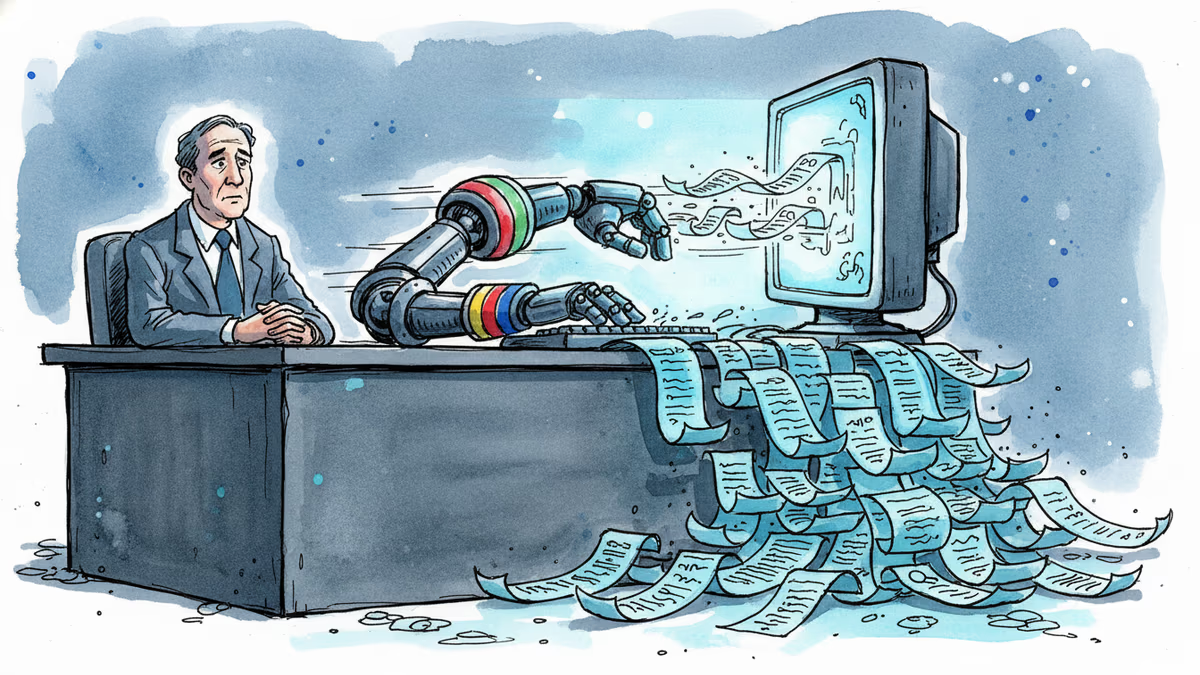

The distinction matters enormously. If every public recording becomes potential AI training material, voice professionals—from actors to podcasters—could find their livelihoods digitally replicated without compensation or control.

Yet AI companies would face an impossible standard if they needed explicit consent for every voice sample. Natural-sounding AI requires massive datasets, and collecting individual permissions at that scale would make the technology practically impossible to develop.

Beyond the Courtroom: What's Really at Stake

This case represents a broader tension between innovation and individual rights. Voice cloning technology has legitimate uses—helping ALS patients like musician Patrick Darling sing again, or preserving the voices of loved ones.

But the same technology raises uncomfortable questions. If AI can replicate voices from minimal samples, what happens to voice actors, audiobook narrators, or radio hosts? More troubling: What about deepfake audio for fraud or misinformation?

The industry is scrambling for solutions. Some companies are developing "voice watermarking" to identify synthetic speech. Others are creating opt-out databases. But these technical fixes don't address the fundamental question: Who owns your voice?
