The $20 Million Question: Why AI Giants Are Buying Political Influence

Anthropic and OpenAI are pouring millions into opposing political campaigns over a single AI safety bill. What this proxy war reveals about the industry's future.

$100 Million in Political Firepower Over One Little-Known Politician

Alex Bores wasn't supposed to be the center of Silicon Valley's biggest political fight. The New York Assembly member's congressional bid should have been a quiet local race. Instead, he's become ground zero for a $100+ million proxy war between AI's biggest players.

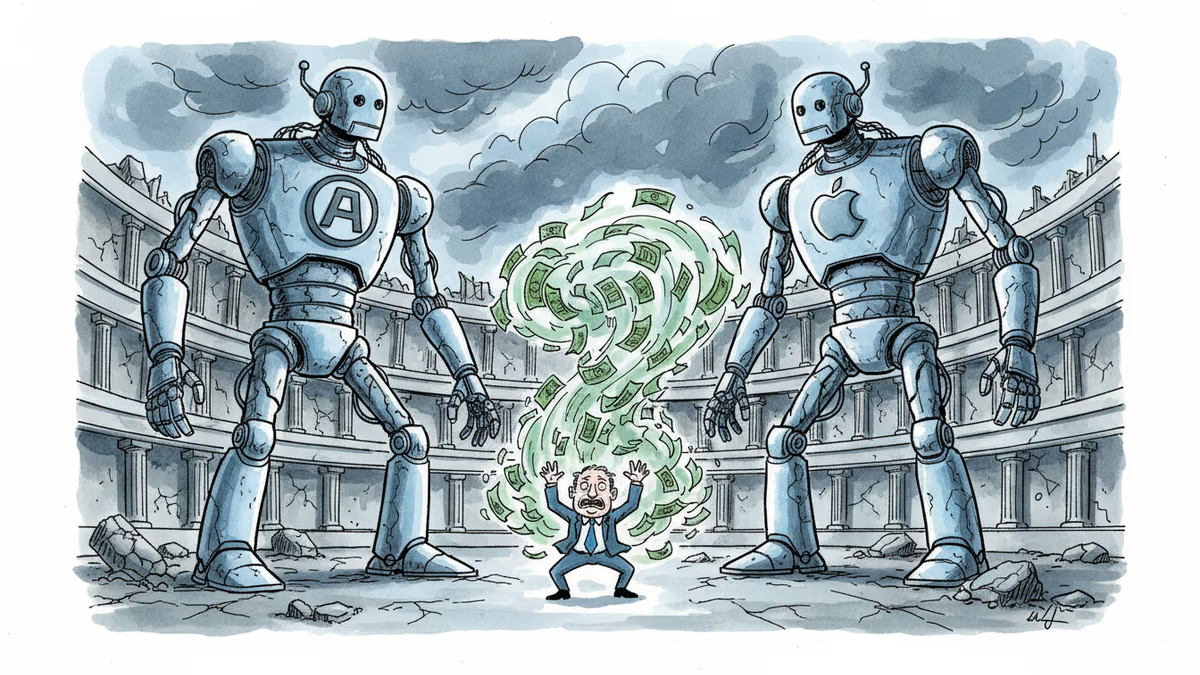

On one side: a political group backed by figures tied to OpenAI, Andreessen Horowitz, and Palantir, spending $1.1 million to attack him. On the other: Anthropic, which has put $20 million behind a group now defending him. The weapon of choice? A single piece of legislation called the RAISE Act.

Two Visions of AI's Future Collide

The RAISE Act seems straightforward: require major AI developers to disclose their safety protocols and report serious misuse. But it has split the industry into two camps with fundamentally different philosophies.

Leading the Future, backed by OpenAI's ecosystem, frames the fight as innovation vs. bureaucracy. Its $1.1 million ad blitz paints Bores as a "job-killing regulator" who doesn't understand technology's potential.

Public First Action, Anthropic's vehicle, counters with $450,000 promoting "responsible innovation." Same industry, opposite worldviews.

The Regulatory Chess Game

What's fascinating isn't the money; it's the strategy. Both sides claim to be "pro-AI," but they're betting on different regulatory futures.

OpenAI's camp believes speed trumps safety. Move fast, regulate later. Their fear? That premature rules will hand advantages to less scrupulous competitors, particularly from China.

Anthropic's approach: build trust through transparency. Better to accept modest disclosure rules now than face harsher government intervention later.

Beyond New York's 12th District

This isn't really about Alex Bores or even the RAISE Act. It's about establishing precedent. If transparency requirements pass in New York, expect similar bills nationwide. If they fail, the "innovation-first" camp gains momentum.

European regulators are watching closely. The EU's AI Act is already shaping global standards. American companies know that a patchwork of state, federal, and foreign rules helps no one, except perhaps their international competitors.

The Unintended Consequences

Here's the irony: both camps may be creating the very problem they're trying to avoid. By turning AI regulation into a high-stakes political brawl, they risk losing public trust entirely.

Voters see $100 million in corporate spending and wonder: what are these companies trying to hide? The more money flows, the more suspicious the public becomes.
