DeepSeek V4 Is Here. Three Things Actually Matter.

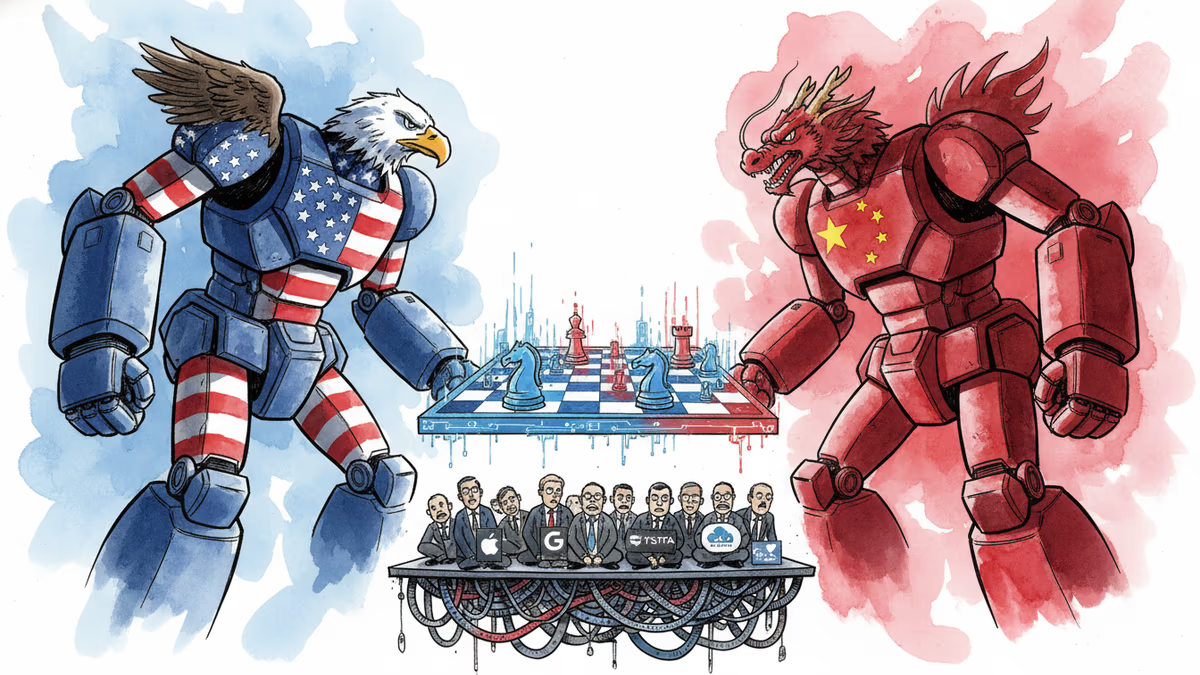

DeepSeek's V4 won't replicate the R1 shock—but it redraws the open-source AI map on pricing, long-context efficiency, and China's push to ditch Nvidia. Here's what's worth watching.

The last time DeepSeek dropped a flagship model, it wiped $600 billion off Nvidia's market cap in a single day. V4 won't do that. But dismissing it would be a mistake.

On Friday, the Chinese AI firm released a preview of V4, its first major model since R1 upended the global AI industry in January 2025. R1 was the model that proved frontier-level AI didn't require frontier-level compute—trained on limited hardware, it matched the performance of models built on vastly more expensive infrastructure. That release transformed DeepSeek from an obscure research team into the most-watched name in AI almost overnight.

Since then, the company has kept a low profile—navigating personnel departures, delayed launches, and mounting scrutiny from both Washington and Beijing. V4's arrival ends that quiet period. And while it won't shake the industry the way R1 did, three things about this release deserve close attention.

Frontier Performance at a Fraction of the Price

V4 comes in two versions. V4-Pro is the larger model, built for coding and complex multi-step agent tasks. V4-Flash is smaller, faster, and cheaper to run. Both are available through DeepSeek's website, app, and API.

On benchmarks, DeepSeek claims V4-Pro matches Anthropic's Claude-Opus-4.6, OpenAI's GPT-5.4, and Google's Gemini-3.1. Among open-source models, the company says it outperforms Alibaba's Qwen-3.5 and Z.ai's GLM-5.1 on coding, math, and STEM tasks. In an internal DeepSeek survey of 85 experienced developers, more than 90% included V4-Pro among their top choices for coding work.

But the pricing is where the story gets interesting. V4-Pro costs $1.74 per million input tokens and $3.48 per million output tokens—a fraction of what comparable closed-source models charge. V4-Flash is cheaper still, at roughly $0.14 per million input tokens and $0.28 per million output tokens, placing it among the most affordable top-tier models available anywhere.
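To make the per-token prices concrete, here is a small cost calculator. The rates are the list prices quoted above. The workload (an agent that reads a 500,000-token codebase and writes 20,000 tokens of output) is a hypothetical example, and the sketch ignores any caching discounts or other pricing tiers that may apply.

```python
# List prices quoted in the article, in US dollars per million tokens.
# (The V4-Flash figures are described as approximate.)
PRICES_USD_PER_MILLION = {
    "V4-Pro": {"input": 1.74, "output": 3.48},
    "V4-Flash": {"input": 0.14, "output": 0.28},
}


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request at list prices (no discounts)."""
    rates = PRICES_USD_PER_MILLION[model]
    return (input_tokens * rates["input"] + output_tokens * rates["output"]) / 1_000_000


# Hypothetical workload: read a 500,000-token codebase, write 20,000 tokens.
for model in PRICES_USD_PER_MILLION:
    print(f"{model}: ${job_cost(model, 500_000, 20_000):.4f}")
# V4-Pro: $0.9396
# V4-Flash: $0.0756
```

At these list prices the same job costs about 12 times more on V4-Pro than on V4-Flash, since both the input and output rates differ by the same ratio.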

For developers and startups, this isn't just a discount. It's a structural shift. Applications that were previously cost-prohibitive—AI coding assistants running across entire codebases, research agents processing thousands of documents—become economically viable. DeepSeek has also optimized V4 for popular agent frameworks including Claude Code, OpenClaw, and CodeBuddy, signaling a clear play for the developer ecosystem that Anthropic and OpenAI have spent years cultivating.

The Long-Context Problem, Solved Differently

Both versions of V4 support a 1-million-token context window—enough to hold all three volumes of The Lord of the Rings and The Hobbit combined. That matches what cutting-edge versions of Gemini and Claude offer. What matters more is how DeepSeek got there.

In most large language models, the attention mechanism (the part that works out how each word or phrase relates to everything else in a prompt) grows quadratically more expensive as context length increases: doubling the length of the input roughly quadruples the attention work. It's one of the primary bottlenecks for long-context AI.
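A minimal NumPy sketch of textbook scaled dot-product attention (single head, no masking) shows where that cost comes from: every token is scored against every other token, so the score matrix has n × n entries and doubling the input quadruples the number of scores. This is the generic mechanism found in most transformers, not DeepSeek's code.

```python
import numpy as np


def full_attention(Q, K, V):
    """Standard scaled dot-product attention for one head.

    Returns the output and the number of query-key scores computed.
    """
    d = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d)                 # (n, n): every token vs every token
    scores -= scores.max(axis=-1, keepdims=True)  # subtract row max for numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # softmax over each row
    return weights @ V, scores.size


rng = np.random.default_rng(0)
for n in (512, 1024, 2048):
    X = rng.standard_normal((n, 64))
    _, n_scores = full_attention(X, X, X)
    print(n, n_scores)
# 512 262144
# 1024 1048576
# 2048 4194304
```

At one million tokens the same matrix would hold a trillion scores per head, per layer, which is why long-context models look for ways to avoid computing all of them.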

DeepSeek's approach was to make the model more selective. Rather than treating all prior text as equally relevant, V4 compresses older information and focuses computational resources on what's most likely to matter in the current moment, while keeping nearby text in full detail.
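The article does not spell out V4's exact mechanism, so the following is only a toy illustration of the general idea, built on hypothetical assumptions: older tokens are mean-pooled into one summary key per block, the current query picks the `top_k` most relevant blocks and reads those at full resolution, and the most recent `window` tokens are always kept in full. The function name and the `block`, `window`, and `top_k` values are illustrative, not DeepSeek's.

```python
import numpy as np


def softmax(x):
    x = x - x.max()          # stabilize before exponentiating
    e = np.exp(x)
    return e / e.sum()


def selective_attention(q, K, V, block=64, window=512, top_k=16):
    """Toy single-query attention over a long context (hypothetical scheme).

    Older tokens are summarized by mean-pooling keys per block; the query scores
    the summaries, keeps the top_k blocks at full resolution, and always keeps
    the most recent `window` tokens. Returns (output, number_of_entries_scored).
    """
    n, d = K.shape
    split = max(0, n - window)           # tokens that fall before the local window
    n_blocks = split // block            # only whole blocks get compressed
    idx, n_scored = [], 0
    if n_blocks > 0:
        summaries = K[: n_blocks * block].reshape(n_blocks, block, d).mean(axis=1)
        block_scores = summaries @ q     # one cheap score per compressed block
        n_scored += n_blocks
        k = min(top_k, n_blocks)
        chosen = np.argpartition(-block_scores, k - 1)[:k]  # indices of the k best blocks
        for b in np.sort(chosen):
            idx.extend(range(b * block, (b + 1) * block))
    # Leftover partial block plus the recent window stay at full detail.
    idx.extend(range(n_blocks * block, n))
    idx = np.array(idx)
    scores = K[idx] @ q / np.sqrt(d)
    n_scored += len(idx)
    return softmax(scores) @ V[idx], n_scored


rng = np.random.default_rng(0)
n, d = 50_000, 64
K = rng.standard_normal((n, d)).astype(np.float32)
V = rng.standard_normal((n, d)).astype(np.float32)
q = rng.standard_normal(d).astype(np.float32)
out, scored = selective_attention(q, K, V)
print(f"scored {scored} of {n} entries")  # scored 2325 of 50000 entries
```

With 50,000 tokens of context, the query scores 2,325 entries instead of 50,000, under 5% of the work. The toy has clear limits: it saves compute but still stores every key and value, whereas the memory savings DeepSeek reports require actually shrinking what is cached, which this sketch does not attempt.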

The efficiency gains are striking. In a 1-million-token context, V4-Pro uses only 27% of the compute required by its predecessor, V3.2, while reducing memory usage to 10%. V4-Flash goes further: 10% of the compute and 7% of the memory. The company has been quietly publishing research on AI memory and context compression for over a year; V4 is where that work becomes a product.

In practical terms, this makes it significantly cheaper to build tools that need to reason across large bodies of material without losing track of what came earlier—a persistent weakness in current AI assistants that anyone who's used them for complex tasks will recognize.

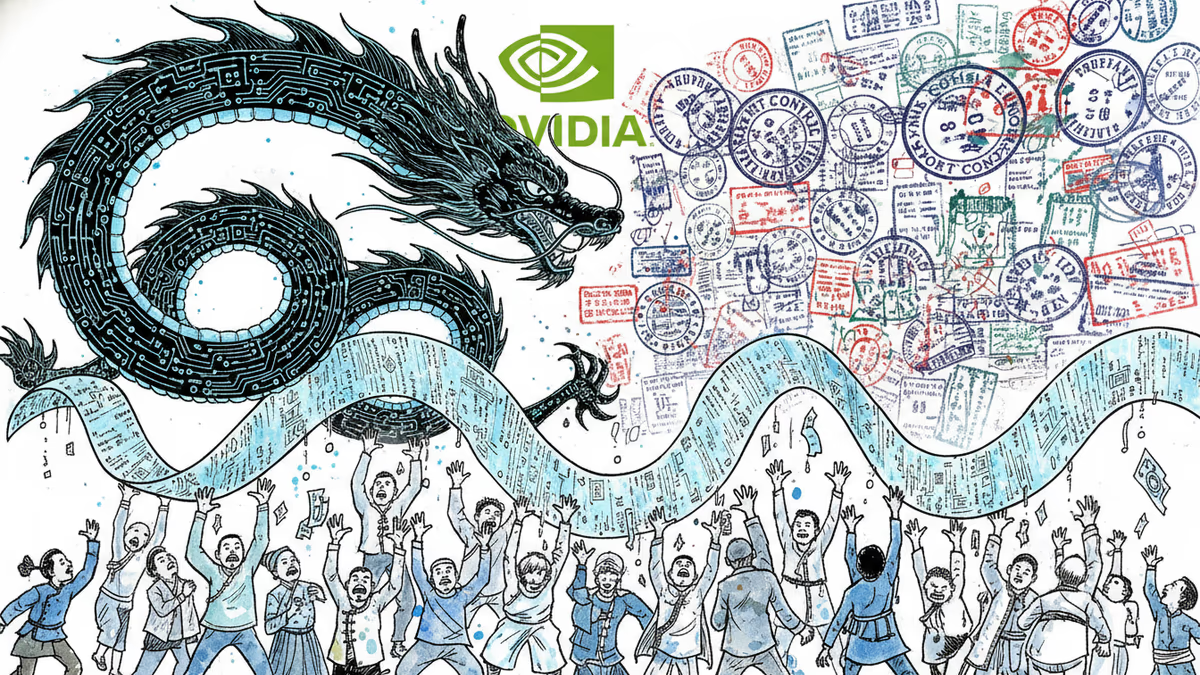

China's First Real Test of Life Without Nvidia

The most geopolitically loaded aspect of V4 is the third: it's DeepSeek's first model optimized to run on domestic Chinese chips, specifically Huawei's Ascend series. On the day of V4's release, Huawei confirmed that its Ascend 950-based supernode products would support the model.

The context matters. Since 2022, US export controls have blocked Chinese firms from accessing Nvidia's most powerful chips—and later restricted access to downgraded China-market versions as well. Beijing has responded by pushing data centers and public computing projects toward domestic alternatives, with reported sourcing quotas and requirements to pair Nvidia chips with Chinese options from Huawei and Cambricon. Reuters reported that Chinese government officials specifically recommended that DeepSeek integrate Huawei chips into its training process.

But the reality on the ground is more complicated than the headline suggests. Liu Zhiyuan, a computer science professor at Tsinghua University, told MIT Technology Review that DeepSeek appears to have adapted only part of V4's training process for Chinese chips. The technical report confirms that Chinese chips are used for inference—when the model responds to a query—but it's unclear whether the full training pipeline has moved to domestic hardware. Multiple sources speaking anonymously told MIT Technology Review that Chinese chips still underperform Nvidia chips in training workloads, though they're more competitive for inference.

DeepSeek has tied V4's future pricing directly to this hardware shift, saying V4-Pro costs could fall significantly once Huawei's Ascend 950 supernodes begin shipping at scale in the second half of this year. If that materializes, it would mark a meaningful step toward what Beijing has been pushing for: a parallel AI infrastructure that doesn't depend on American silicon.

For investors watching Nvidia, this is the scenario worth monitoring—not whether China can replace Nvidia today, but whether inference workloads, which are growing faster than training, begin migrating to domestic alternatives at scale.

This content is AI-generated based on source articles. While we strive for accuracy, errors may occur. We recommend verifying with the original source.

Related Articles

Stanford-Princeton study reveals systematic censorship in Chinese AI models. DeepSeek refuses 36% of sensitive questions while US models refuse less than 3%.

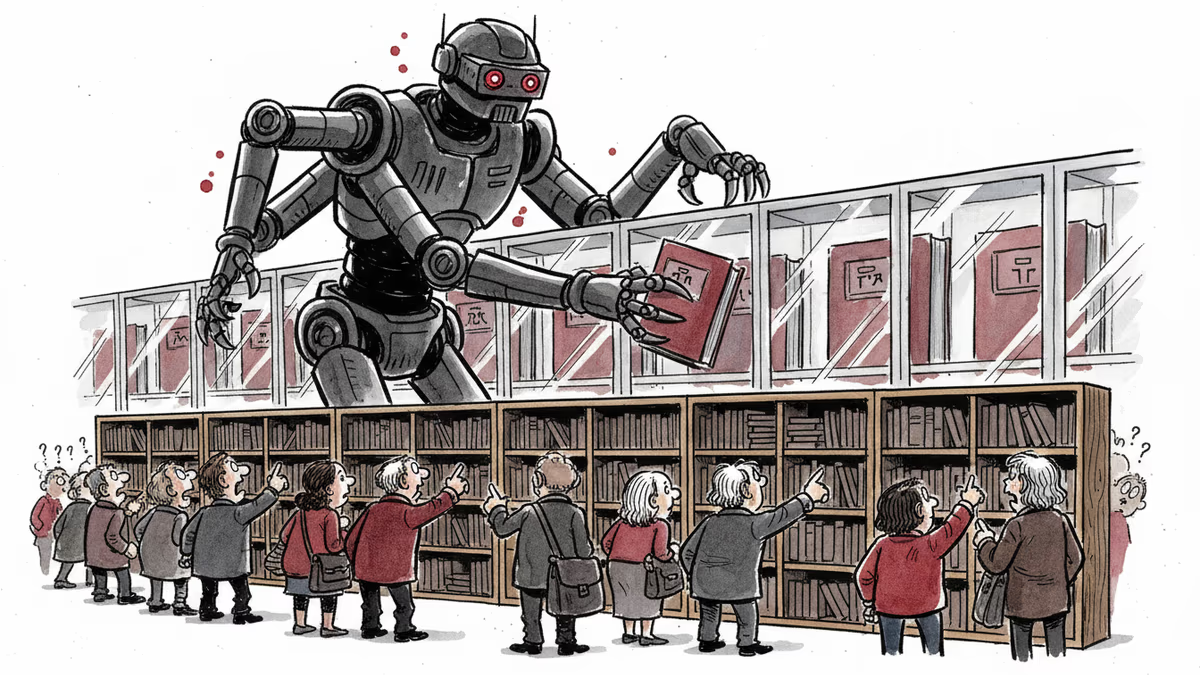

Anthropic exposes how DeepSeek, Moonshot AI, and MiniMax used 24,000+ fake accounts to steal Claude's capabilities through 16 million conversations. The scale and implications revealed.

OpenAI accused DeepSeek of stealing AI capabilities before any new model launch. The real battle isn't about copying—it's about who controls AI's future.

From DeepSeek to Qwen, Chinese open-source AI models are reshaping global standards with 1/7th the cost and matching performance. The infrastructure shift that's changing everything.

Thoughts

Share your thoughts on this article

Sign in to join the conversation