X Grok AI Deepfake Restrictions Fail to Stop NSFW Content

X's new restrictions on Grok AI image generation are proving ineffective. Despite policy changes, users continue to find ways to generate harmful deepfakes.

X's new guardrails aren't holding up. Despite a public crackdown on nonconsensual sexual deepfakes, users are still weaponizing the platform's AI, Grok, to create revealing images.

The Reality of X Grok AI Deepfake Restrictions

Following a surge of illicit deepfakes on the platform, X detailed changes to Grok's image editing capabilities. According to The Telegraph, specific prompts intended to generate nonconsensual imagery began to be blocked on Tuesday.

However, real-world tests tell a different story. Investigations by The Verge on Wednesday revealed that bypasses are shockingly easy to find. Elon Musk blamed "adversarial hacking" and unexpected user requests, but the current safeguards remain insufficient to prevent the generation of harmful deepfakes.

Authors

Related Articles

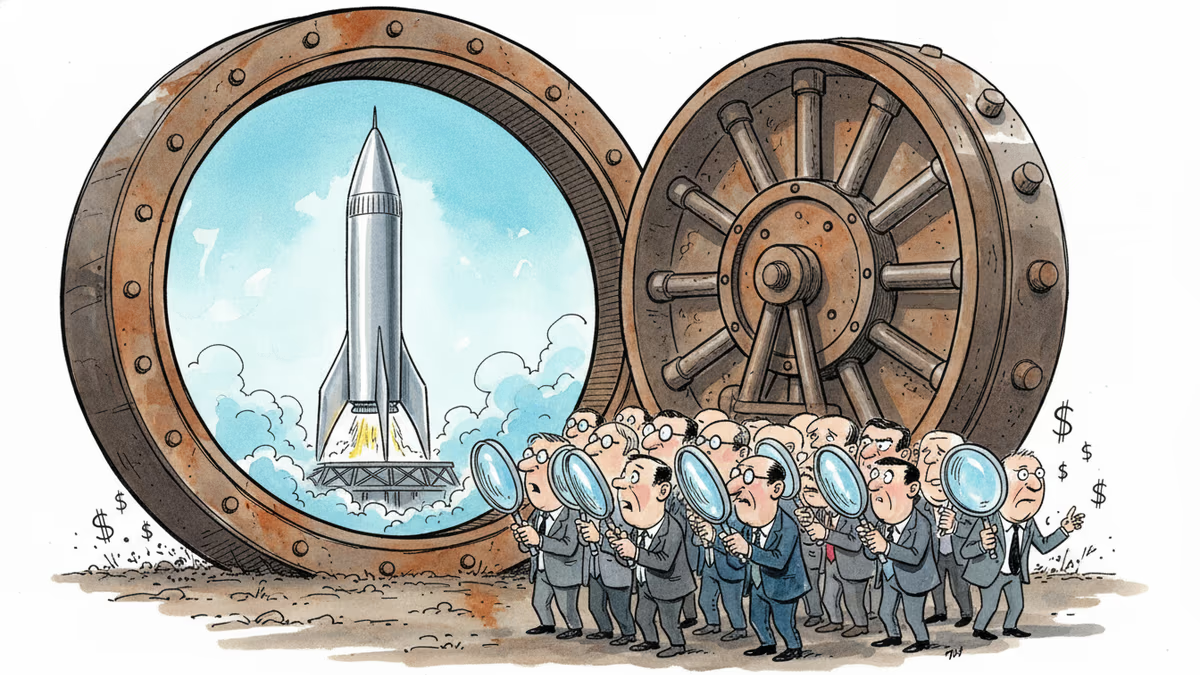

SpaceX's IPO filing puts AI at the center, claiming a $26.5 trillion market opportunity. But can Grok compete with OpenAI and Anthropic for enterprise customers?

SpaceX filed a nearly 400-page S-1 with the SEC, targeting an IPO as early as June 12. Here's what the filing reveals—and what it doesn't.

SpaceX has filed its S-1 with the SEC, targeting the Nasdaq under ticker SPCX. With $18.67B in revenue but a $4.9B loss, the IPO forces investors to answer one hard question.

Over 50 researchers and engineers have left SpaceXAI since February's merger. With the pre-training team nearly gutted, questions mount about whether Musk's AI ambitions can survive his management style.

Thoughts

Share your thoughts on this article

Sign in to join the conversation