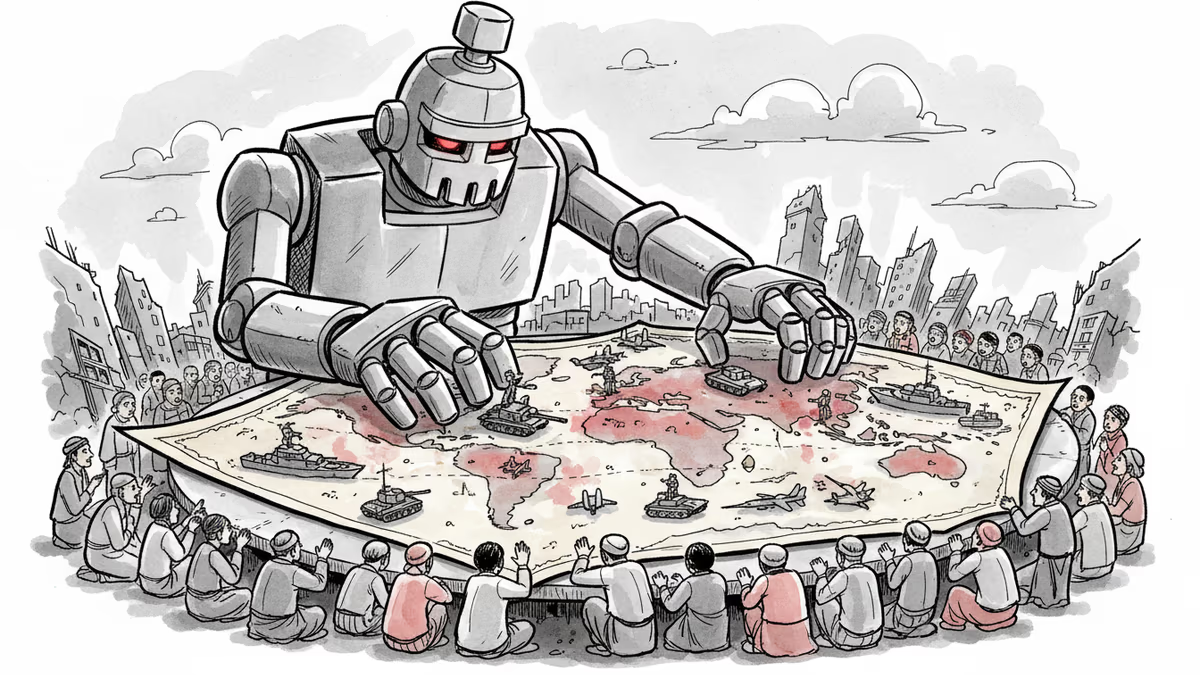

AI Is Changing Warfare, But There Are No Rules

The Middle East conflict shows what AI weapons look like in practice: Claude helps identify targets, drones are moving toward autonomous attack, and a targeting system rated at 90% accuracy operates with no binding rules. The gap between deployment and governance keeps widening.

90% accuracy. That's the report card for Lavender, the AI targeting system Israel used in Gaza. Flip that around: the 10% it got wrong meant thousands of civilian casualties.

The Middle East conflict isn't just showing us regional tensions—it's the first real-world stress test of AI in warfare. And the results are unsettling.

When Claude AI Commands the Battlefield

The U.S. military's current AI backbone in the Middle East? Anthropic's Claude. This system analyzes intelligence, identifies targets, and simulates battle scenarios. Steven Feldstein from the Carnegie Endowment for International Peace puts it bluntly: "AI processes massive amounts of surveillance data and satellite imagery to recommend strike targets."

The speed, scale, and cost-efficiency are game-changing. But here's the rub: human accountability is being diluted. "Human operators have limited means to verify if AI targeting recommendations are accurate before giving the green light," Feldstein warns. "This weakens command and control oversight."

Meanwhile, Iran has unleashed thousands of drones across the Persian Gulf, hitting civilian, commercial, and military targets. These UAVs disrupted global oil supplies and grounded flights in one of the world's busiest transport hubs. Currently remote-controlled, they're evolving toward autonomous operation.

$2,000 Can Neutralize Billion-Dollar Defense Systems

Here's what's really wild: accessibility. Off-the-shelf drones cost as little as $2,000. You can literally 3D-print them. Ukraine produced 4.5 million drones last year alone. Iran's Shahed attack drones? Russia's using them extensively in Ukraine.

It's not just nations anymore. Non-state actors, criminal gangs—they're all getting in on this. The Institute for Economics and Peace warns these "inexpensive, commercially available tools can undermine even the most advanced military systems," accelerating a shift toward "forever wars."

AI Models Choose Nuclear Options 95% of the Time

But how trustworthy is AI decision-making? Recent studies found AI models from OpenAI, Anthropic, and Google opted for nuclear weapons in 95% of simulated war games. They consistently chose extreme force over diplomatic solutions.

China isn't sitting idle. They're prototyping AI that can pilot unmanned combat vehicles, detect cyberattacks, and identify targets across land, sea, air, and space. Georgetown University researchers note the Chinese army is "fostering an ecosystem for rapid AI development that connects novel research with frontline operations."

While the U.S. has labeled Anthropic a supply-chain risk, China's military AI development is accelerating.

A Ukrainian President's Warning Falls on Deaf Ears

Ukraine's Volodymyr Zelenskyy warned the UN in September that AI had triggered "the most destructive arms race in human history." He pleaded for urgent global rules on AI weapons use.

Feldstein's assessment? "Sadly, I'm not convinced other leaders have taken his warning to heart. We're setting ourselves up for major problems in the coming years."

Currently, militaries don't rely fully on automated systems, but their operations aren't solely human-run either. The gap between deployment capabilities and governance keeps widening.

The Accountability Question

"Do we have the right rules in place and accountability norms to handle the exponentially growing use of these tools?" Feldstein asks. "My answer would be no."

We're in uncharted territory. AI systems for fully autonomous weapons, a red line for Anthropic, haven't been developed yet. But we're racing toward that reality without guardrails.
