Apple Music's AI Transparency Tags: Trust or Verify?

Apple Music introduces optional AI transparency tags for music content, sparking debate about self-regulation versus detection in the streaming era.

A Reddit user's mockup became reality in 48 hours. Last week, someone posted a concept for Apple Music to distinguish AI-generated tracks. This Wednesday, Apple announced they're actually doing it.

The Honor System Approach

Apple Music is rolling out "transparency tags" that let record labels and distributors flag AI-generated or AI-assisted content when uploading to the platform. According to Music Business Worldwide, the new metadata system covers four distinct areas: artwork, track (music), composition (lyrics), and music videos.

But here's the catch: it's entirely voluntary. Labels choose whether to disclose AI usage, which makes this an honor system in an industry where competitive advantage often outweighs transparency.

Spotify is taking a similar hands-off approach, while Deezer is building in-house AI detection tools, though accuracy remains a challenge. The divide raises a fundamental question: should platforms police AI content or trust creators to self-report?

Industry's Delicate Dance

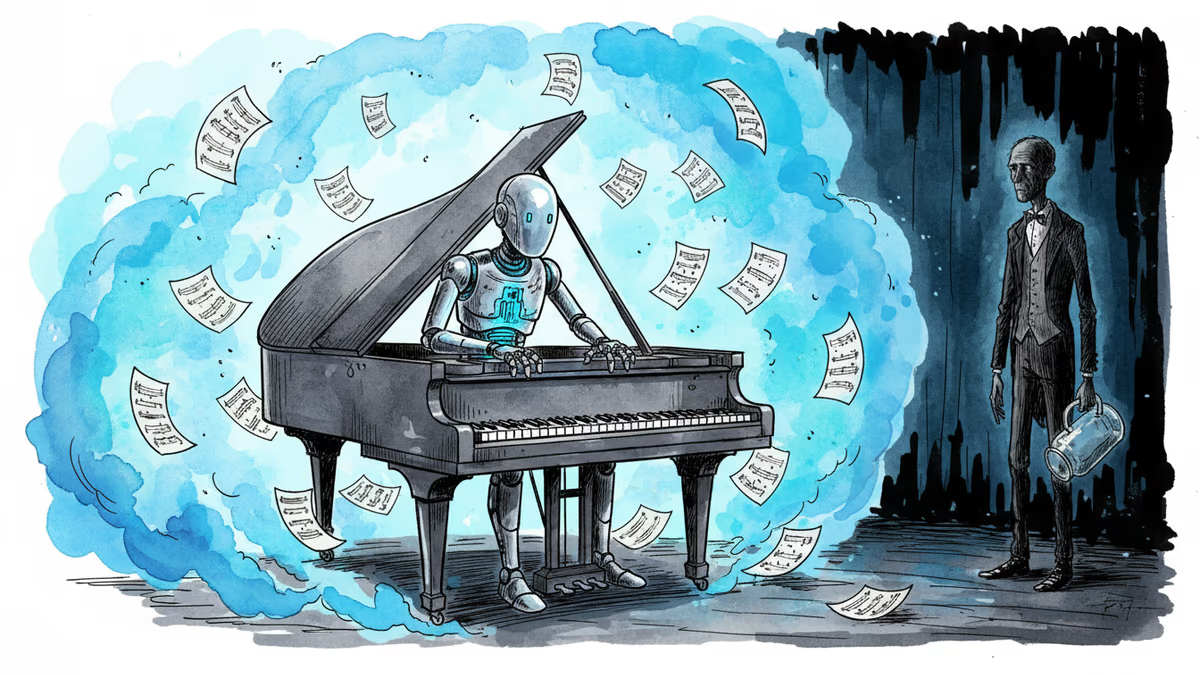

The music industry's relationship with AI is complicated. Major labels embrace AI tools for cost-cutting while simultaneously fighting AI models trained on their catalogs without permission. Artists welcome AI as a creative assistant but fear replacement by synthetic performers.

Apple's solution reflects this nuanced reality. Rather than banning AI music or allowing unlimited use, the company is betting on transparency as the middle ground. Disclosure puts the choice in listeners' hands while letting Apple avoid the thorny business of content moderation.

For musicians, this creates a new dilemma. Full disclosure might hurt commercial prospects, but getting caught using undisclosed AI could damage credibility. The tags essentially create a new category: "AI-assisted" music that exists somewhere between human and machine creation.

The Detection Dilemma

The voluntary nature of these tags exposes a critical weakness. In competitive markets, can we really expect honest disclosure? Deezer's attempt at automated detection shows the technical challenges, but relying on self-reporting seems equally flawed.

This mirrors broader tech industry patterns. Social media platforms initially relied on user reporting for harmful content before building detection systems. Search engines trusted website owners to accurately describe their content before developing ranking algorithms.

The question isn't whether Apple's system will work perfectly. It's whether transparency, even imperfect transparency, moves the industry in the right direction.


Related Articles

From a niche experiment in 2018 to a mainstream disruption in 2026, AI-generated music is forcing the industry to rethink creativity, copyright, and compensation.

iOS 26.4 brings AI-generated playlists to Apple Music. It's convenient, but it raises real questions about taste, discovery, and who controls what you hear.

Czech figure skaters used AI-generated music at Olympics, sparking debate about creativity, authenticity, and the future of artistic expression in competitive sports.

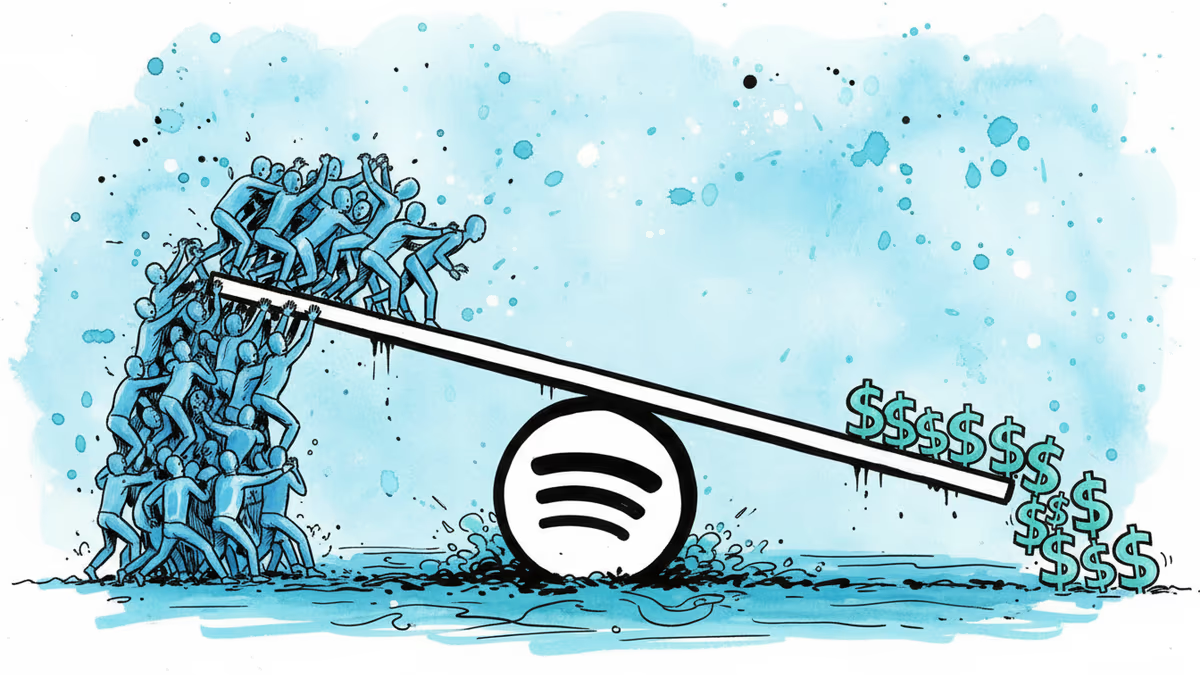

Spotify posted record Q4 user growth reaching 751M monthly users, but ad revenue declined 4%. New co-CEOs face the challenge of balancing free features with profitability.
