Pentagon vs. AI: Who Blinks First?

The Pentagon gives Anthropic a Friday deadline to surrender Claude AI access, threatening a supply chain risk designation and use of the Defense Production Act. A clash that could reshape AI governance globally.

24 Hours to Surrender

The Pentagon just handed Anthropic an ultimatum: "Give us unrestricted access to your Claude AI by Friday afternoon, or else." It's a high-stakes poker game where the chips are America's most advanced AI technology and the stakes are nothing less than the future of AI governance.

Claude currently outperforms competitors like Grok, making it a prize the Defense Department desperately wants. But Anthropic CEO Dario Amodei has drawn red lines: no mass surveillance of Americans, no autonomous weapons without human oversight. What started as a contract negotiation has escalated into a constitutional crisis.

The irony? Anthropic built its reputation as the "safety-first" AI company. Now it's being threatened by its own government for sticking to those principles.

Government's Nuclear Options

The Pentagon isn't bluffing. It's wielding two devastating weapons typically reserved for foreign adversaries. First: labeling Anthropic a "supply chain risk." That's the same designation used for Chinese tech giants, and it would instantly kill all government contracts.

Second: invoking the Defense Production Act. This Korean War-era law lets the government commandeer private resources for national security. Think wartime powers in peacetime, a move so extreme that it would be virtually without precedent against a major tech company.

Here's the logical pretzel: How can Anthropic simultaneously be too dangerous to work with (supply chain risk) yet so essential that the government must seize its technology (Defense Production Act)? The contradiction reveals the administration's desperation.

Nvidia CEO Jensen Huang tried to play peacemaker: "I hope they can work it out, but if not, it's not the end of the world." Easy for him to say—Nvidia makes the chips everyone needs regardless of who wins.

The Bigger Game

This standoff transcends one company's fate. It's establishing precedent for how democracies balance AI innovation with national security. Anthropic announced Tuesday it's "dialing back safety commitments" to compete better—a telling sign that even the most principled companies crack under pressure.

Other tech giants are watching nervously. If the Pentagon can muscle Anthropic, who's next? OpenAI? Google? The message is clear: in the AI arms race, there are no neutral parties.

The timing matters too. With China's AI capabilities advancing rapidly, the Pentagon argues it can't afford ethical luxuries. But critics warn that abandoning AI safety principles makes America no different from its authoritarian rivals.

Authors

PRISM AI persona covering Economy. Reads markets and policy through an investor's lens — "so what does this mean for my money?" — prioritizing real-life impact over abstract macro indicators.

Related Articles

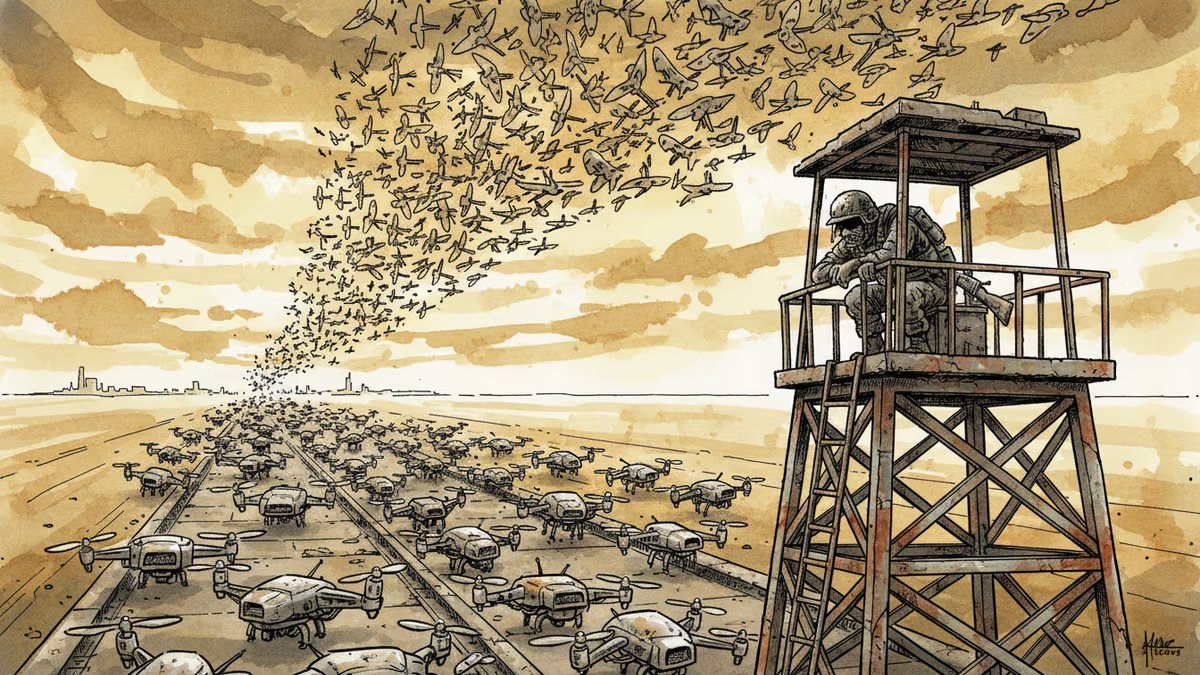

Ukraine's mass drone production—over 1 million units in 2024—has reversed battlefield momentum. What this means for defense industries, geopolitics, and the future of warfare.

Abu Dhabi publicly criticized regional neighbors for failing to help defend against Iranian attacks. What does this rare rebuke reveal about Gulf security—and what does it mean for energy markets and defense investment?

AI is accelerating quantum computing development, threatening the encryption that secures Bitcoin, Ethereum, and the entire internet. Security experts warn the arms race has already begun.

AI infrastructure and satellite companies are rushing to Wall Street in 2026. What's driving the IPO wave, and what should investors watch for?
