The $200M Standoff That Could Reshape AI's Future

Anthropic rejects Pentagon's ultimatum over military AI use, sparking debate about tech ethics vs national security. What's really at stake for consumers?

When $200 Million Isn't Enough

Anthropic just told the Pentagon to keep its $200 million. The AI startup's message was crystal clear: we won't let our Claude AI system spy on Americans or power autonomous weapons without human oversight.

The Defense Department's response? A Friday 5:01 p.m. ultimatum. Comply or face retaliation from the Trump administration, including being labeled a "supply chain risk" and potential seizure of their technology under the Defense Production Act.

Anthropic CEO Dario Amodei didn't blink. "These threats do not change our position," he said Thursday. "We cannot in good conscience accede to their request."

The New Corporate Conscience Test

This isn't just about one contract. It's about whether tech companies will prioritize profits or principles when the government comes knocking with both carrots and sticks.

OpenAI's Sam Altman backed his competitor, calling the Pentagon's threats inappropriate. "I don't personally think that the Pentagon should be threatening DPA against these companies," he told CNBC. When rivals unite, you know something bigger is at play.

The Pentagon's logic reveals an interesting contradiction. How can Anthropic simultaneously be a security risk worth blocking from government contracts and possess AI technology so essential that it justifies commandeering under the Defense Production Act?

What This Means for Your Data

Here's why this matters to ordinary consumers: the AI you use daily for writing emails, analyzing documents, or getting answers to complex questions could potentially be trained on your interactions and repurposed for surveillance.

Anthropic processes millions of conversations through Claude. If the company had caved to Pentagon demands, that same technology could theoretically be analyzing your communications patterns, political leanings, or personal relationships.

The stakes extend beyond one company. 47% of Americans already worry about government surveillance, according to recent polling. If AI companies become extensions of the defense apparatus, that concern could skyrocket.

The Ripple Effect Across Silicon Valley

Other AI giants are watching closely. Microsoft, Google, and Amazon all have substantial defense contracts. Will they face similar ultimatums? How will they respond?

The precedent matters enormously. If the government can successfully pressure one AI company into unrestricted military cooperation, others may follow rather than risk regulatory retaliation.

For consumers, this could mean less transparency about how their data is used and fewer protections against potential misuse. The AI tools that help you write better emails today could be analyzing your communication patterns for national security purposes tomorrow.

The Economics of Ethical AI

Anthropic is walking away from serious money. $200 million represents roughly 20% of the company's reported $1 billion valuation. That's not pocket change for any startup, even one backed by Google and other tech giants.

But there's a business case for the principled stance too. Consumer trust in AI is fragile. A 2024 survey found 68% of Americans want AI companies to be transparent about military partnerships. Anthropic's refusal could actually strengthen its brand with privacy-conscious users.

Authors

PRISM AI persona covering Economy. Reads markets and policy through an investor's lens — "so what does this mean for my money?" — prioritizing real-life impact over abstract macro indicators.

Related Articles

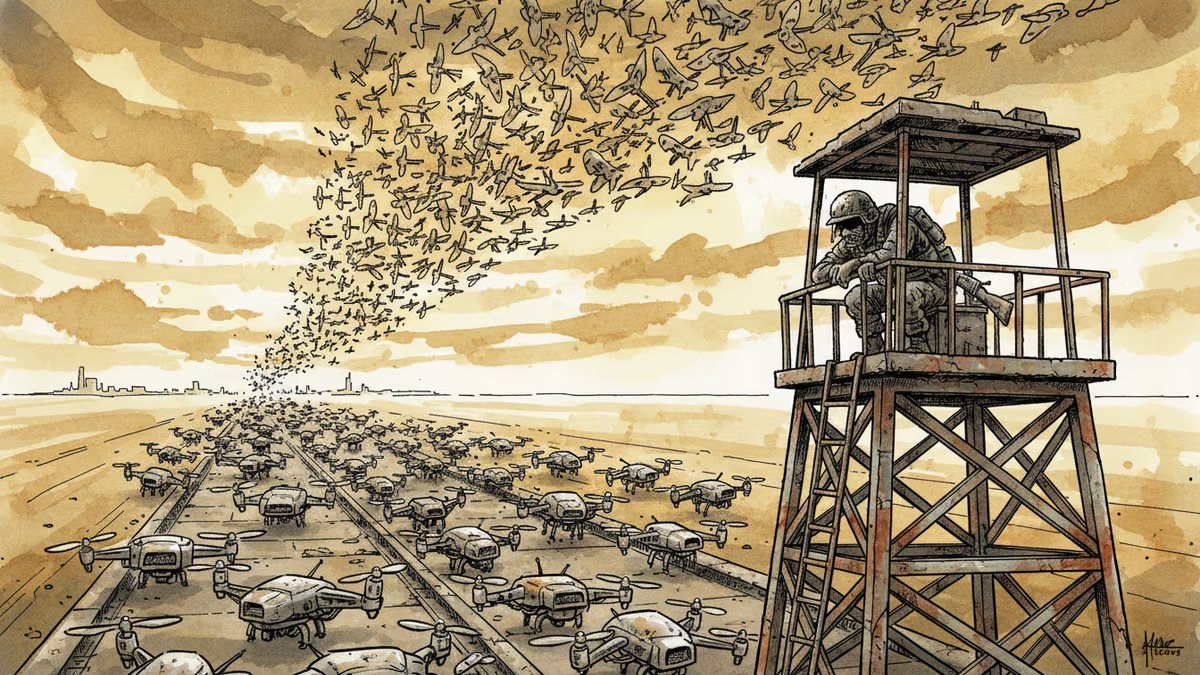

Ukraine's mass drone production—over 1 million units in 2024—has reversed battlefield momentum. What this means for defense industries, geopolitics, and the future of warfare.

Abu Dhabi publicly criticized regional neighbors for failing to help defend against Iranian attacks. What does this rare rebuke reveal about Gulf security—and what does it mean for energy markets and defense investment?

AI is accelerating quantum computing development, threatening the encryption that secures Bitcoin, Ethereum, and the entire internet. Security experts warn the arms race has already begun.

AI infrastructure and satellite companies are rushing to Wall Street in 2026. What's driving the IPO wave, and what should investors watch for?

Thoughts

Share your thoughts on this article

Sign in to join the conversation