AlphaGo Can't Beat a Matchstick Game. That's a Problem.

A new paper proves that the self-play training method behind AlphaGo and AlphaZero structurally fails on a whole category of games. What that means for AI systems making real-world decisions.

The World's Most Powerful Game-Playing AI Has a Blind Spot

The rules take 30 seconds to learn. Two players. A pile of matchsticks arranged in rows. Take turns removing some from a single row. Whoever is forced to take the last one loses. It's called Nim, and mathematicians solved it more than a century ago. Yet a new paper in the journal Machine Learning demonstrates that the same training method that produced AlphaGo, the AI that defeated the world's best Go player, structurally fails to master it.
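To make "solved" concrete, here is a minimal Python sketch of the classical solution for the last-taker-loses version described above. It assumes a move removes one or more matchsticks from a single row. The function names are illustrative, and nothing here is taken from the paper itself.

```python
from functools import reduce
from operator import xor


def nim_sum(heaps):
    """XOR of all row sizes, the quantity the classical solution is built on."""
    return reduce(xor, heaps, 0)


def is_winning_misere(heaps):
    """True if the player to move can force a win when taking the last stick loses."""
    if all(h <= 1 for h in heaps):
        # Only single sticks remain: the mover wins with an even number of them.
        return sum(heaps) % 2 == 0
    # At least one row has two or more sticks: same test as ordinary Nim.
    return nim_sum(heaps) != 0


def best_move_misere(heaps):
    """Return (row_index, new_row_size) for a winning move, or None if there is none."""
    heaps = list(heaps)
    for i, h in enumerate(heaps):
        for new_size in range(h):
            after = heaps[:i] + [new_size] + heaps[i + 1:]
            # A good move leaves the opponent in a position they cannot win.
            if not is_winning_misere(after):
                return i, new_size
    return None
```

Calling `best_move_misere([3, 4, 5])` returns a row index and the size to cut that row down to. The whole strategy fits in a few lines of arithmetic, which is what makes the self-play failure notable.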

This isn't a bug. It's a feature of the method itself. And that distinction matters far beyond any board game.

How AlphaGo Learned to Win—and Why That's Not Enough

DeepMind's Alpha series represented a genuine leap in AI capability. The core idea, taken furthest in AlphaGo Zero and AlphaZero, was elegant: instead of training on human games, let the AI play against itself millions of times, gradually improving through trial and error. No human expertise required. No labeled data. Just the rules of the game and time.

It worked spectacularly. AlphaZero mastered chess, Go, and shogi—games that had consumed human grandmasters for centuries—within days of training. The approach seemed almost universally applicable.

But cracks appeared quietly. Researchers began identifying specific Go board positions where AlphaGo-style programs would consistently lose to players far below their supposed skill level. At first, these looked like isolated quirks: edge cases, or gaps in the training data. The new paper argues otherwise. It provides a mathematical proof that self-play training fails across an entire category of games, not just in specific positions. Nim is the clearest example, but the failure condition is defined structurally: certain game structures create what the researchers describe as "traps" in the training landscape, where an AI learns to beat its current self without ever learning the optimal strategy.

In plain terms: the AI gets better at winning against itself, but that doesn't mean it's getting better at the game.
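In a solved game like Nim, that gap can be measured directly by scoring a policy against the known solution instead of against itself. The sketch below is an illustration under stated assumptions, not the paper's method. `greedy_policy` is a hypothetical stand-in for a learned policy, `audit` is an invented helper name, and the rules assumed are the last-taker-loses version with one row per move.

```python
from functools import reduce
from itertools import product
from operator import xor


def is_winning_misere(heaps):
    """Exact solution: can the player to move force a win (last taker loses)?"""
    if all(h <= 1 for h in heaps):
        return sum(heaps) % 2 == 0
    return reduce(xor, heaps, 0) != 0


def greedy_policy(heaps):
    """Hypothetical stand-in for a learned policy: empty the largest row."""
    i = max(range(len(heaps)), key=lambda k: heaps[k])
    return i, 0


def audit(policy, n_heaps=3, max_size=5):
    """Fraction of winnable positions in which the policy gives the win away."""
    winnable = blunders = 0
    for heaps in product(range(max_size + 1), repeat=n_heaps):
        if sum(heaps) == 0 or not is_winning_misere(heaps):
            continue  # skip finished games and positions that are already lost
        winnable += 1
        i, new_size = policy(heaps)
        after = heaps[:i] + (new_size,) + heaps[i + 1:]
        if is_winning_misere(after):  # the opponent can now force a win
            blunders += 1
    return blunders / winnable
```

A call such as `audit(greedy_policy)` returns a blunder rate between 0 and 1. A policy's win rate against a copy of itself carries no such information, because both sides share the same blind spots.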

From Matchsticks to Medicine

The obvious question is: who cares if a game AI can't play Nim?

The less obvious answer: anyone who relies on AI systems trained with similar methods to make consequential decisions. Reinforcement learning—the broader family of techniques that includes self-play—is now embedded in drug discovery pipelines, financial trading algorithms, logistics optimization, and military planning tools. These domains share a structural resemblance to games: sequences of decisions, feedback loops, optimization toward a goal.

If self-play training creates systematic blind spots—not random errors, but patterned failures tied to specific problem structures—then the risk isn't that AI occasionally gets things wrong. It's that AI gets things wrong in predictable, repeatable ways that humans might not notice until the stakes are high.

A misdiagnosis here. A mispriced risk there. A tactical recommendation that looks locally optimal but is globally wrong. The Nim paper doesn't prove these failures are happening in deployed systems. But it provides the mathematical vocabulary to ask the question seriously.

Who's Watching the Watchdog?

For AI developers, this research is uncomfortable but actionable. A mathematically defined failure category is actually useful—it tells you where to look, what to test, and potentially how to redesign training pipelines for high-stakes applications.

For regulators, the implications are harder to ignore. The EU's AI Act already mandates conformity assessments for high-risk AI systems. A growing body of academic literature identifying structural failure modes in leading training methods gives regulators concrete grounds to require adversarial testing—not just performance benchmarks, but deliberate attempts to find the categories of problems where a system breaks.

For enterprises deploying AI, the practical takeaway is this: performance metrics measured on the training distribution don't tell you where the edges are. Superhuman Go programs looked unbeatable until someone found the positions they couldn't handle. The question isn't whether your AI system is accurate on average. It's whether anyone has seriously tried to find what it consistently gets wrong.

And for the average person increasingly subject to AI-assisted decisions—in hiring, lending, healthcare, content moderation—the unsettling implication is that the errors affecting them may not be random noise. They may be structural. Systematic. And invisible to the organizations deploying the systems.

Authors

Related Articles

From hyper-personalized phishing to deepfake video calls, AI has turbocharged cybercrime. Meanwhile, hospitals adopt AI tools whose patient benefits remain unproven. What does this mean for trust?

Cohere and Aleph Alpha are merging to build a transatlantic AI challenger valued at $20 billion. Their pitch: sovereignty, not just performance. Can it work?

Google is committing up to $40 billion to Anthropic, a direct AI competitor. The deal reveals how the real AI arms race isn't about models — it's about who controls the infrastructure beneath them.

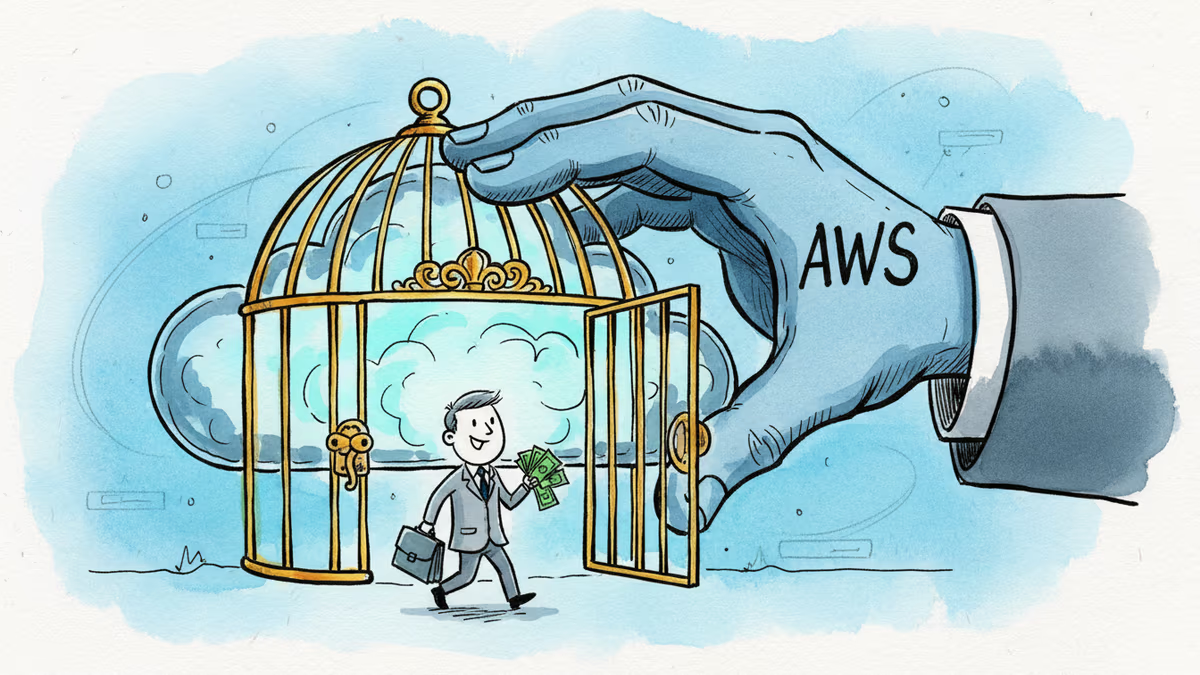

Amazon's fresh $5B investment in Anthropic brings its total to $13B. But the real story is a $100B AWS spending pledge and a bet on Amazon's own AI chips over Nvidia.

Thoughts

Share your thoughts on this article

Sign in to join the conversation