Your Phone Just Ordered Dinner Without You

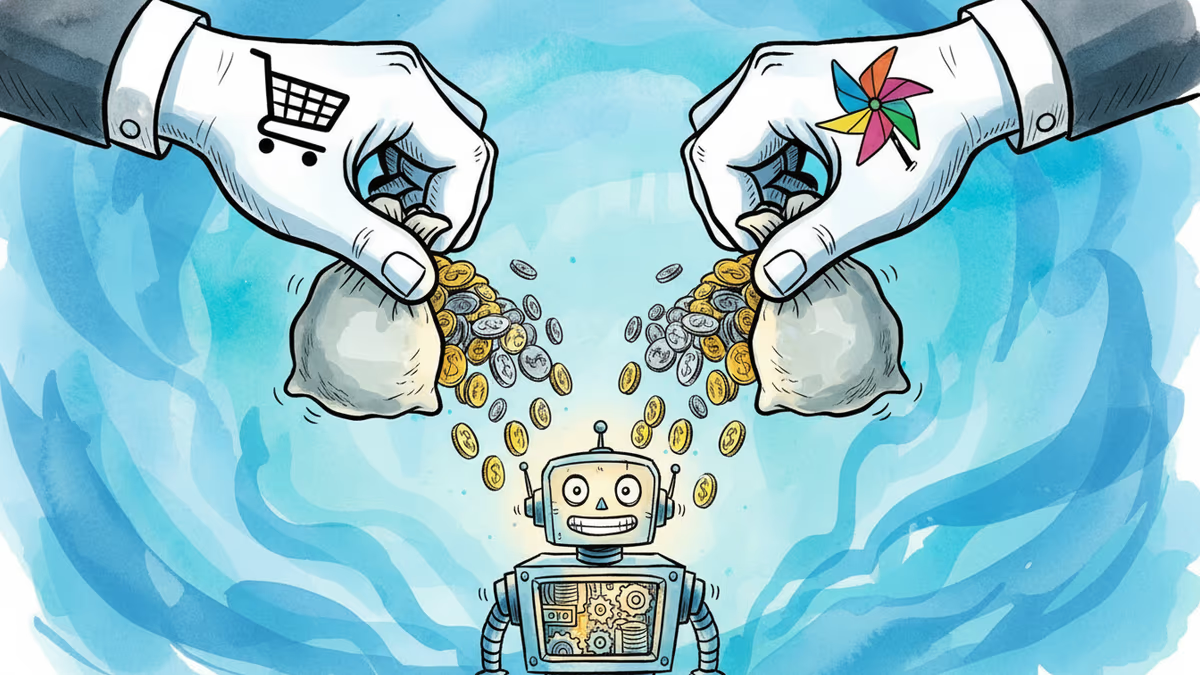

Google and Samsung have launched a beta of Gemini-powered app automation on the Galaxy S25 Ultra, letting AI handle food delivery and rideshare orders on your behalf. What does it mean when your phone starts acting for you?

You say "order me a ride to the airport." Your phone opens the app, selects the car type, confirms the pickup — and you never touch the screen. That's not a concept demo. It's live on the Samsung Galaxy S25 Ultra right now, in beta.

What's Actually Happening

A few weeks ago, Google and Samsung jointly announced that Gemini would gain the ability to operate apps on your behalf: not just suggest actions, but navigate app interfaces itself inside a virtual window and complete tasks end-to-end. The initial focus: food delivery and rideshare apps. The pitch: tell Gemini what you want in plain language, and it handles the rest.

When the S25 Ultra first shipped, the feature wasn't active. Then a beta update rolled it out, and reviewers who tested it described the same disorienting experience: watching the phone use itself, with the familiar app UI moving through menus and no human hand involved. Technically expected. Visually strange.

This is the category the industry has been calling "AI agents" — and after years of hype, it's showing up in a real product update on a real device.

Why This Moment Matters

The timing isn't accidental. The AI assistant race has quietly shifted gears. The first wave was about answering questions. The current wave is about doing things. OpenAI's Operator, Anthropic's Claude agent features, Apple's upgraded Siri — every major player is moving toward the same destination: AI that acts, not just advises.

The question was always who would get there first in a form people actually use daily. A flagship Android phone, backed by an ecosystem of hundreds of millions of users, is a meaningful beachhead. Google gets Gemini embedded deeper into Android's core. Samsung gets a software differentiator at a time when premium smartphone hardware is increasingly hard to tell apart. Both win, at least on paper.

Three Ways to Read This

For consumers, the immediate appeal is frictionless convenience. Fewer taps, faster outcomes. If you're already trusting apps with your location, payment info, and daily habits, delegating the tapping itself feels like a small additional step.

For app developers and platforms, this is more complicated. Gemini's automation requires apps to play along — either through formal integration or by navigating standard UI elements. Platforms that control high-value transactions (think DoorDash, Uber, Amazon) have real incentive to cooperate. But they also have reason to be cautious: an AI layer sitting between the user and the app means less direct engagement, potentially less upselling, and a new dependency on Google's goodwill.

For privacy advocates and regulators, this is the scenario they've been flagging for years. An AI agent that operates across multiple apps doesn't just see one slice of your behavior — it sees the whole picture. When you eat, where you go, how often, with what payment method. The EU's AI Act and ongoing scrutiny of big tech data practices in the US make this an area worth watching closely. Whether regulators treat Gemini's automation as a novel risk or an extension of existing data practices is an open question.

The Deeper Trade-Off

There's a version of this technology that's genuinely useful and relatively benign — a shortcut layer that saves you 30 seconds of tapping. There's another version where the AI learns your patterns so thoroughly that it starts anticipating actions you haven't asked for yet, and the line between assistant and influence becomes blurry.

Right now, Gemini's automation is firmly in the first category: it acts when you tell it to, in a defined scope. But the architecture being built — an AI with cross-app visibility and action capability — is the same architecture that enables the second version. The difference is a matter of policy decisions and product choices that haven't been made yet.

The beta label matters here. This is Google and Samsung learning how users actually interact with agent-style AI in the wild. The behavior of the final product will be shaped by what they observe now.


